1:11:40

Stanford CS25: V2 I Introduction to Transformers w/ Andrej Karpathy

Stanford Online

·

May 10, 2026

Transcript

0:05

Hi, everyone.

0:06

Welcome to CS 25

Transformers United V2.

0:09

This was a course that

was held at Stanford

0:11

in the winter of 2023.

0:13

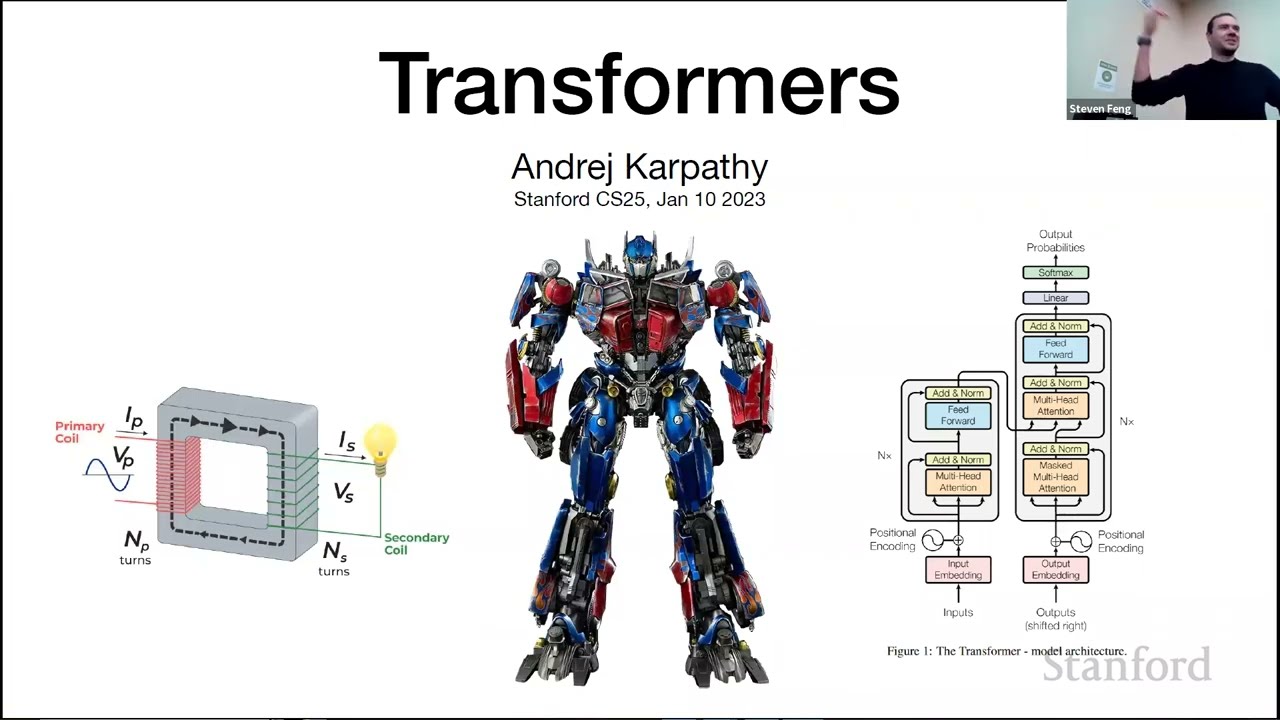

This course is not

about robots that

0:14

can transform into cars as

this picture might suggest.

0:17

Rather, it's about

deep learning models

0:18

that have taken

the world by storm

0:21

and have revolutionized

the field of AI and others.

0:23

Starting from natural

language processing,

0:25

transformers have

been applied all over,

0:27

computer vision, reinforcement

learning, biology, robotics,

0:30

et cetera.

0:31

We have an exciting set

of videos lined up for you

0:34

with some truly fascinating

speakers, talks, presenting

0:37

how they're applying

transformers

0:39

to their research in

different fields and areas.

0:44

We hope you'll enjoy and

learn from these videos.

0:47

So without any further

ado, let's get started.

0:52

This is a purely

introductory lecture.

0:54

And we'll go into the building

blocks of transformers.

0:58

So first, let's start with

introducing the instructors.

1:03

So for me, I'm currently on a

temporary deferral from the PhD

1:06

program, and I'm leading AI at a

robotics startup, Collaborative

1:09

Robotics, which is working on

some general purpose robots,

1:13

somewhat like [INAUDIBLE].

1:14

And I'm very passionate about

robotics and building FSG

1:18

learning algorithms.

1:19

My research interests are

in reinforcement learning,

1:21

computer vision, and

remodeling, and I

1:23

have a bunch of

publications in robotics,

1:25

autonomous driving,

and other areas.

1:28

My undergrad was at Cornell.

1:29

If someone is from Cornell,

so nice to [INAUDIBLE].

1:33

So I'm Stephen, currently

a first-year CS PhD here.

1:37

Previously did my master's at

CMU and undergrad at Waterloo.

1:40

I'm mainly into NLP research,

anything involving language

1:43

and text, but more

recently, I've

1:45

been getting more into computer

vision as well as [INAUDIBLE]

1:48

And just some stuff I do

for fun, a lot of music

1:51

stuff, mainly piano.

1:52

Some self-promo of what I post

a lot on my Insta, YouTube,

1:55

and TikTok, so if you

guys want to check it out.

1:58

My friends and I are also

starting a Stanford piano club,

2:01

so if anybody's interested,

feel free to email

2:04

or DM me for details.

2:07

Other than that, martial arts,

bodybuilding, and huge fan

2:11

of k-dramas, anime,

and occasional gamer.

2:14

[LAUGHS]

2:18

OK, cool.

2:19

Yeah, so my name is Rylan.

2:20

Instead of talking

about myself, I just

2:21

want to very briefly

say that I'm super

2:23

excited to take this class.

2:24

I took it the last time--

sorry-- to teach this.

2:26

Excuse me.

2:26

I took it the last

time it was offered.

2:28

I had a bunch of fun.

2:30

I thought we brought in a

really great group of speakers

2:32

last time.

2:33

I'm super excited

for this offering.

2:35

And yeah, I'm thankful

that you're all here,

2:37

and I'm looking forward to a

really fun quarter together.

2:39

Thank you.

2:39

Yeah, so fun fact, Rylan was

the most outspoken student

2:42

last year.

2:43

And so if someone wants to

become an instructor next year,

2:45

you know what to do.

2:46

[LAUGHTER]

2:50

OK, cool.

2:53

Let's see.

2:54

OK, I think we

have a few minutes.

2:56

So what we hope you will learn

in this class is, first of all,

2:59

how do transformers

work, how they

3:02

are being applied,

just beyond NLP,

3:04

and nowadays, like they

are pretty [INAUDIBLE]

3:06

them everywhere in

AI machine learning.

3:10

And what are some new and

interesting directions

3:12

of research in these topics.

3:17

Cool, so this class is

just an introductory.

3:19

So we're just talking about

the basics of transformers,

3:22

introducing them, talking about

the self-attention mechanism

3:24

on which they're founded.

3:26

And we'll do a deep dive

more on models like BERT

3:30

to GPT, stuff like that.

3:32

So with that, happy

to get started.

3:35

OK, so let me start with

presenting the attention

3:38

timeline.

3:40

Attention all started

with this one paper.

3:43

[INAUDIBLE] by

Vaswani et al in 2017.

3:46

That was the beginning

of transformers.

3:49

Before that, we had

the prehistoric era,

3:51

where we had models

like RNNs, LSTMs,

3:55

and simple attention

mechanisms that didn't work

3:57

or [INAUDIBLE].

3:59

Starting 2017, we saw this

explosion of transformers

4:02

into NLP, where people started

using it for everything.

4:07

I even heard this

quote from Google.

4:08

It's like our performance

increased every time

4:10

we [INAUDIBLE]

4:11

[CHUCKLES]

4:15

For the [INAUDIBLE]

after 2018 to 2020,

4:17

we saw this explosion

of transformers

4:18

into other fields like vision,

a bunch of other stuff,

4:23

and like biology as a whole.

4:25

And in last year,

2021 was the start

4:28

of the generative era, where we

got a lot of generative modeling,

4:31

started models like

Codex, GPT, DALL-E,

4:35

Stable Diffusion,

or a lot of things

4:37

happening in generative modeling.

4:40

And we started scaling up in AI.

4:44

And now, the present.

4:45

So this is 2022 and

the start of '23.

4:49

And now we have models

like ChatGPT, Whisper,

4:53

a bunch of others.

4:54

And we're scaling onwards

without splitting up,

4:57

so that's great.

4:58

So that's the future.

5:01

So going more into this,

so once there were RNNs.

5:06

So we had Seq2Seq

models, LSTMs, GRU.

5:10

What worked there was that they

were good at encoding history,

5:13

but what did not work was they

didn't encode long sequences

5:17

and they were very bad

at encoding context.

5:21

So consider this example.

5:24

Consider trying to predict

the last word in the text,

5:27

"I grew up in France,

dot, dot, dot.

5:29

I speak fluent ___."

5:31

Here, you need to understand

the context for it

5:33

to predict French, and

attention mechanism

5:36

is very good at that, whereas

if they're just using LSTMs,

5:39

it doesn't work that well here.

5:42

Another thing transformers

are good at is,

5:46

more based on content, is

also context prediction

5:50

is like finding attention maps.

5:52

If I have something

like a word like "it,"

5:56

what noun does it correlate to.

5:57

And we can give a

property attention

6:01

on one of the

possible activations.

6:05

And this works better

than existing mechanisms.

6:10

OK, so where we were in 2021,

we were on the verge of takeoff.

6:16

We were starting to realize

the potential of transformers

6:18

in different fields.

6:20

We solved a lot of

long sequence problems

6:23

like protein folding,

AlphaFold, offline RL.

6:28

We started to see few-shot,

zero-shot generalization.

6:31

We saw multimodal

tasks and applications

6:34

like generating

images from language.

6:36

So that was DALL-E. And

it feels like [INAUDIBLE].

6:43

And this was also a

talk on transformers

6:45

that you can watch on YouTube.

6:48

Yeah, cool.

6:51

And this is where we were

going from 2021 to 2022,

6:55

which is we have gone from

the version of [INAUDIBLE]

6:58

And now, we are seeing

unique applications

7:00

in audio generation,

art, music, storytelling.

7:03

We are starting to see

these new capabilities

7:05

like commonsense,

logical reasoning,

7:08

mathematical reasoning.

7:09

We are also able to now

get human alignment

7:12

and interaction.

7:13

They're able to use

reinforcement learning

7:15

from human feedback.

7:16

That's how ChatGPT is trained

to perform really well.

7:19

We have a lot of

mechanisms for controlling

7:21

toxicity, bias, and ethics now.

7:24

And there are

also a lot

7:26

of developments in other

areas like diffusion models.

7:30

Cool.

7:33

So the future is a

spaceship, and we are all

7:35

excited about it.

7:39

And there's a lot

more applications

7:40

that we can enable,

and it'll be great

7:44

if you can see

transformers also up there.

7:47

One big example is video

understanding and generation.

7:49

That is something that

everyone is interested in,

7:51

and I'm hoping we'll

see a lot of models

7:53

in this area this year,

also, finance, business.

7:59

I'll be very excited to

see GPT author a novel,

8:02

but we need to solve very

long sequence modeling.

8:04

And most transformer

models are still

8:07

limited to 4,000 tokens

or something like that.

8:09

So we need to make them

generalize much

8:13

better on long sequences.

8:17

We also want to have

generalized agents

8:19

that can do a lot of multitask,

multi-input predictions

8:27

like Gato.

8:28

And so I think we will

see more of that, too.

8:31

And finally, we also want

domain specific models.

8:37

So you might want

a GPT model, let's

8:39

put it like maybe your health.

8:41

So that could be like

a DoctorGPT model.

8:43

You might have a

LawyerGPT model that's

8:45

trained on only law data.

8:46

So currently, we have GPT models

that are trained on everything.

8:49

But we might start to see

more niche models that

8:51

are good at one task.

8:53

And we could have a

mixture of experts,

8:55

so it's like, you

can think this is a--

8:57

how you'd normally

consult an expert,

8:58

you'll have expert AI models.

9:00

And you can go to a different AI

model for your different needs.

9:05

There are still a lot

of missing ingredients

9:07

to make this all successful.

9:10

The first of all

is external memory.

9:12

We are already starting to

see this with the models

9:15

like ChatGPT, where the

interactions are short-lived.

9:18

There's no long-term

memory, and they

9:20

don't have the ability

to remember or store

9:23

conversations for long-term.

9:25

And this is something

you want to fix.

9:29

Second is reducing the

computational complexity.

9:32

So attention mechanism is

quadratic over the sequence

9:36

length, which is slow.

9:37

And we want to reduce

it and make it faster.
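
To make the quadratic cost concrete, here is a rough sketch that only counts the pairwise scores full self-attention has to compute. The function name `attention_score_count` is made up for illustration and does not come from the talk.

```python
def attention_score_count(seq_len):
    """Number of query-key dot products in one full self-attention head."""
    # Every token (as a query) is compared against every token (as a key).
    return seq_len * seq_len


for n in (1_000, 2_000, 4_000):
    # Doubling the sequence length quadruples the number of scores.
    print(n, attention_score_count(n))
```

Under this simple count, going from 1,000 to 4,000 tokens multiplies the work by 16, which is the reason long contexts get expensive.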

9:42

Another thing we

want to do is we

9:44

want to enhance the

controllability of these models

9:46

like a lot of these

models can be stochastic.

9:48

And we want to be able to

control what sort of outputs

9:51

we get from them.

9:52

And you might have

experienced the ChatGPT,

9:54

if you just refresh, you get

different output each time.

9:56

But you might want to have

a mechanism that controls

9:59

what sort of things you get.

10:01

And finally, we want to align

our state-of-the-art language

10:04

models with how the

human brain works.

10:06

And we are seeing the

surge, but we still

10:09

need more research on seeing

how they can make more informed.

10:12

Thank you.

10:14

Great, hi.

10:16

Yes, I'm excited to be here.

10:18

I live very nearby, so I got

the invites to come to class.

10:21

And I was like, OK,

I'll just walk over.

10:23

But then I spent like

10 hours on the slides,

10:25

so it wasn't as simple.

10:28

So yeah, I'm going to

talk about transformers.

10:30

I'm going to skip the

first two over there.

10:32

I'm not going to

talk about those.

10:34

We'll talk about that one

just to simplify the lecture

10:36

since we don't have time.

10:39

OK, so I wanted to provide

a little bit of context

10:41

on why does this transformers

class even exist.

10:44

So a little bit of

historical context.

10:45

I feel like Bilbo over there.

10:47

I joined like telling

you guys about this.

10:50

I don't know if you guys

saw Lord of the Rings.

10:52

And basically, I joined AI in

roughly 2012, the full course,

10:56

so maybe a decade ago.

10:58

And back then, you

wouldn't even say

10:59

that you joined AI by the way.

11:00

That was like a dirty word.

11:02

Now, it's OK to talk

about, but back then, it

11:04

was not even deep learning.

11:05

It was machine learning.

11:06

That was the term we would

use if you were serious.

11:08

But now, now, AI is

OK to use, I think.

11:11

So basically, do

you even realize

11:13

how lucky you are

potentially entering

11:15

this area in roughly 2023?

11:17

So back then, in 2011 or so

when I was working specifically

11:20

on computer vision, your

pipelines looked like this.

11:25

So you wanted to

classify some images,

11:28

you would go to a paper, and I

think this is representative.

11:30

You would have three pages

in the paper describing

11:32

all kinds of a zoo,

of kitchen sink,

11:34

of different kinds of

features, descriptors.

11:36

And you would go

to a poster session

11:38

at a computer

vision conference,

11:40

and everyone would have their

favorite feature descriptor

11:41

that they're proposing.

11:42

And it's totally

ridiculous, and you

11:44

would take notes on which

one you should incorporate

11:45

into your pipeline because

you would extract all of them,

11:48

and then you would

put an SVM on top.

11:49

So that's what you would do.

11:51

So there's two pages.

11:52

Make sure you get your

sparse SIFT histograms,

11:54

your SSIMs, your color

histograms, textons,

11:56

tiny images.

11:57

And don't forget the

geometry specific histograms.

11:59

All of them have basically

complicated code by themselves.

12:02

So you're collecting code from

everywhere and running it,

12:04

and it was a total nightmare.

12:06

So on top of that,

it also didn't work.

12:10

[LAUGHTER]

12:11

So this would be, I think,

it represents the prediction

12:14

from that time.

12:15

You would just get predictions

like this once in a while,

12:17

and you'd be like, you

just shrug your shoulders

12:19

like that just happens

once in a while.

12:20

Today, you would be

looking for a bug.

12:23

And worse than that,

every single chunk of AI

12:30

had their own completely

separate vocabulary

12:32

that they work with.

12:33

So if you go to NLP

papers, those papers

12:36

would be completely different.

12:38

So you're reading the NLP

paper, and you're like,

12:40

what is this part

of speech tagging,

12:42

morphological analysis,

syntactic parsing,

12:44

co-reference resolution?

12:46

What is MPBTKJ?

12:48

And you're confused.

12:49

So the vocabulary and everything

was completely different.

12:51

And you couldn't

read papers, I would

12:52

say, across different areas.

12:55

So now, that

changed a little bit

12:56

starting 2012 when Alex Krizhevsky

and colleagues basically

13:02

demonstrated that if you

scale a large neural network

13:05

on a large dataset, you can

get very strong performance.

13:08

And so up till then, there was

a lot of focus on algorithms.

13:10

But this showed that actually

neural nets scale very well.

13:13

So you need to now worry

about compute and data,

13:15

and you can scale it up.

13:16

It works pretty well.

13:17

And then that recipe

actually did copy paste

13:19

across many areas of AI.

13:21

So we start to see neural

networks pop up everywhere

13:23

since 2012.

13:25

So we saw them in computer

vision, and NLP, and speech,

13:28

and translation in RL and so on.

13:30

So everyone started to use

the same kind of modeling

13:32

toolkit, modeling framework.

13:33

And now when you go to NLP, and

you start reading papers there,

13:36

in machine translation,

for example,

13:38

this is a sequence

to sequence paper

13:40

which we'll come

back to in a bit.

13:41

You start to read those

papers, and you're like, OK,

13:44

I can recognize these words.

13:45

Like there's a neural network.

13:46

There's some parameters.

13:47

There's an optimizer, and

it starts to read things

13:50

that you know of.

13:50

So that decreased tremendously

the barrier to entry

13:54

across the different areas.

13:56

And then, I think,

the big deal is

13:57

that when the transformer

came out in 2017,

14:00

it's not even just that the tool

kits and the neural networks

14:02

were similar-- it's that

literally the architectures

14:05

converged to like one

architecture that you

14:07

copy paste across

everything seemingly.

14:10

So this was kind of an

unassuming machine translation

14:12

paper at the time, proposing

the transformer architecture.

14:15

But what we found since then

is that you can just basically

14:17

copy paste this architecture

and use it everywhere.

14:21

And what's changing is

the details of the data,

14:23

and the chunking of the

data, and how you feed it in.

14:26

And that's a

caricature, but it's

14:28

kind of like a correct

first order statement.

14:29

And so now, papers are

even more similar looking

14:32

because everyone's

just using transformer.

14:34

And so this convergence

was remarkable to watch

14:38

and unfolded over

the last decade.

14:40

And it's pretty crazy to me.

14:42

What I find

interesting is I think

14:44

this is some kind of a hint

that we're maybe converging

14:46

to something that maybe

the brain is doing

14:48

because the brain is very

homogeneous and uniform

14:50

across the entire

sheet of your cortex.

14:52

And OK, maybe some of

the details are changing,

14:54

but those feel like

hyperparameters

14:56

like a transformer.

14:57

But your auditory cortex

and your visual cortex

14:59

and everything else

looks very similar.

15:01

And so maybe we're

converging to some kind

15:02

of a uniform powerful

learning algorithm here.

15:06

Something like that, I think,

is interesting and exciting.

15:09

OK, so I want to talk about

where the transformer came

15:11

from briefly, historically.

15:12

So I want to start in 2003.

15:15

I like this paper quite a bit.

15:17

It was the first popular

application of neural networks

15:21

to the problem of

language modeling,

15:22

so predicting in this

case, the next word

15:24

in the sequence, which

allows you to build

15:26

generative models over text.

15:27

And in this case, they were

using a multi-layer perceptron,

15:29

so a very simple neural net.

15:30

The neural net took three words

and predicted the probability

15:33

distribution for the

fourth word in a sequence.
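
A minimal sketch of that idea, assuming a toy five-word vocabulary and random, untrained weights: look up three word embeddings, concatenate them, pass them through one hidden layer, and apply a softmax over the vocabulary. All names and sizes here (`VOCAB`, `EMBED_DIM`, `next_word_probs`, and so on) are illustrative assumptions, and the sketch leaves out details of the 2003 paper.

```python
import math
import random

random.seed(0)

VOCAB = ["the", "cat", "sat", "on", "mat"]  # toy vocabulary (assumption)
EMBED_DIM, HIDDEN_DIM, CONTEXT = 4, 8, 3


def rand_matrix(rows, cols):
    return [[random.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


embeddings = rand_matrix(len(VOCAB), EMBED_DIM)          # one vector per word
w_hidden = rand_matrix(HIDDEN_DIM, CONTEXT * EMBED_DIM)  # input -> hidden
w_out = rand_matrix(len(VOCAB), HIDDEN_DIM)              # hidden -> vocab logits


def matvec(matrix, vector):
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def softmax(xs):
    peak = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def next_word_probs(context_words):
    """Probability distribution over the fourth word given three words."""
    assert len(context_words) == CONTEXT
    # Look up and concatenate the three word embeddings.
    x = [v for word in context_words for v in embeddings[VOCAB.index(word)]]
    hidden = [math.tanh(h) for h in matvec(w_hidden, x)]
    return softmax(matvec(w_out, hidden))


probs = next_word_probs(["the", "cat", "sat"])
print(dict(zip(VOCAB, probs)))
```

Because the weights are random, the printed distribution is close to uniform; training would adjust the embeddings and both weight matrices so that likely next words get more probability.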

15:36

So this was well and

good at this point.

15:39

Now, over time, people

started to apply this

15:41

to machine translation.

15:43

So that brings us to

the sequence to sequence paper

15:45

from 2014 that was

pretty influential,

15:48

and the big problem

here was OK, we

15:49

don't just want to take three

words and predict the fourth.

15:52

We want to predict how to

go from an English sentence

15:55

to a French sentence.

15:56

And the key problem

was OK, you can

15:58

have an arbitrary number of words

in English and an arbitrary number

16:00

of words in French,

so how do you

16:03

get an architecture

that can process

16:04

this variably sized input?

16:06

And so here they used a

LSTM, and there's basically

16:10

two chunks of this, which are

covered by the slack, by this.

16:16

But you basically have an

encoder LSTM on the left,

16:19

and it just consumes

one word at a time

16:22

and builds up a context

of what it has read.

16:24

And then that acts as

a conditioning vector

16:26

to the decoder RNN or LSTM.

16:29

That basically

goes chonk, chonk,

16:30

chonk for the next

word in a sequence,

16:32

translating the English to

French or something like that.

16:35

Now, the big problem with

this, that people identified,

16:37

I think, very quickly

and tried to resolve

16:40

is that there's what's called

this encoder bottleneck.

16:43

So this entire English sentence

that we are trying to condition

16:46

on is packed into

a single vector

16:48

that goes from the

encoder to the decoder.

16:50

And so this is just

too much information

16:52

to potentially maintain

in a single vector,

16:54

and that didn't seem correct.
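
The bottleneck can be illustrated with a toy recurrence. This is a sketch under loud assumptions: the update rule below is a made-up stand-in and not a real LSTM, and `encode` and `HIDDEN_DIM` are hypothetical names. The only point is that the encoder hands over a vector of the same size no matter how long the input is.

```python
import math

HIDDEN_DIM = 3


def encode(word_vectors):
    """Fold a sequence of any length into one fixed-size vector."""
    hidden = [0.0] * HIDDEN_DIM
    for vec in word_vectors:
        # Toy recurrence (not a real LSTM): mix the old state with the new word.
        hidden = [math.tanh(0.5 * h + 0.5 * x) for h, x in zip(hidden, vec)]
    return hidden


short_sentence = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
long_sentence = short_sentence * 50  # 100 "words"

# Both sentences are squeezed into the same number of values.
print(len(encode(short_sentence)), len(encode(long_sentence)))
```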

16:55

And so people were

looking around for ways

16:57

to alleviate the

encoder bottleneck as it

17:00

was called at the time.

17:02

And so that brings

us to this paper,

17:03

Neural Machine Translation

by Jointly Learning

17:05

to Align and Translate.

17:07

And here, just quoting from

the abstract, "in this paper,

17:11

we conjecture that the use

of a fixed length vector

17:13

is a bottleneck in

improving the performance

17:15

of the basic

encoder-decoder architecture

17:17

and propose to extend

this by allowing

17:19

the model to

automatically soft search

17:21

for parts of the source sentence

that are relevant to predicting

17:24

a target word without

having to form

17:28

these parts as a hard

segment explicitly."

17:30

So this was a way to look

back to the words that

17:34

are coming from the encoder.

17:35

And it was achieved

using this soft search.

17:38

So as you are

decoding the words

17:42

here, while you

are decoding them,

17:44

you are allowed to

look back at the words

17:45

at the encoder via this soft

attention mechanism proposed

17:49

in this paper.

17:50

And so this paper, I think,

is the first time that I saw,

17:52

basically, attention.

17:55

So your context vector

that comes from the encoder

17:58

is a weighted sum

of the hidden states

18:01

of the words in the encoding.

18:05

And then the weights

of this sum come

18:07

from a softmax that is based

on these compatibilities

18:10

between the current

state as you're decoding

18:13

and the hidden states

generated by the encoder.

18:15

And so this is the first

time that really you

18:17

start to look at it, and these

are the current modern equations

18:22

of the attention.

18:23

And I think this was the

first paper that I saw it in.

18:25

It's the first time

that the word

18:27

attention was used, as far as I

know, to call this mechanism.
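
The weighted-sum description above can be sketched in a few lines. One assumption to flag: a plain dot product stands in for the compatibility score here, whereas the paper computes it with a small learned network. The names `context_vector`, `decoder_state`, and `encoder_states` are illustrative.

```python
import math


def softmax(xs):
    peak = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def context_vector(decoder_state, encoder_states):
    """Weighted sum of encoder hidden states, weighted by compatibility."""
    # Compatibility of the current decoder state with each encoder state.
    # (Dot product is an assumption; the paper learns this function.)
    scores = [sum(d * h for d, h in zip(decoder_state, hs)) for hs in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * hs[i] for w, hs in zip(weights, encoder_states)) for i in range(dim)
    ]
    return context, weights


encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
context, weights = context_vector([2.0, 0.0], encoder_states)
print(weights, context)
```

In this toy input, the first and third encoder states are equally compatible with the decoder state, so they receive equal weight and the second state receives less.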

18:32

So I actually tried to dig

into the details of the history

18:34

of the attention.

18:35

So the first author

here, Dzmitry, I

18:38

had an email

correspondence with him,

18:40

and I basically

sent him an email.

18:41

I'm like, Dzmitry, this

is really interesting.

18:43

Transformers have taken over.

18:44

Where did you come up

with the soft attention

18:45

mechanism that ends up being

the heart of the transformer?

18:48

And to my surprise, he wrote me

back this massive email, which

18:52

was really fascinating.

18:52

So this is an excerpt

from that email.

18:57

So basically, he talks about

how he was looking for a way

18:59

to avoid this bottleneck

between the encoder and decoder.

19:02

He had some ideas

about cursors that

19:04

traverse the sequences

that didn't quite work out.

19:06

And then here, "so one

day, I had this thought

19:08

that it would be nice

to enable the decoder

19:10

RNN to learn to search where

to put the cursor in the source

19:13

sequence.

19:14

This was sort of inspired

by translation exercises

19:16

that learning English in

my middle school involved.

19:21

Your gaze shifts back and forth

between source and target,

19:23

sequence as you translate."

19:24

So literally, I thought that

this was kind of interesting,

19:27

that he's not a native

English speaker,

19:28

and here, that gave him an edge

in this machine translation

19:31

that led to attention and

then led to transformer.

19:34

So that's really fascinating.

19:37

"I expressed a soft

search as a softmax

19:38

and then weighted averaging

of the [INAUDIBLE] states.

19:40

And basically, to

my great excitement,

19:43

this worked from

the very first try."

19:45

So really, I think,

interesting piece of history.

19:48

And as it later turned out,

the name RNN search

19:51

was kind of lame, so the

better name attention came

19:54

from Yoshua on one

of the final passes

19:57

as they went over the paper.

19:58

So maybe Attention

is All You Need

20:00

would have been called RNN

Search is All You Need,

20:03

but we have Yoshua

Bengio to thank

20:05

for a little bit of

a better name, I would say.

20:07

So apparently,

that's the history

20:08

of this, which I

thought was interesting.

20:11

OK, so that brings us to

2017, which is Attention

20:13

is All You Need.

20:14

So this attention

component, which

20:16

in Dzmitry's paper was

just one small segment,

20:19

and there's all this

bidirectional RNN, RNN

20:21

and decoder, and this Attention

is All You Need paper is saying,

20:25

OK, you can actually

delete everything.

20:26

What's making this

work very well

20:28

is just attention by itself.

20:29

And so delete everything,

keep attention.

20:32

And then what's remarkable about

this paper actually is usually,

20:35

you see papers that

are very incremental.

20:36

They add one thing, and

they show that it's better.

20:39

But I feel like

Attention is All You

20:41

Need was like a mix of multiple

things at the same time.

20:44

They were combined

in a very unique way,

20:46

and then also achieved a

very good local minimum

20:49

in the architecture space.

20:50

And so to me, this is

really a landmark paper

20:52

that is quite

remarkable and, I think,

20:55

had quite a lot of

work behind the scenes.

20:58

So delete all the RNN,

just keep attention.

21:01

Because attention

operates over sets--

21:03

and I'm going to go

to this in a second--

21:05

you now need to positionally

encode your inputs

21:07

because attention doesn't have

the notion of space by itself.
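
One way to inject position, sketched here with the sinusoidal scheme from the 2017 paper, is to add a position-dependent vector to each token vector before attention sees it. The helper names `sinusoidal_position` and `add_positions` are illustrative, and other schemes such as learned or rotary encodings are also in use.

```python
import math


def sinusoidal_position(pos, d_model):
    """Sinusoidal encoding for one position: sin on even dims, cos on odd dims."""
    vec = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        vec.append(math.sin(angle))
        vec.append(math.cos(angle))
    return vec[:d_model]


def add_positions(token_vectors):
    """Add the position vector to each token vector, element by element."""
    d_model = len(token_vectors[0])
    return [
        [x + p for x, p in zip(vec, sinusoidal_position(pos, d_model))]
        for pos, vec in enumerate(token_vectors)
    ]


# Three identical tokens become distinguishable once position is added.
same_token = [[0.5, 0.5, 0.5, 0.5]] * 3
encoded = add_positions(same_token)
for row in encoded:
    print(row)
```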

21:14

I have to be very careful.

21:17

They adopted this

residual network structure

21:19

from ResNets.

21:21

They interspersed attention

with multi-layer perceptrons.

21:24

They used layer norms, which

came from a different paper.

21:27

They introduced the concept

of multiple heads of attention

21:29

that were applied in parallel.

21:30

And they gave us, I think,

like a fairly good set

21:33

of hyperparameters that

to this day are used.

21:35

So the expansion factor in the

multi-layer perceptron goes up

21:39

by 4X--

21:40

and we'll go into

a bit more detail--

21:41

and this 4X has stuck around.
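
As a quick illustration of the 4X expansion, the sketch below lists the weight shapes of the two linear layers in the multi-layer perceptron. The function names are hypothetical; the width of 512 matches the base model in the 2017 paper, whose inner layer is 2048 wide.

```python
def mlp_layer_shapes(d_model, expansion=4):
    """Weight shapes of the two linear layers: expand, then project back."""
    d_hidden = expansion * d_model
    return [(d_model, d_hidden), (d_hidden, d_model)]


def mlp_weight_count(d_model, expansion=4):
    """Number of weights in both layers, ignoring biases."""
    return sum(rows * cols for rows, cols in mlp_layer_shapes(d_model, expansion))


shapes = mlp_layer_shapes(512)
print(shapes, mlp_weight_count(512))
```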

21:43

And I believe there's

a number of papers

21:44

that try to play with all

kinds of little details

21:47

of the transformer, and nothing

sticks because this is actually

21:50

quite good.

21:51

The only thing to my

knowledge that did change

21:54

was this reshuffling

of the layer norms

21:56

to go into the prenorm

version where here you

21:59

see the layer norms are after

the multi-headed attention and feed

22:01

forward.

22:02

They just put them

before instead.
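
The difference between the two placements is small enough to show directly. This is a simplified sketch: the layer norm here has no learned gain or bias, and `toy_sublayer` is a made-up stand-in for attention or the feed-forward block.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learned scale)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def post_norm_block(x, sublayer):
    # Original 2017 placement: normalize after the residual addition.
    return layer_norm([a + b for a, b in zip(x, sublayer(x))])


def pre_norm_block(x, sublayer):
    # Pre-norm placement: normalize the sublayer input, keep the residual path clean.
    return [a + b for a, b in zip(x, sublayer(layer_norm(x)))]


def toy_sublayer(x):
    # Stand-in for attention or the MLP (assumption for illustration).
    return [2.0 * v for v in x]


x = [1.0, 2.0, 3.0, 4.0]
post = post_norm_block(x, toy_sublayer)
pre = pre_norm_block(x, toy_sublayer)
print(post)
print(pre)
```

In the pre-norm version the input `x` passes through the residual path untouched, while in the post-norm version every block output is renormalized.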

22:04

So just reshuffling of

layer norms, but otherwise,

22:06

the GPTs and everything else

that you're seeing today

22:08

is basically the 2017

architecture from 5 years ago.

22:11

And even though everyone

is working on it,

22:13

it's been proven

remarkably resilient,

22:15

which I think is

really interesting.

22:17

There are innovations

that, I think,

22:18

have been adopted also

in positional encoding.

22:21

It's more common to use

different rotary and relative

22:24

positional encoding and so on.

22:25

So I think there have been

changes, but for the most part,

22:28

it's proven very resilient.

22:31

So really quite an

interesting paper.

22:32

Now, I wanted to go into

the attention mechanism.

22:36

And I think, the way I interpret

it is not similar to the ways

22:43

that I've seen it

presented before.

22:44

So let me try a different

way of how I see it.

22:47

Basically, to me, attention is

kind of like the communication

22:49

phase of the transformer,

and the transformer

22:52

interweaves two phases: the

communication phase, which

22:55

is the multi-headed

attention, and the computation

22:57

stage, which is this

multilayered perceptron

23:00

or [INAUDIBLE].

23:01

So in the communication

phase, it's

23:03

really just a data

dependent message

23:05

passing on directed graphs.

23:07

And you can think of it

as OK, forget everything

23:09

with machine

translation, everything.

23:10

Let's just-- we have

directed graphs.

23:13

At each node, you

are storing a vector.

23:16

And then let me talk now

about the communication

23:18

phase of how these

vectors talk to each other

23:20

in this directed graph.

23:21

And then the compute

phase later is just

23:23

a multi-layer perceptron, which then

basically acts on every node

23:27

individually.

23:28

But how do these nodes

talk to each other

23:30

in this directed graph?

23:32

So I wrote like

some simple Python--

23:36

I wrote this in Python

basically to create

23:39

one round of communication

of using attention

23:44

as the message passing scheme.

23:46

So here, a node has this

private data vector,

23:51

you can think of it

as private information

23:53

to this node.

23:54

And then it can also emit a

key, a query, and a value.

23:57

And simply, that's done

by linear transformation

24:00

from this node.

24:01

So the key is what are

the things that I am--

24:07

sorry.

24:07

The query is what are the

things that I'm looking for?

24:10

The key is what are

the things that I have?

24:12

And the value is what are the

things that I will communicate?

24:15

And so then when you

have your graph that's

24:16

made up of nodes and some

random edges, when you actually

24:19

have these nodes communicating,

what's happening is

24:21

you loop over all the

nodes individually

24:23

in some random order,

and you're at some node,

24:27

and you get the

query vector q, which

24:29

is, I'm a node in

some graph, and this

24:32

is what I'm looking for.

24:33

And so that's just achieved

via this linear transformation

24:36

here.

24:36

And then we look at all the

inputs that point to this node,

24:39

and then they broadcast what

are the things that I have,

24:42

which is their keys.

24:44

So they broadcast the keys.

24:45

I have the query, then those

interact by dot product

24:49

to get scores.

24:51

So basically, simply

by doing dot product,

24:53

you get some

unnormalized weighting

24:55

of the interestingness of all

of the information in the nodes

24:59

that point to me and to

the things I'm looking for.

25:02

And then when you normalize

that with softmax,

25:03

so it just sums to

1, you basically just

25:06

end up using those scores, which

now sum to 1 and are a probability

25:09

distribution, and you do a

weighted sum of the values

25:13

to get your update.

25:15

So I have a query.

25:17

They have keys, dot products

to get interestingness or like

25:21

affinity, softmax to

normalize it, and then

25:24

weighted sum of those values

flow to me and update me.

25:27

And this is happening for

each node individually.

25:29

And then we update at the end.

25:30

And so this kind of a

message passing scheme

25:32

is at the heart of

the transformer.

25:35

And it happens in the more

vectorized batched way

25:40

that is more confusing and is

also interspersed with layer

25:44

norms and things like that

to make the training behave

25:46

better.

25:47

But that's roughly what's

happening in the attention

25:49

mechanism, I think,

on a high level.
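
The round of communication described above can be reconstructed roughly as follows. This is not the code shown in the lecture, only a sketch of the same steps in plain Python: the `Node` class, the shared random weight matrices, the `edges` layout, and the choice to add the update to the node's data are all assumptions for illustration.

```python
import math
import random

random.seed(0)
DIM = 4


def rand_matrix(rows, cols):
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def softmax(xs):
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


# Linear maps producing query, key, and value (random here, learned in practice).
W_QUERY, W_KEY, W_VALUE = (rand_matrix(DIM, DIM) for _ in range(3))


class Node:
    def __init__(self):
        self.data = [random.gauss(0.0, 1.0) for _ in range(DIM)]  # private vector

    def query(self):  # what am I looking for?
        return matvec(W_QUERY, self.data)

    def key(self):  # what do I have?
        return matvec(W_KEY, self.data)

    def value(self):  # what will I communicate?
        return matvec(W_VALUE, self.data)


def communicate(nodes, edges):
    """One round of attention. edges[i] lists the nodes that point to node i."""
    updates = []
    for i, node in enumerate(nodes):
        sources = [nodes[j] for j in edges[i]]
        if not sources:  # nothing points here, so nothing flows in
            updates.append([0.0] * DIM)
            continue
        q = node.query()
        scores = [sum(a * b for a, b in zip(q, s.key())) for s in sources]
        weights = softmax(scores)  # normalized affinities
        values = [s.value() for s in sources]
        updates.append(
            [sum(w * v[d] for w, v in zip(weights, values)) for d in range(DIM)]
        )
    # Apply all updates only after every node has computed its own.
    for node, update in zip(nodes, updates):
        node.data = [a + b for a, b in zip(node.data, update)]
    return updates


nodes = [Node() for _ in range(3)]
edges = {0: [0], 1: [0, 1], 2: [0, 1, 2]}
updates = communicate(nodes, edges)
print(updates)
```

Real implementations do the same thing with batched matrix multiplications over all nodes at once, which is faster but hides the per-node picture.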

25:53

So yeah, so in the communication

phase of the transformer, then

25:59

this message passing

scheme happens

26:00

in every head in parallel and

then in every layer in series

26:06

and with different

weights each time.

26:08

And that's it as far as the

multi-headed attention goes.

26:13

And so if you look at these

encoder-decoder models,

26:15

you can think of it then in

terms of the connectivity

26:18

of these nodes in the graph.

26:19

You can think of it as like,

OK, all these tokens that

26:21

are in the encoder that

we want to condition on,

26:23

they are fully

connected to each other.

26:25

So when they communicate,

they communicate fully

26:28

when you calculate

their features.

26:30

But in the decoder,

because we are

26:32

trying to have a

language model, we

26:33

don't want to have

communication from future tokens

26:35

because they give away

the answer at this step.

26:38

So the tokens in the

decoder are fully connected

26:40

from all the encoder

states, and then they

26:43

are also fully connected from

everything that is decoding.

26:46

And so you end up with

this triangular structure

26:49

in the directed graph.

26:50

But that's the

message passing scheme

26:52

that this basically implements.

26:54

And then you have to be also

a little bit careful because

26:57

in the cross attention

here with the decoder,

26:59

you consume the features

from the top of the encoder.

27:01

So think of it as

in the encoder,

27:03

all the nodes are

looking at each other,

27:05

all the tokens are looking at

each other many, many times.

27:08

And they really figure

out what's in there,

27:09

and then the decoder is

looking only at the top nodes.

27:14

So that's roughly the

message passing scheme.

27:16

I was going to go into

more of an implementation

27:18

of a transformer.

27:19

I don't know if there's

any questions about this.

27:23

[INAUDIBLE] self-attention

and multi-headed attention,

27:26

but what is the

advantage of [INAUDIBLE]?

27:30

Yeah, so self-attention and

multi-headed attention, so

27:35

the multi-headed attention is

just this attention scheme,

27:38

but it's just applied

multiple times in parallel.

27:40

Multiple heads just means

independent applications

27:42

of the same attention.

27:44

So this message passing

scheme basically just

27:47

happens in parallel

multiple times

27:49

with different weights for

the query, key, and value.

27:52

So you can almost look at

it like in parallel, I'm

27:55

looking for, I'm seeking

different kinds of information

27:57

from different nodes.

27:59

And I'm collecting it

all in the same node.

28:01

It's all done in parallel.

28:03

So heads is really just

copy-paste in parallel.

28:06

And layers are

copy-paste but in series.

28:12

Maybe that makes sense.

28:15

And self-attention, when

it's self-attention,

28:18

what it's referring to

is that the node here

28:21

produces each node here.

28:23

So as I described it here,

this is really self-attention

28:25

because every one of

these nodes produces

28:27

a key, a query, and a value

from this individual node.

28:30

When you have cross-attention,

you have one cross-attention

28:33

here, coming from the encoder.

28:36

That just means that

the queries are still

28:38

produced from this node,

but the keys and the values

28:42

are produced as a

function of nodes that

28:44

are coming from the encoder.

28:48

So I have my queries because

I'm trying to decode some--

28:52

the fifth word in the sequence.

28:53

And I'm looking

for certain things

28:55

because I'm the fifth word.

28:56

And then the keys and

the values in terms

28:58

of the source of information

that could answer my queries

29:01

can come from the previous

nodes in the current decoding

29:04

sequence or from the

top of the encoder.

29:06

So all the nodes that

have already seen all

29:09

of the encoding tokens many,

many times can now broadcast

29:12

what they contain in

terms of information.

29:14

So I guess, to summarize,

the self-attention is--

29:18

sorry, cross-attention

and self-attention

29:20

only differ in where the keys

and the values come from.

29:24

Either the keys and values

are produced from this node,

29:28

or they are produced from some

external source like an encoder

29:31

and the nodes over there.

29:33

But algorithmically, it's the

same mathematical operations.
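
A small sketch can make the "same operation, different source" point concrete. Everything here is an illustrative assumption (the function name `attention`, identity projection matrices, toy node vectors): the same function performs self-attention when queries and keys/values come from the same nodes, and cross-attention when the keys/values come from another pool of nodes.

```python
import math

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query_nodes, source_nodes, Wq, Wk, Wv):
    """Queries come from query_nodes; keys and values come from source_nodes."""
    queries = [matvec(Wq, x) for x in query_nodes]
    keys = [matvec(Wk, s) for s in source_nodes]
    values = [matvec(Wv, s) for s in source_nodes]
    out = []
    for q in queries:
        w = softmax([sum(a * b for a, b in zip(q, k)) for k in keys])
        out.append([sum(wi * v[i] for wi, v in zip(w, values))
                    for i in range(len(values[0]))])
    return out

I2 = [[1.0, 0.0], [0.0, 1.0]]                        # hypothetical identity projections
decoder_nodes = [[1.0, 0.0], [0.0, 1.0]]
encoder_nodes = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]

self_out = attention(decoder_nodes, decoder_nodes, I2, I2, I2)   # self-attention
cross_out = attention(decoder_nodes, encoder_nodes, I2, I2, I2)  # cross-attention
```

In both calls there is one output per query node, regardless of how many source nodes supply keys and values.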

29:39

Question.

29:39

Yeah, OK.

29:40

So two questions for you.

29:41

First question is, in the

message passing [INAUDIBLE]

29:56

So think of-- so each one

of these nodes is a token.

30:04

I guess they don't have

a very good picture of it

30:06

in the transformer.

30:06

But this node here could

represent the third word

30:14

in the output in the decoder,

and in the beginning,

30:19

it is just the

embedding of the word.

30:27

And then, OK, I have to

think through this analogy

30:30

a little bit more.

30:31

I came up with it this morning.

30:32

[LAUGHTER]

30:34

[INAUDIBLE]

30:39

What example of instantiation

[INAUDIBLE] nodes

30:45

as in in blocks were embedding?

30:50

These nodes are

basically the vectors.

30:53

I'll go to an implementation.

30:54

I'll go to the implementation,

and then maybe I'll

30:56

make the connections

to the graph.

30:58

So let me try to first

go to-- let me now go to,

31:01

with this intuition

in mind, at least,

31:03

to a nanoGPT, which is a

concrete implementation

31:05

of a transformer

that is very minimal.

31:06

So I worked on this

over the last few days,

31:08

and here it is reproducing

GPT-2 on OpenWebText.

31:11

So it's a pretty serious

implementation that reproduces

31:14

GPT-2, I would say, and

provided enough compute--

31:17

This was one node of 8 GPUs

for 38 hours or something

31:21

like that, if I

remember correctly.

31:22

And it's very readable.

31:23

It's 300 lines, so everyone

can take a look at it.

31:27

And yeah, let me basically

briefly step through it.

31:30

So let's try to have a

decoder-only transformer.

31:34

So what that means is that

it's a language model.

31:36

It tries to model the

next word in the sequence

31:39

or the next character

in the sequence.

31:41

So the data that

we train on this

31:43

is always some kind of text.

31:44

So here's some fake Shakespeare.

31:45

Sorry, this is real Shakespeare.

31:47

We're going to produce

fake Shakespeare.

31:48

So this is called

a Tiny Shakespeare

31:50

dataset, which is one of

my favorite toy datasets.

31:52

You take all of

Shakespeare, concatenate it,

31:54

and it's a 1-megabyte

file, and then

31:55

you can train

language models on it

31:56

and get infinite

Shakespeare, if you like,

31:58

which I think is kind of cool.

31:59

So we have a text.

32:00

The first thing we

need to do is we

32:02

need to convert it to

a sequence of integers

32:05

because transformers

natively process--

32:09

you can't plug text

into a transformer.

32:10

You need to somehow encode it.

32:11

So the way that

encoding is done is

32:13

we convert, for example,

in the simplest case,

32:15

every character gets an

integer, and then instead of "hi

32:18

there," we would have

this sequence of integers.

32:21

So then you can encode every

single character as an integer

32:25

and get a massive

sequence of integers.

32:27

You just concatenate

it all into one

32:29

large, long

one-dimensional sequence.

32:31

And then you can train on it.
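
As a rough sketch of the character-level encoding just described: the names `stoi`, `itos`, `encode`, and `decode` are illustrative assumptions, and the vocabulary here is built from the single string "hi there" rather than from the full dataset, so the integers will differ from those on the slide.

```python
text = "hi there"

# Vocabulary: every distinct character, in sorted order, gets an integer id.
chars = sorted(set(text))
stoi = {ch: i for i, ch in enumerate(chars)}
itos = {i: ch for ch, i in stoi.items()}

def encode(s):
    return [stoi[c] for c in s]

def decode(ids):
    return "".join(itos[i] for i in ids)

ids = encode(text)
print(ids)           # [2, 3, 0, 5, 2, 1, 4, 1]
print(decode(ids))   # hi there
```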

32:32

Now, here, we only

have a single document.

32:34

In some cases, if you have

multiple independent documents,

32:36

what people like to do

is create special tokens,

32:38

and they intersperse

those documents

32:40

with those special

end of text tokens

32:42

that they splice in between

to create boundaries.

32:46

But those boundaries actually

don't have any modeling impact.

32:50

It's just that the

transformer is supposed

32:52

to learn via backpropagation

that the end of document

32:55

sequence means that you

should wipe the memory.
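
A minimal sketch of splicing documents together with a boundary token, under assumed names: `EOT` is a hypothetical extra id appended to a toy character vocabulary, and the two "documents" are placeholders.

```python
docs = ["ab", "ba"]                      # hypothetical independent documents

chars = sorted(set("".join(docs)))
stoi = {ch: i for i, ch in enumerate(chars)}
EOT = len(stoi)                          # assumed id for the end-of-text token

stream = []
for doc in docs:
    stream.extend(stoi[c] for c in doc)  # encode the document
    stream.append(EOT)                   # mark the boundary

print(stream)  # [0, 1, 2, 1, 0, 2]
```

Nothing in the model treats `EOT` specially; it is just another integer whose meaning has to be learned from data.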

33:00

OK, so then we produce batches.

33:02

So these batches

of data just mean

33:04

that we go back to the

one-dimensional sequence,

33:06

and we take out chunks

of this sequence.

33:08

So say, if the block size is 8,

then the block size indicates

33:13

the maximum length of context

that your transformer will

33:17

process.

33:18

So if our block size

is 8, that means

33:20

that we are going to have up

to eight characters of context

33:23

to predict the ninth

character in a sequence.

33:26

And the batch size indicates

how many sequences in parallel

33:29

we're going to process.

33:30

And we want this to be

as large as possible,

33:31

so we're fully taking

advantage of the GPU

33:33

and the parallels [INAUDIBLE]

So in this example,

33:36

we're doing 4 by 8 batches.

33:38

So every row here is

an independent example

33:41

and then every row here is a

small chunk of the sequence

33:47

that we're going to train on.

33:48

And then we have both the

inputs and the targets

33:50

at every single point here.
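
One way to sketch the batching step, assuming the data is already a flat list of integers. The function name `get_batch` and the stand-in data are assumptions for illustration; the targets are simply the inputs shifted by one position.

```python
import random

def get_batch(data, batch_size, block_size, rng):
    """Pick random chunks of the 1-D sequence; targets are inputs offset by one."""
    starts = [rng.randrange(len(data) - block_size) for _ in range(batch_size)]
    x = [data[s : s + block_size] for s in starts]
    y = [data[s + 1 : s + block_size + 1] for s in starts]
    return x, y

data = list(range(100))        # stand-in for the encoded text
rng = random.Random(0)
x, y = get_batch(data, batch_size=4, block_size=8, rng=rng)
```

Each of the 4 rows of `x` is an independent chunk of 8 tokens, and `y` holds the token that follows each position.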

33:52

So to fully spell out what's

contained in a single 4

33:55

by 8 batch to the transformer--

33:57

I sort of compact it here--

33:59

so when the input is 47, by

itself, the target is 58.

34:04

And when the input is

the sequence 47, 58,

34:07

the target is 1.

34:08

And when it's 47, 58, 1,

the target is 51 and so on.

34:13

So actually, the single batch

of examples that's 4 by 8

34:15

actually has a ton of

individual examples

34:17

that we are expecting

a transformer

34:18

to learn on in parallel.

34:21

And so you'll see that

the batches are learned

34:23

on completely independently, but

the time dimension here, running

34:28

horizontally, is also

trained on in parallel.

34:30

So your real batch size

is more like B times T.

34:34

And it's just that the

context grows linearly

34:37

for the predictions that you

make along the T direction

34:41

in the model.

34:42

So this is all the examples

that the model will learn from,

34:45

this single batch.
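
To spell out how one row yields several training examples, here is a small sketch. Only the first four input values (47, 58, 1, 51) come from the example above; the remaining values in `x_row` and the final target are made up for illustration.

```python
# First four values follow the example above; the rest are hypothetical.
x_row = [47, 58, 1, 51, 59, 57, 58, 1]
y_row = x_row[1:] + [46]          # targets: inputs shifted by one (last value assumed)

# Position t uses the context x_row[:t+1] to predict y_row[t].
examples = [(x_row[: t + 1], y_row[t]) for t in range(len(x_row))]

for context, target in examples[:3]:
    print(context, "->", target)
# [47] -> 58
# [47, 58] -> 1
# [47, 58, 1] -> 51

B, T = 4, 8
print(B * T)  # 32 individual examples in one 4 by 8 batch
```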

34:48

So now, this is the GPT class.

34:52

And because this is

a decoder-only model,

34:55

so we're not going to have

an encoder because there's no

34:58

English we're translating from--

34:59

we're not trying to condition

on some other external

35:02

information.

35:02

We're just trying to produce

a sequence of words that

35:05

follow each other, or are likely to.

35:08

So this is all PyTorch, and

I'm going slightly faster

35:10

because I'm assuming people

have taken 231 or something

35:12

along those lines.

35:15

But here in the forward

pass, we take these indices,

35:19

and then we both encode the

identity of the indices,

35:24

just via an embedding

lookup table.

35:26

So every single integer, we

index into a lookup table of

35:31

vectors in this nn.Embedding,

and pull out

35:34

the word vector for that token.

35:38

And then because the

transformer by itself

35:41

doesn't actually-- it

processes sets natively.

35:43

So we need to also positionally

encode these vectors

35:45

so that we basically

have both the information

35:47

about the token identity and

its place in the sequence from 1

35:51

to block size.

35:53

Now, the information

about what and where

35:56

is combined additively,

so the token embeddings

35:58

and the positional embeddings

are just added exactly as here.
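
The "what plus where" addition can be sketched without any framework. The table sizes, the random initialization, and the function name `embed` below are assumptions for illustration; in the actual code these would be learned lookup tables.

```python
import random

rng = random.Random(0)
vocab_size, block_size, n_embd = 65, 8, 4   # hypothetical sizes

# Two lookup tables: one row per token id, one row per position.
tok_table = [[rng.gauss(0, 0.02) for _ in range(n_embd)] for _ in range(vocab_size)]
pos_table = [[rng.gauss(0, 0.02) for _ in range(n_embd)] for _ in range(block_size)]

def embed(idx):
    """Token identity and position are combined by element-wise addition."""
    return [
        [t + p for t, p in zip(tok_table[token], pos_table[pos])]
        for pos, token in enumerate(idx)
    ]

x = embed([47, 58, 1, 51])
```

Because the position vector is added in, the same token at two different positions ends up with two different vectors.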

36:02

So then there's

optional dropout,

36:06

this x here basically

just contains

36:08

the set of words

and their positions,

36:14

and that feeds into the

blocks of transformer.

36:16

And we're going to look

into what's block here.

36:18

But for here, for now,

this is just a series

36:20

of blocks in a transformer.

36:22

And then in the end,

there's a layer norm,

36:23

and then you're

decoding the logits

36:26

for the next word or next

integer in a sequence,

36:30

using the linear projection of

the output of this transformer.

36:33

So LM head here, short

for language model head.

36:36

It's just a linear function.

36:38

So basically, positionally

encode all the words,

36:42

feed them into a

sequence of blocks,

36:45

and then apply a linear

layer to get the probability

36:47

distribution for

the next character.

36:50

And then if we have

the targets, which

36:51

we produced in the data loader--

36:54

and you'll notice that

the targets are just

36:55

the inputs offset

by one in time--

36:59

then those targets feed

into a cross entropy loss.

37:01

So this is just a

negative log likelihood

37:03

typical classification loss.
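
As a sketch of the loss at a single position: cross entropy is the negative log-probability that the softmax over the logits assigns to the target token. The function name and the toy logits are assumptions; the vocabulary size of 65 is only an example.

```python
import math

def cross_entropy(logits, target):
    """Negative log-likelihood of `target` under softmax(logits)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum_exp - logits[target]

# With all-equal logits over 65 tokens, the loss is log(65), about 4.17.
uniform_loss = cross_entropy([0.0] * 65, target=3)

# A confident, correct prediction gives a small loss; a confident, wrong one a large loss.
good = cross_entropy([10.0, 0.0, 0.0], target=0)
bad = cross_entropy([10.0, 0.0, 0.0], target=1)
```

In training this value is averaged over every position in the batch.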

37:04

So now let's drill into

what's here in the blocks.

37:08

So these blocks that are

applied sequentially,

37:11

there's, again, as I

mentioned, this communicate

37:13

phase and the compute phase.

37:15

So in the communicate

phase, all the nodes

37:17

get to talk to each other, and

so these nodes are basically,

37:21

if our block size

is 8, then we are

37:23

going to have eight

nodes in this graph.

37:26

There's eight nodes

in this graph.

37:28

The first node is pointed

to only by itself.

37:30

The second node is pointed to

by the first node and itself.

37:33

The third node is pointed

to by the first two nodes

37:35

and itself, et cetera.

37:36

So there's eight nodes here.

37:38

So you apply-- there's a

residual pathway and x.

37:42

You take it out.

37:43

You apply a layer norm,

and then the self-attention

37:45

so that these communicate,

these eight nodes communicate.

37:47

But you have to keep in

mind that the batch is 4.

37:50

So because batch is 4,

this is also applied--

37:54

so we have eight

nodes communicating,

37:55

but there's a batch of four of

them individually communicating

37:58

among those eight nodes.

37:59

There's no crisscross across

the batch dimension, of course.

38:02

There's no batch norm

anywhere, luckily.

38:04

And then once they've

exchanged information,

38:06

they are processed using

the multi-layer perceptron.

38:09

And that's the compute phase.
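
The communicate-then-compute structure of a block can be sketched as two residual updates. This is an illustration under assumptions: `causal_mean` is a stand-in for causal self-attention, `double` is a stand-in for the MLP, and the layer norm omits its learnable gain and bias.

```python
import math

def layer_norm(v, eps=1e-5):
    # Normalize one node's features to zero mean and unit variance.
    mean = sum(v) / len(v)
    var = sum((a - mean) ** 2 for a in v) / len(v)
    return [(a - mean) / math.sqrt(var + eps) for a in v]

def add(xs, ys):
    return [[a + b for a, b in zip(x, y)] for x, y in zip(xs, ys)]

def block(x, communicate, compute):
    # Communicate phase: nodes exchange information, added onto the residual pathway.
    x = add(x, communicate([layer_norm(v) for v in x]))
    # Compute phase: each node is processed on its own.
    x = add(x, [compute(layer_norm(v)) for v in x])
    return x

def causal_mean(nodes):
    # Stand-in for causal self-attention: node t averages nodes 0..t.
    out = []
    for t in range(len(nodes)):
        visible = nodes[: t + 1]
        out.append([sum(col) / len(visible) for col in zip(*visible)])
    return out

def double(v):
    # Stand-in for the per-node MLP.
    return [2.0 * a for a in v]

x = [[1.0, 2.0, 3.0], [0.0, 0.0, 6.0]]
y = block(x, causal_mean, double)
```

Changing the second node leaves the first node's output untouched, since the first node is pointed to only by itself.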

38:12

And then also here we are

missing the cross-attention

38:18

because this is a

decoder-only model.

38:19

So all we have is

this step here,

38:21

the multi-headed

attention, and that's

38:22

this line, the

communicate phase.

38:24

And then we have the feed

forward, which is the MLP,

38:27

and that's the compute phase.

38:29

I'll take questions

a bit later.

38:31

Then the MLP here is

fairly straightforward.

38:34

The MLP is just individual

processing on each node,

38:38

just transforming the feature

representation at that node.

38:41

So applying a

two-layer neural net

38:45

with a GELU nonlinearity,

which is just--

38:47

think of it as a ReLU

or something like that.

38:49

It's just a nonlinearity.
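
A per-node two-layer network with a GELU in between might look like the sketch below. The weights are arbitrary placeholders, the 4x hidden width is an assumed convention, and the exact erf form of GELU is used here (implementations sometimes use a tanh approximation instead).

```python
import math

def gelu(x):
    # Exact GELU: x times the standard normal CDF of x.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def linear(W, b, x):
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

def mlp(x, W1, b1, W2, b2):
    """Applied to each node independently: linear, nonlinearity, linear."""
    hidden = [gelu(h) for h in linear(W1, b1, x)]
    return linear(W2, b2, hidden)

n_embd, n_hidden = 2, 8        # hypothetical sizes (hidden = 4 * n_embd)
W1 = [[0.1 * (i + j) for j in range(n_embd)] for i in range(n_hidden)]
b1 = [0.0] * n_hidden
W2 = [[0.1 * (i - j) for j in range(n_hidden)] for i in range(n_embd)]
b2 = [0.0] * n_embd

out = mlp([1.0, -1.0], W1, b1, W2, b2)
```

Like ReLU, GELU passes large positive inputs through almost unchanged and squashes large negative inputs toward zero.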

38:51

And then MLP is straightforward.

38:53

I don't think there's

anything too crazy there.

38:55

And then this is the

causal self-attention part,

38:57

the communication phase.

38:59

So this is like

the meat of things

39:01

and the most complicated part.

39:03

It's only complicated

because of the batching

39:06

and the implementation detail

of how you mask the connectivity

39:10

in the graph so that

you can't obtain

39:13

any information

from the future when

39:15

you're predicting your token.

39:16

Otherwise, it gives

away the information.

39:18

So if I'm the fifth token and

if I'm the fifth position,

39:23

then I'm getting the fourth

token coming into the input,

39:26

and I'm attending to the

third, second, and first,

39:29

and I'm trying to figure

out what is the next token.

39:32

Well then, in this batch,

in the next element

39:34

over in the time dimension,

the answer is at the input.

39:37

So I can't get any

information from there.

39:40

So that's why this

is all tricky,

39:41

but basically, in

the forward pass,

39:45

we are calculating the queries,

keys, and values based on x.

39:50

So these are the keys,

queries, and values.

39:52

Here, when I'm

computing the attention,

39:54

I have the queries matrix

multiplying the keys.

39:58

So this is the dot product in

parallel for all the queries

40:00

and all the keys

in all the heads.

40:03

So I failed to mention

that there's also

40:06

the aspect of the heads, which

is also done all in parallel

40:08

here.

40:09

So we have the batch

dimension, the time dimension,

40:10

and the head dimension,

and you end up

40:12

with five-dimensional tensors,

and it's all really confusing.

40:14

So I invite you to step through

it later and convince yourself

40:17

that this is actually

doing the right thing.

40:19

But basically, you have the

batch dimension, the head

40:21

dimension and the

time dimension,

40:23

and then you have

features at them.

40:25

And so this is evaluating for

all the batch elements, for all

40:28

the head elements, and

all the time elements,

40:31

the simple Python that I gave

you earlier, which is query

40:34

dot product key.

40:35

Then here, we do a masked_fill,

and what this is doing

40:38

is it's basically clamping the

attention between the nodes

40:44

that are not supposed to

communicate to be negative

40:46

infinity.

40:47

And we're doing

negative infinity

40:48

because we're about to softmax,

and so negative infinity will

40:51

make basically the attention

at those elements be zero.
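
The effect of filling with negative infinity before the softmax can be checked with a small plain-Python sketch. The function name `causal_softmax` and the all-zero scores are illustrative assumptions; the actual code does this on batched tensors.

```python
import math

NEG_INF = float("-inf")

def causal_softmax(scores):
    """Row t may only attend to columns 0..t; future entries are set to -inf first."""
    T = len(scores)
    out = []
    for t in range(T):
        row = [scores[t][s] if s <= t else NEG_INF for s in range(T)]
        m = max(row)                              # finite: the diagonal is never masked
        exps = [math.exp(v - m) for v in row]     # exp(-inf) is exactly 0.0
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

weights = causal_softmax([[0.0] * 3 for _ in range(3)])
for row in weights:
    print(row)
```

With equal scores, the first row puts all its weight on itself, the second splits evenly over two nodes, and the third over three, while every masked entry is exactly zero.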

40:54

And so here we are going

to basically end up

40:56

with the weights, the affinities

between these nodes, optional

41:03

dropout.

41:03

And then here, attention

matrix multiply v is basically

41:08

the gathering of the information

according to the affinities

41:10

we calculated.

41:11

And this is just a

weighted sum of the values

41:14

at all those nodes.

41:15

So this matrix multiply

is doing that weighted sum.

41:19

And then transpose

contiguous view

41:20

because it's all

complicated and batched

41:22

in five-dimensional

tensors, but it's really not

41:24

doing anything,

optional dropout,

41:26

and then a linear projection

back to the residual pathway.

41:30

So this is implementing the

communication phase here.

41:34

Then you can train

this transformer.

41:37

And then you can generate

infinite Shakespeare.

41:41

And you will simply do this by--

41:43

because our block size is 8,

we start with some token,

41:47

say like, I used

in this case, you

41:50

can use something like a

new line as the start token.

41:53

And then you communicate

only to yourself

41:55

because there's a

single node, and you

41:57

get the probability

distribution for the first word

41:59

in the sequence.

42:00

And then you decode it

for the first character

42:03

in the sequence.

42:04

You decode the character.

42:05

And then you bring

back the character,

42:06

and you re-encode

it as an integer.

42:08

And now, you have

the second thing.

42:10

And so you get--

42:12

OK, we're at the first

position, and this

42:14

is whatever integer it is,

add the positional encodings,

42:17

goes into the sequence,

goes in the transformer,

42:19

and again, this token

now communicates

42:21

with the first token

and its identity.

42:26

And so you just keep

plugging it back.

42:28

And once you run out of the

block size, which is eight,

42:31

you start to crop,

because you can never

42:33

have block size more than

eight in the way you've

42:34

trained this transformer.

42:35

So we have more and more

context until eight.

42:37

And then if you want to

generate beyond eight,

42:39

you have to start cropping

because the transformer only

42:41

works for eight elements

in time dimension.
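
The sampling loop with context cropping can be sketched as follows. The model is replaced by a stand-in (`uniform_model`) that returns a uniform distribution over a made-up vocabulary of 5 tokens and records how long each context was; the function and parameter names are assumptions.

```python
import random

def generate(next_token_probs, start, max_new_tokens, block_size, rng):
    """Sample one token at a time, feeding each sample back in as input."""
    idx = list(start)
    for _ in range(max_new_tokens):
        context = idx[-block_size:]        # crop to the last block_size tokens
        probs = next_token_probs(context)  # distribution over the next token
        next_id = rng.choices(range(len(probs)), weights=probs, k=1)[0]
        idx.append(next_id)
    return idx

seen_lengths = []

def uniform_model(context):
    # Stand-in for a trained transformer: ignores the context, returns a uniform guess.
    seen_lengths.append(len(context))
    return [0.2] * 5

out = generate(uniform_model, start=[0], max_new_tokens=20,
               block_size=8, rng=random.Random(0))
```

The context grows from 1 to 8 tokens and then stays at 8, which is the cropping described above.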

42:43

And so all of these transformers

in the [INAUDIBLE] setting

42:47

have a finite block

size or context length,

42:50

and in typical models, this

will be 1,024 tokens or 2,048

42:54

tokens, something like that.

42:56

But these tokens are

usually like BPE tokens,

42:58

or SentencePiece tokens,

or WordPiece tokens.

43:00

There's many

different encodings.

43:02

So it's not like that long.

43:03

And so that's why, I

think, [INAUDIBLE].

43:05

We really want to

expand the context size,

43:06

and it gets gnarly

because the attention

43:08

is quadratic in the

[INAUDIBLE] case.

43:11

Now, if you want to implement

an encoder instead of a decoder

43:16

attention,

43:18

then all you have to

do is this [INAUDIBLE]

43:21

and you just delete that line.

43:23

So if you don't

mask the attention,

43:25

then all the nodes

communicate to each other,

43:27

and everything is

allowed, and information

43:29

flows between all the nodes.

43:31

So if you want to have the

encoder here, just delete.

43:35

All the encoder blocks

will use attention

43:38

where this line is deleted.

43:39

That's it.
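
The difference between decoder and encoder connectivity comes down to whether the mask is applied, which a tiny sketch can show. The function name `allowed` and the `causal` flag are assumptions for illustration.

```python
def allowed(T, causal):
    """allowed[t][s] is True when node s may send information to node t."""
    return [[(s <= t) or not causal for s in range(T)] for t in range(T)]

decoder_edges = allowed(8, causal=True)    # triangular: only the past and itself
encoder_edges = allowed(8, causal=False)   # mask removed: fully connected

print(sum(map(sum, decoder_edges)))  # 36
print(sum(map(sum, encoder_edges)))  # 64
```

With 8 nodes the masked graph has 36 edges (8 * 9 / 2) and the unmasked one has all 64.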

43:40

So you're allowing whatever--

this encoder might store say,

43:44

10 tokens, 10 nodes,

and they are all

43:46

allowed to communicate to each

other going up the transformer.

43:51

And then if you want to

implement cross-attention,

43:53

so you have a full

encoder-decoder transformer,

43:55

not just a decoder-only

transformer or a GPT.

43:59

Then we need to also add

cross-attention in the middle.

44:03

So here, there is a

self-attention piece where all

44:05

the--

44:06

there's a self-attention

piece, a cross-attention piece,

44:08

and this MLP.

44:09

And in the

cross-attention, we need

44:12

to take the features from

the top of the encoder.

44:14

We need to add one

more line here,

44:16

and this would be the

cross-attention instead of a--

44:20

I should have implemented

it instead of just pointing,

44:22

I think.

44:23

But there will be a

cross-attention line here.

44:25

So we'll have three

lines because we

44:26

need to add another block.

44:28

And the queries will

come from x but the keys

44:31

and the values will come

from the top of the encoder.

44:35

And there will be

basically information

44:36

flowing from the

encoder, strictly

44:38

to all the nodes inside x.

44:41

And then that's it.
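
Since the cross-attention line was only pointed at rather than written, here is a hedged sketch of what a three-line decoder block could look like. All of the pieces passed in are stubs chosen for illustration, and placing a norm before the cross-attention is an assumption that mirrors the pattern of the other two lines.

```python
def add(xs, ys):
    return [[a + b for a, b in zip(x, y)] for x, y in zip(xs, ys)]

def decoder_block(x, enc_out, self_attn, cross_attn, mlp, norm):
    x = add(x, self_attn(norm(x)))             # decoder nodes talk to each other (masked)
    x = add(x, cross_attn(norm(x), enc_out))   # queries from x, keys/values from encoder top
    x = add(x, mlp(norm(x)))                   # per-node compute
    return x

# Stubs standing in for the real layers.
def identity(nodes):
    return nodes

def zeros(nodes):
    return [[0.0] * len(v) for v in nodes]

def mean_of_encoder(nodes, enc_out):
    # Every decoder node receives the average of the encoder's top features.
    mean = [sum(col) / len(enc_out) for col in zip(*enc_out)]
    return [list(mean) for _ in nodes]

x = [[1.0, 1.0], [2.0, 2.0]]
enc_out = [[0.0, 4.0], [2.0, 0.0], [4.0, 2.0]]
out = decoder_block(x, enc_out, zeros, mean_of_encoder, zeros, identity)
print(out)  # [[3.0, 3.0], [4.0, 4.0]]
```

Information flows one way only: the encoder features feed into every node of `x`, and nothing flows back.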

44:42

So it's a very

simple modification

44:44

on the decoder attention.

44:47

So you'll hear people

talk that you have

44:49

a decoder-only model like GPT.

44:51

You can have an encoder-only

model like BERT,

44:53

or you can have an

encoder-decoder model

44:55

like say T5, doing things

like machine translation.

44:59

And in BERT, you can't train

it using this language modeling

45:04

setup that's

autoregressive, and you're just

45:06

trying to predict next

[INAUDIBLE] in the sequence.

45:07

You're training it doing

slightly different objectives.

45:09

You're putting in

the full sentence,

45:12

and the full sentence is

allowed to communicate fully.

45:14

And then you're trying to

classify sentiment or something

45:16

like that.

45:18

So you're not trying to model

the next token in the sequence.

45:21

So these are trained

slightly differently

45:26

using masking and other

denoising techniques.

45:31

OK.

45:32

So that's like the transformer.

45:34

I'm going to continue.

45:36

So yeah, maybe more questions.

45:38

[INAUDIBLE]

46:01

This is like we are enforcing

these constraints on it

46:06

by just masking [INAUDIBLE]

46:12

So I'm not sure

if I fully follow.

46:14

So there's different ways

to look at this analogy,

46:16

but one analogy is

you can interpret

46:18

this graph as really fixed.

46:20

It's just that every time

we do the communicate,

46:22

we are using different weights.

46:23

You can look at it that way.

46:24

So if we have block size

of eight in my example,

46:26

we would have eight nodes.

46:27

Here we have 2, 4, 6.

46:29

OK, so we'd have eight nodes.

46:30

They would be connected in--

46:33

you lay them out, and you only

connect from left to right.

46:35

[INAUDIBLE]

46:42

Why would they

connect-- usually,

46:44

the connections don't change

as a function of the data

46:46

or something like that--

46:47

[INAUDIBLE]

47:00

I don't think I've seen

a single example where

47:02

the connectivity

changes dynamically

47:03

as a function of the data.

47:04

Usually, the

connectivity is fixed.

47:05

If you have an encoder,

and you're training a BERT,

47:07

you have how many

tokens you want,

47:09

and they are fully connected.

47:11

And if you have a

decoder-only model,

47:13

you have this triangular

thing, and if you

47:15

have encoder-decoder,

then you have

47:16

awkwardly two pools of nodes.

47:21

Yeah.

47:24

Go ahead.

47:25

[INAUDIBLE] I wonder, you

know much more about this

47:45

than I know.

47:46

But do you have a sense of

like if you ran [INAUDIBLE]

48:00

In my head, I'm thinking

[INAUDIBLE] but then you also

48:08

have different things for

one or more of [INAUDIBLE]--

48:13

Yeah, it's really

hard to say, so that's

48:15

why I think this paper is so

interesting because like, yeah,

48:17

usually, you'd

see like the path,

48:18

and maybe they had

path internally.

48:19

They just didn't publish it.

48:20

All you can see is things that

didn't look like a transformer.

48:23

I mean, you have ResNets,

which have lots of this.

48:26

But a ResNet would be

like this, but there's

48:29

no self-attention component.

48:31

But the MLP is there

kind of in a ResNet.

48:35

So a ResNet looks

very much like this

48:37

except there's no-- you can

use layer norms in ResNets,

48:40

I believe, as well.

48:41

Typically, sometimes,

they can be batch norms.

48:43

So it is kind of like a ResNet.

48:45

It is like they took

a ResNet, and they

48:47

put in a self-attention

block in addition

48:50

to the preexisting

MLP block, which

48:52

is kind of like convolutions.

48:53

And MLP was, strictly

speaking, a convolution,

48:55

one by one convolution,

but I think

48:59

the idea is similar in that MLP

is just like a typical weights,

49:04

nonlinearity, weights operation.

49:11

But I will say, yeah, this

is kind of interesting

49:13

because a lot of

work is not there,

49:15

and then they give

you this transformer.

49:17

And then it turns

out 5 years later,

49:18

it's not changed, even though

everyone's trying to change it.

49:20

So it's interesting to me

that it's like a package,

49:23

in like a package,

which I think is really

49:25

interesting historically.

49:26

And I also talked

to the paper authors,

49:30

and they were

unaware of the impact

49:32

that the transformer

would have at the time.

49:33

So when you read this paper,

actually, it's unfortunate

49:37

because this is the paper

that changed everything,

49:39

but when people read it,

it's like question marks

49:41

because it reads like a pretty

random machine translation

49:45

paper.

49:46

It's like, oh, we're

doing machine translation.

49:47

Oh, here's a cool architecture.

49:48

OK, great, good results.

49:51

It doesn't know what's

going to happen.

49:53

[LAUGHS] And so when

people read it today,

49:56

I think they're

confused potentially.

50:00

I will have some

tweets at the end,

50:02

but I think I would

have renamed it

50:03

with the benefit of hindsight

of like, well, I'll get to it.

50:08

[INAUDIBLE]

50:20

Yeah, I think that's a

good question as well.

50:22

Currently, I mean,

I certainly don't

50:24

love the autoregressive

modeling approach.

50:27

I think it's kind of

weird to sample a token

50:29

and then commit to it.

50:31

So maybe there are

some ways, some hybrids

50:36

with diffusion as

an example, which

50:38

I think would be

really cool, or we'll

50:41

find some other ways to edit

the sequences later but still

50:44

in the autoregressive framework.

50:47

But I think diffusion is

like an up and coming modeling

50:49

approach that I personally

find much more appealing.

50:51

When I sample text, I don't

go chunk, chunk, chunk,

50:54

and commit.

50:55

I do a draft one, and then

I do a better draft two.

50:58

And that feels like

a diffusion process.

51:00

So that would be my hope.

51:05

OK, also a question.

51:07

So yeah, you'd think

the [INAUDIBLE]

51:20

And then once we

have the edge weights,

51:21

we just have to multiply

it by the values,

51:23

and then you just

[INAUDIBLE] it.

51:25

Yes, yeah, it's right.

51:27

And you think there's MLG

within graph neural networks

51:30

and they'll potentially--

51:32

I find the graph neural

networks like a confusing term

51:34

because, I mean,

yeah, previously,

51:38

there was this notion of--

51:40

I feel like maybe today

everything is a graph neural

51:42

network because a transformer