1:02:52

Stanford CS231N Deep Learning for Computer Vision | Spring 2025 | Lecture 1: Introduction

Stanford Online

May 10, 2026

Transcript

0:05

This is CS231n.

0:07

And I'm Professor Fei-Fei

Li from computer science

0:11

department.

0:11

I will be co-teaching

this quarter

0:14

with Professor Ehsan Adeli

and my graduate student Zane.

0:19

So you'll meet them as well

as our wonderful TA team

0:23

that you will meet later.

0:24

So I just want to get started.

0:28

So this is what

excites me, that AI

0:32

has become such an

interdisciplinary field,

0:35

that what you're going

to learn in this class,

0:38

of course, is very technical.

0:40

It's about computer

vision and deep learning.

0:42

But I really do

hope that you take

0:44

it to whichever discipline you

work in and are passionate about

0:48

and apply it.

0:49

So we hear a lot

about the field of AI.

0:52

So how do we position

computer vision

0:56

and the scope of this class?

0:57

If you consider AI

as this big bubble,

1:02

computer vision is very

much an integral part of AI.

1:07

Some of you have heard me

saying that not only vision is

1:10

part of intelligence, it's a

cornerstone to intelligence.

1:13

Unlocking the mystery

of visual intelligence

1:16

is unlocking the

mystery of intelligence.

1:20

But one of the most important

tools, mathematical tools,

1:25

to solving AI is machine

learning or some people

1:29

call statistical

machine learning.

1:31

And this is exactly what

we will be talking about.

1:36

Within the field of

machine learning,

1:38

in the past 10 plus years, we

have seen a major revolution

1:42

called deep learning.

1:43

And I'll explain a little

bit of what deep learning is.

1:46

Deep learning is a set

of algorithmic techniques

1:50

that is built around

a family of algorithms

1:54

called neural networks.

1:55

And so if you ask me to pinpoint

the scope of this class,

2:02

we'll not be able to cover the

entirety of computer vision.

2:05

We'll not be able to cover

the entirety of machine

2:07

learning or deep learning.

2:09

But we're going to cover the

core intersection of these two

2:12

fields.

2:13

And of course, just

like the entirety of AI,

2:18

computer vision is

becoming more and more

2:20

an interdisciplinary field.

2:23

A lot of the techniques

we use as well as

2:26

the problems we

work with intersect

2:28

with many different other

fields, like natural language

2:31

processing, speech recognition,

robotics, and AI as a whole

2:37

is a field that intersects

with mathematics, neuroscience,

2:41

computer science,

psychology, physics, biology,

2:44

and many application

areas from medicine

2:46

to law to education

and business and so on.

2:49

So what you will get for this

lecture, our first lecture,

2:55

is I'll give a very

brief history of computer

2:58

vision and deep learning.

2:59

And then Professor Adeli will go

over the overview of this course

3:05

and lay the groundwork of

how this course is set up

3:08

and what our expectations are.

3:11

So the history of vision did

not begin when you were born

3:19

or humanity was born.

3:20

The history of vision began

540 million years ago.

3:25

You might ask, what happened

540 million years ago?

3:29

Why are we pinpointing a

relatively specific date or year

3:34

in evolution.

3:35

Well, it's because a

lot of fossil studies

3:37

have shown us that there is a

mystery period called Cambrian

3:43

explosion.

3:45

The fossil studies showed about

10 million years in evolution

3:49

during that time, which is

a very short period of time

3:52

for evolution.

3:53

We see the explosion

of animal species

3:58

in the fossil study, which means

before the Cambrian explosion,

4:02

life on Earth was pretty chill.

4:05

It was actually in the water.

4:06

There's no animals

on the land yet.

4:10

And animals just float around.

4:13

So what caused this explosion

in animal speciation?

4:18

There were many theories, from

climate to chemical composition

4:21

of the ocean water.

4:23

But one of the most compelling

theories was the onset of eyes.

4:29

The first animal,

a trilobite, they

4:32

gained photosensitive cells.

4:34

So the eyes we

were talking about

4:37

were not sophisticated lenses

and retinas and nerve cells.

4:41

It was literally a

very simple pinhole.

4:44

And that pinhole

collected light.

4:47

Once you collected light,

life is completely different.

4:53

Without sensors,

life is metabolism.

4:57

It's very passive.

4:59

It is just metabolism.

5:01

And you come and go.

5:02

With sensors, you become an

integral part of the environment

5:06

that you might want to change.

5:08

You might want to

actually survive in it.

5:11

Some animals or plants

become your dinner.

5:16

And you become

someone else's dinner.

5:18

So evolutionary forces

drive intelligence

5:24

to evolve because of

the onset of sensors,

5:27

because of the onset of

vision, along with haptics

5:31

or tactile sensing.

5:33

Those are the oldest

sensors for animals.

5:38

So that entire course

of 540 million years

5:41

of evolution of vision is the

evolution of intelligence.

5:46

Vision as one of the

primary senses of animals

5:49

drove the development of

nervous system, the development

5:54

of intelligence.

5:55

Almost all animals on

Earth today we know of

5:59

have vision or use vision as

one of the primary senses.

6:03

Humans are especially

visual animals.

6:06

More than half of

our cortical cells

6:08

are involved in

visual processing.

6:11

And we have a very complex

and convoluted visual system.

6:15

So this is what excites me

to enter the field of vision.

6:19

And I hope it excites you.

6:21

So now, let's just fast

forward from Cambrian explosion

6:30

to actually human civilization.

6:33

Humans do innovate.

6:35

And not only we see.

6:37

We want to build

machines that see.

6:40

So here's a couple of

drawings by, of course,

6:44

Leonardo da Vinci, who

was just forever curious

6:48

about everything.

6:49

He studied camera obscura for

how to make seeing machines.

6:56

In fact, even way before

him, in ancient Greece

7:01

and in ancient China,

we have seen documents

7:05

about thinkers,

philosophers thinking

7:09

about how to project

objects through pinholes

7:15

and to create images of objects.

7:19

And of course, in

our modern life,

7:22

cameras have truly exploded.

7:25

But cameras are not enough for

seeing, just like eyes are not

7:30

enough for seeing.

7:31

These are apparatus.

7:33

We need to understand how

visual intelligence happens.

7:35

And that's really the

crux of this course.

7:38

So let's just talk a little bit

of the history that brought us

7:45

to this intersection of deep

learning and computer vision.

7:49

So let me go back to the 1950s.

7:57

The 1950s-- a set of very

critically important experiments

8:03

happened in neuroscience.

8:05

And that was the study

of the visual pathways

8:08

of mammals, especially

the seminal work

8:10

by Hubel and Wiesel.

8:11

They put electrodes

into live, anesthetized cats.

8:18

And then they studied

the receptive field

8:21

of neurons that are in

the primary visual cortex.

8:25

What they have learned,

to their surprise,

8:28

are two very important things.

8:31

One is that neurons that

are responsible for seeing

8:38

in the primary

visual cortex have

8:41

their own individual

receptive fields.

8:44

Receptive fields means

that for every neuron,

8:48

there is a part of

space it actually sees.

8:52

It's not all the space.

8:54

It's not very big.

8:55

It tends to be a very

confined patch of the space.

9:00

And within that space, it

sees specialized patterns,

9:06

simple patterns, when you're

measuring from the early part

9:12

of the visual pathway.

9:15

And by and large, in the

primary visual cortex,

9:18

which is around here in the back

of the head, not near your eyes,

9:23

it's oriented edges or

moving oriented edges.

9:27

So every neuron,

some neuron will

9:28

be seeing an edge like this.

9:30

Some will be seeing an

edge like this or this.

9:32

And that's how the computation

in the brain begins.
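
[Editor's note: the receptive-field idea described above can be sketched in a few lines of Python. This is only a toy illustration, not the model Hubel and Wiesel used: the 3x3 kernels, the `neuron_response` helper, and the 5x5 test image are all assumptions made for this example. Each toy neuron looks at one small patch of the image and responds strongly only to an edge of its preferred orientation.]

```python
# Toy model of an orientation-selective neuron (illustrative assumption only).
# The neuron sees one 3x3 patch of the image (its receptive field) and
# computes a weighted sum, so it responds to one edge orientation.

VERTICAL_EDGE = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

HORIZONTAL_EDGE = [[-1, -1, -1],
                   [0, 0, 0],
                   [1, 1, 1]]


def neuron_response(image, kernel, top, left):
    """Weighted sum over the 3x3 patch at (top, left), clipped at zero."""
    total = 0
    for i in range(3):
        for j in range(3):
            total += kernel[i][j] * image[top + i][left + j]
    return max(0, total)


# A 5x5 image that is dark on the left and bright on the right,
# which places a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Patch covering the edge, seen by a vertical-edge neuron.
vertical = neuron_response(image, VERTICAL_EDGE, 1, 0)
# Same patch, seen by a horizontal-edge neuron.
horizontal = neuron_response(image, HORIZONTAL_EDGE, 1, 0)
# Vertical-edge neuron whose patch lies entirely in the bright region.
away_from_edge = neuron_response(image, VERTICAL_EDGE, 1, 2)

print(vertical, horizontal, away_from_edge)
```

[In this sketch only the neuron whose preferred orientation and patch location both match the edge produces a nonzero response.]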

9:39

The second thing they learned

is that visual pathway

9:42

is hierarchical.

9:43

As you move beyond

the visual pathway,

9:47

the neurons feed

into other neurons.

9:50

And the neurons in

the higher layers

9:54

or deeper layers of

the visual hierarchy

9:57

have more complex

receptive fields.

9:59

So if you begin

with oriented edges,

10:04

you might feed into

a corner receptor.

10:06

You might feed into

an object receptor.

10:10

I'm overly simplifying.

10:12

But that's the concept, is that

neurons feed into each other.

10:16

And then they create this

big network of computation.

10:23

Of course, most of

you sitting here

10:25

are already thinking

the way I've

10:27

been describing this will

have a profound impact

10:30

on the neural network

modeling of visual algorithms.

10:36

Let's keep going.

10:37

That's year 1959.

10:40

It's very early

studies of seeing.

10:43

By the way, about

30 years later--

10:48

maybe not quite-- 20

something years later,

10:50

Hubel and Wiesel won the

Nobel Prize in medicine

10:54

for studying this,

uncovering the principles

10:59

of visual processing.

11:01

Another milestone in the early

history of computer vision

11:05

was the first PhD thesis

of computer vision.

11:09

Most people attribute

Larry Roberts in 1963

11:13

writing the first PhD

thesis just studying shape.

11:17

And this is a very, very

character representation

11:21

of the world.

11:22

And the idea is that, can

we take a shape like this

11:26

and understand the surfaces

and the corners and features

11:30

of this shape?

11:32

It's intuitive that humans do.

11:34

So an entire PhD thesis

is devoted to this.

11:39

And that's the beginning

of computer vision.

11:44

And around that time, in

1966, an MIT professor

11:52

created a summer

project in MIT and asked

11:56

to hire a few undergrads, very

smart ones, to study vision.

12:03

And the goal was pretty

much solve computer vision

12:07

or solve vision for one summer.

12:09

Of course, just like the

rest of the history of AI,

12:13

we tend to be overoptimistic

of what we can

12:18

do in a short period of time.

12:20

So vision did not get

solved in that summer.

12:24

In fact, it has blossomed into

an incredible computer science

12:29

field.

12:30

If you go to our annual

conferences every year now,

12:33

it has more than 10,000

people attending.

12:36

But 1960s is where, between

Larry Roberts's PhD thesis as well

12:43

as this kind of project, we

in our field considered that

12:48

the beginning of the

field of computer vision.

12:51

A seminal book was written

in the 1970s by David Marr,

12:55

who unfortunately

died too early.

12:58

He wanted to study vision

systematically and start

13:01

to consider how visual

processing happens.

13:05

Even though this

is not explicitly

13:07

stated, but there is

a lot of inspiration

13:10

from neuroscience and

cognitive science.

13:12

He was thinking about, if

you take an input image,

13:20

how do we visually process

and understand the image?

13:23

Maybe the first layer is more

like edges, just like we saw.

13:28

He calls it primal sketch.

13:30

And then there is a 2 and 1/2 D

sketch which separates different

13:37

depth of the objects

in the image.

13:42

So the ball is the

foreground object.

13:45

And then the grass here--

13:47

oh, no, not grass.

13:48

The ground here

is the background.

13:51

So he does these 2

and 1/2 D sketch.

13:53

And then, finally, David Marr

believes the grand holy grail

14:01

victory of solving vision is

to know the entire full 3D

14:06

representation.

14:07

And that is actually the

hardest thing of vision.

14:12

Let me digress for 20 seconds.

14:15

Because if you think about

vision for all animals,

14:20

it's an ill-posed problem.

14:23

Since the early trilobites

who collected light

14:27

from underwater, light--

14:30

the world through photons

is projected on something

14:35

on a surface more or less 2D.

14:38

At that time, it was just,

I don't know, some patch

14:40

in the animal.

14:42

But right now, for

us, it's a retina.

14:45

But the actual world is 3D.

14:47

So recovering 3D information,

the entire 3D world,

14:55

from 2D images is the

fundamental problem nature had

15:00

to solve and computer

vision has to solve.

15:02

And mathematically, that's

an ill-posed problem.

15:05

So what did we later do?

15:07

Anybody have a wild guess?

15:14

[INAUDIBLE]

15:17

Yes.

15:18

The trick that nature did is

develop multiple eyes, mostly

15:22

two.

15:22

Some animals have more than two.

15:25

And then you

triangulate information.

15:28

But two eyes are not enough.

15:29

You actually have to understand

correspondences and all that.
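
[Editor's note: a small sketch of why one 2D view is ambiguous and how a second eye helps. It assumes an idealized pinhole camera and a rectified two-camera setup, which the lecture does not spell out. The focal length, baseline, and pixel coordinates are made-up numbers, and `project` and `depth_from_disparity` are hypothetical helper names.]

```python
# Assumed setup: ideal pinhole cameras, rectified stereo pair.
# All numeric values below are invented for illustration.

def project(focal_length_px, x_m, z_m):
    """Pinhole projection of a point at lateral offset x_m and depth z_m."""
    return focal_length_px * x_m / z_m


def depth_from_disparity(focal_length_px, baseline_m, x_left_px, x_right_px):
    """Depth of a matched point from its horizontal shift between two views."""
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        raise ValueError("a point in front of both cameras needs positive disparity")
    return focal_length_px * baseline_m / disparity


focal_length_px = 800.0

# One camera: a near point and a point twice as far and twice as offset
# land on the same pixel, so depth cannot be recovered from one image alone.
near_pixel = project(focal_length_px, 1.0, 4.0)
far_pixel = project(focal_length_px, 2.0, 8.0)

# Two cameras 0.1 m apart: once the same point has been matched in both
# images (the correspondence problem), its depth follows by triangulation.
depth_m = depth_from_disparity(focal_length_px, 0.1, 420.0, 400.0)

print(near_pixel, far_pixel, depth_m)
```

[The hard part in practice is the matching step, which this sketch simply takes as given.]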

15:33

We'll touch on some

of these topics.

15:35

But there are other computer

vision classes that Stanford

15:38

offers that also specifically

talk about 3D vision.

15:42

But the point is it's

a very hard problem.

15:45

And we have to solve it.

15:47

Nature has solved it.

15:48

Humans have solved it but

not to extreme precision.

15:53

In fact, humans are

not that precise.

15:55

I roughly know the 3D shapes.

15:58

But I don't have geometric

precision of all the shapes.

16:03

So that's one thing to

consider and appreciate

16:06

how hard this problem is.

16:08

Another thing that is very

different for computer vision

16:12

and language is

actually something

16:15

philosophically subtle.

16:17

Language doesn't

exist in nature.

16:20

You cannot point to something

and say there is language.

16:24

Language is a purely

generated thing.

16:30

I don't even know

what word to use.

16:31

It comes through our brain.

16:35

It's generated.

16:37

It's 1D.

16:38

It's sequential.

16:40

So this actually has profound

implications in the latest

16:44

wave of GenAI algorithms.

16:47

This is why these

LLMs, which is outside

16:50

of the scope of this class,

is so powerful because we

16:54

can model language that way.

16:56

But vision is not generated.

16:58

There is actually

a physical world

17:01

out there respecting the laws

of physics and materials and all

17:05

that.

17:06

So vision has very

different tasks.

17:09

So I just want you to appreciate

the difference between language

17:14

and vision and actually,

frankly, appreciate nature,

17:17

how it solved this problem.

17:19

Let's keep going.

17:21

1970s, the early pioneers of

computer vision, without data,

17:28

without really much

of powerful computers,

17:32

without the mathematical

advances we have seen today,

17:36

are already beginning to solve

some of the harder problems

17:40

of computer vision-- for

example, recognition of objects.

17:43

Here in Stanford, one

of the pioneering work

17:48

is called generalized cylinders

by Rodney Brooks and Tom

17:52

Binford.

17:52

And ironically, Rodney Brooks

today is on campus, actually,

17:58

over there giving a talk

at the robotics conference.

18:03

And he went on to become

one of the greatest

18:05

roboticists of our time

and was founder of Roomba

18:10

and many other robots.

18:11

And then not very far from us

in another part of Palo Alto,

18:16

researchers have worked on

these also compositional models

18:24

of human body and objects.

18:27

And then in the 1980s, digital

photos start to appear.

18:34

At least photos start to appear.

18:37

And people can digitize

that a little bit.

18:39

And then there are some

great work in edge detection.

18:43

You look at all this

and probably feel

18:48

a sense of disappointment.

18:50

I mean, it's kind of trivial

to get some sketches and edges.

18:55

And it's not really

going anywhere.

18:58

That's how computer

vision worked at that time.

19:02

And in fact, you're

not so wrong.

19:03

That was around the

time before many of you

19:07

were born that we

entered AI winter.

19:10

The field entered AI winter

because the enthusiasm

19:15

and, hence, funding for AI

research has really dwindled.

19:18

A lot of things didn't deliver.

19:20

Computer vision didn't deliver.

19:22

Expert systems didn't deliver.

19:24

Robotics didn't deliver.

19:26

But under the hood of this

winter, a lot of research

19:32

started to grow from

different fields,

19:34

like computer vision,

NLP, robotics.

19:37

So let's also look at

another strand of research

19:40

that had a profound

implication in computer vision,

19:43

is that cognitive

and neuroscience

19:45

continue to blossom.

19:46

And what is really

important, especially

19:49

for the field of computer

vision, is cognitive

19:52

and neuroscience is starting

to point us to the North Star

19:55

problems we should work on.

19:57

For example,

psychologists have told us

20:00

there's something special

about seeing nature,

20:02

seeing real world.

20:06

This is a study by

Irv Biederman, who

20:09

shows that the detection

of bicycles on two images

20:13

differ depending on if the

images are scrambled or not.

20:18

Think about it.

20:19

From a photon point of

view, these two bicycles

20:22

land in the same

location on your retina.

20:26

But somehow the

rest of the image

20:28

impacts the viewer,

seeing the target objects.

20:39

So there is something

telling us that seeing

20:41

the entire forest

or the entire world

20:44

impacts the way we see objects.

20:46

It also tells us visual

processing is very fast.

20:49

Here's another direct measure

of how fast we detect objects.

20:55

This is an early 1970s

experiment showing people

21:00

a video.

21:03

And the test for the subject

is to detect the human

21:07

in one of the frames.

21:09

I suppose every one of you

have seen that human in one

21:11

of the frames.

21:13

But think about how

remarkable your eyes are

21:15

or your brain is because

you've never seen this video.

21:19

I didn't tell you which frame

that the target object would

21:22

appear.

21:23

I did not tell you

what the target

21:24

object will look like, where it

is, its gestures, and all that.

21:28

Yet, you have no problem

detecting the humans.

21:34

And on top of that,

these frames are

21:37

played at 10 Hertz,

which means you're

21:39

seeing every frame for

only 100 milliseconds.

21:43

And this is how remarkable

our visual system is.

21:47

In fact, Simon Thorpe, another

cognitive neuroscientist,

21:53

has measured the speed.

21:55

If you hook people

up in EEG caps

21:58

and show them complex

natural scenes

22:01

and ask human subjects

to categorize things

22:05

with animals--

22:07

versus things without animals--

22:10

hundreds of them.

22:11

And then you measure

the brain wave.

22:13

It turned out, after 150

milliseconds of seeing a photo,

22:18

your brain already has

a differential signal

22:22

that categorizes.

22:24

You might not be so impressed.

22:25

Because compared to today's

GPUs and modern chips,

22:29

150 milliseconds is really

orders of magnitude slower.

22:34

But you got to admire.

22:37

Our wetware, our

brain, our neurons

22:40

don't work as fast

as transistors.

22:43

150 milliseconds is

actually really fast.

22:46

It's only a few

hops in the brain

22:49

in terms of neural processing.

22:51

So yet, again,

this is telling us

22:53

humans are really good at seeing

objects and categorizing them.

22:59

In fact, not only we're

so good at seeing objects

23:02

and categorizing them, we

even develop specialized brain

23:05

areas that have expert

ability in recognizing

23:10

faces or places or body parts.

23:13

And these are discoveries by MIT

neurophysiologists in the 1990s

23:19

and early 21st century.

23:21

So all these studies tell

us, well, we should not just

23:26

be studying these kind

of character shapes

23:30

or the sketches of images.

23:33

We really should go after

important fundamental problems

23:38

that drive visual intelligence.

23:40

And one of those

problems that everything

23:43

has been telling us is

object recognition--

23:46

is object recognition

in natural setting.

23:49

There is a lot of objects

out there in the world.

23:52

And studying this

is going to be part

23:57

of the unlocking of

visual intelligence.

24:00

And that's what we did.

24:01

As a field, we

started by looking

24:04

at how we can separate

foreground objects

24:08

from background objects.

24:09

This is called recognition

by grouping in the 1990s.

24:14

Keep in mind, we're

still in AI winter.

24:16

But research is actually

happening and progressing.

24:20

And then there is

studies of features.

24:24

And some of you

might still remember

24:27

SIFT features and matching.

24:29

And when I entered grad school,

the most exciting thing

24:33

was face detection.

24:34

I remembered that first

year in my grad school,

24:37

this paper was published.

24:39

And five years later,

the first digital camera

24:42

used this paper's algorithm and

delivered automatic face focus

24:49

because of face detection.

24:51

So things started to work

and taken into industry.

24:56

And then around the

early 21st century,

25:01

a very important thing

started to happen,

25:04

is internet started to happen.

25:06

When internet started to happen,

data started to proliferate.

25:12

And the combination of

digital cameras and internet

25:16

started to give the

field of computer vision

25:19

some data to work with.

25:22

So in that early days, we're

working with thousands of images

25:26

or tens of thousands of images

to study the visual recognition

25:30

problem or the object

recognition problem.

25:32

So you've got data sets

like Pascal Visual Object

25:36

Challenge or Caltech 101.

25:40

I'm going to pause here.

25:43

And this is where the first

thread of computer vision

25:50

start to progress.

25:51

And you might be wondering,

why is she pausing?

25:54

Because I'm going to come back

and talk about deep learning.

25:57

So while this field of

vision was progressing

26:03

through neurophysiology

to computer vision,

26:06

to cognitive neuroscience,

to computer vision again,

26:11

a separate effort is

going on in parallel.

26:14

And that eventually

became deep learning.

26:17

It started from these early

studies of neural network,

26:22

things like perceptron.

26:24

And people like Rumelhart

started to work.

26:29

And of course, Geoff

Hinton in his early days,

26:32

started to work with a small

number of artificial neurons

26:35

and look at how that can

process information and learn.

26:41

And you've heard people like the

great minds like Marvin Minsky

26:48

and his colleagues working

on different aspects

26:52

of this perceptron.

26:54

But Marvin Minsky did say that

perceptrons cannot learn these

27:02

XOR logic functions.

27:05

And that caused a little bit

of a setback in neural network.
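
[Editor's note: the XOR limitation can be checked with a short Python sketch. It assumes the classic setting of a single threshold unit with two inputs. The weight grid searched here is an arbitrary choice, and the hand-picked two-layer weights are just one of many possible solutions, so this is an illustration and not a proof.]

```python
# Assumption: a "perceptron" here is one threshold unit,
# output = step(w1*x1 + w2*x2 + b). Grid and weights are illustrative.

XOR_CASES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def step(z):
    """Threshold activation: fire only when the input is positive."""
    return 1 if z > 0 else 0


def single_unit(w1, w2, b, x1, x2):
    return step(w1 * x1 + w2 * x2 + b)


def fits_xor(w1, w2, b):
    return all(single_unit(w1, w2, b, x1, x2) == y for (x1, x2), y in XOR_CASES)


# Try every weight combination on a coarse grid from -4.0 to 4.0.
grid = [k * 0.5 for k in range(-8, 9)]
single_layer_solutions = [
    (w1, w2, b) for w1 in grid for w2 in grid for b in grid if fits_xor(w1, w2, b)
]


def two_layer_xor(x1, x2):
    """Two hidden threshold units (OR and AND) feeding one output unit."""
    h_or = step(x1 + x2 - 0.5)
    h_and = step(x1 + x2 - 1.5)
    return step(h_or - h_and - 0.5)


two_layer_outputs = [two_layer_xor(x1, x2) for (x1, x2), _ in XOR_CASES]

print(len(single_layer_solutions), two_layer_outputs)
```

[No single unit on the grid reproduces XOR, while adding one hidden layer does, which is why the later move to multi-layer networks mattered.]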

27:10

Well, things continued to

progress despite the setback.

27:14

And one of the most important

work before the first inflection

27:21

point is this neocognitron

work by Fukushima in Japan.

27:25

Fukushima hand-designed a neural

network that looks like this.

27:31

So it has about

five or six layers.

27:35

And then he kind of designed

the different functions

27:41

across the layers,

which you will

27:43

learn more, that

more or less was

27:46

inspired by the visual

pathway that I was describing.

27:50

Remember the cat experiment

from simple receptive field

27:54

to more complicated

receptive field.

27:56

And he was doing that here.

27:59

The early layers have

simple functions.

28:01

And then the later

layers

28:03

have more complex functions.

28:05

And the simple ones can

call it convolution.

28:08

Or he uses the

convolution function.

28:10

And the more complex one, he

was pooling the information

28:13

from the convolution layers.
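
[Editor's note: a minimal Python sketch of the two kinds of layers mentioned here, a convolution step followed by a pooling step. It is not Fukushima's actual neocognitron: the kernel values, the 6x6 image, and the 2x2 max pooling are assumptions chosen for illustration, and the "convolution" is written as a sliding weighted sum without flipping the kernel, as is common in deep learning code.]

```python
# Illustrative assumptions: hand-set 3x3 kernel, 6x6 image, 2x2 max pooling.

def convolve_valid(image, kernel):
    """Slide the kernel over every position where it fits entirely."""
    k = len(kernel)
    rows = len(image) - k + 1
    cols = len(image[0]) - k + 1
    out = []
    for r in range(rows):
        row = []
        for c in range(cols):
            total = 0
            for i in range(k):
                for j in range(k):
                    total += kernel[i][j] * image[r + i][c + j]
            row.append(max(0, total))  # keep only positive responses
        out.append(row)
    return out


def max_pool_2x2(feature_map):
    """Summarize each non-overlapping 2x2 block by its largest response."""
    pooled = []
    for r in range(0, len(feature_map) - 1, 2):
        row = []
        for c in range(0, len(feature_map[0]) - 1, 2):
            block = [feature_map[r][c], feature_map[r][c + 1],
                     feature_map[r + 1][c], feature_map[r + 1][c + 1]]
            row.append(max(block))
        pooled.append(row)
    return pooled


vertical_edge_kernel = [[-1, 0, 1],
                        [-1, 0, 1],
                        [-1, 0, 1]]

# Dark left half, bright right half.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]

feature_map = convolve_valid(image, vertical_edge_kernel)  # 4x4 simple responses
pooled = max_pool_2x2(feature_map)                         # 2x2 summary

print(feature_map)
print(pooled)
```

[The convolution output marks where the edge is; the pooled output keeps the fact that an edge was found while discarding some of the exact position.]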

28:15

So neocognitron was

really an engineering feat

28:19

because every parameter

was hand-designed.

28:24

There are hundreds

of parameters.

28:26

He has to just meticulously

put them together

28:29

so that this small

neural network can

28:32

recognize digits or letters.

28:35

So the real breakthrough

came around that time in 1986

28:41

is a learning rule.

28:43

That learning rule is

called backpropagation.

28:45

It's going to be one

of our first classes

28:47

to show you that

Rumelhart, Geoff Hinton--

28:52

they took neural

network architecture

28:58

and introduced an error

correcting objective function

29:04

so that if you put in

some input and know

29:07

what the correct

output is, how do you

29:10

take the difference between

what the neural network outputs

29:14

versus the actual

correct answer and then

29:17

propagate the information

back so that you

29:22

can improve the parameters

along the neural network?

29:28

And that propagation

from the output

29:31

back to the entire

neural network

29:33

is called backpropagation.

29:35

It follows some of these

basic calculus chain rules.
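
[Editor's note: a minimal Python sketch of backpropagation on the smallest network that still needs the chain rule. The architecture (one tanh hidden unit, one linear output), the squared-error loss, the starting weights, and the learning rate are all assumptions for illustration and are not taken from the 1986 paper. The analytic gradients are compared against finite differences as a sanity check.]

```python
import math

# Assumed toy network: h = tanh(w1 * x), y = w2 * h, loss = 0.5 * (y - target)^2.

def forward(w1, w2, x):
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y


def loss(w1, w2, x, target):
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backward(w1, w2, x, target):
    """Propagate the output error back to both weights with the chain rule."""
    h, y = forward(w1, w2, x)
    dloss_dy = y - target                      # error at the output
    dloss_dw2 = dloss_dy * h                   # y = w2 * h
    dloss_dh = dloss_dy * w2                   # error passed back to the hidden unit
    dloss_dw1 = dloss_dh * (1.0 - h * h) * x   # derivative of tanh is 1 - tanh^2
    return dloss_dw1, dloss_dw2


x, target = 1.5, 0.8
w1, w2 = 0.3, -0.2

# Check the analytic gradients against central finite differences.
grad_w1, grad_w2 = backward(w1, w2, x, target)
eps = 1e-6
numeric_w1 = (loss(w1 + eps, w2, x, target) - loss(w1 - eps, w2, x, target)) / (2 * eps)
numeric_w2 = (loss(w1, w2 + eps, x, target) - loss(w1, w2 - eps, x, target)) / (2 * eps)

# Use the gradients to improve the parameters with plain gradient descent.
initial_loss = loss(w1, w2, x, target)
learning_rate = 0.1
for _ in range(200):
    g1, g2 = backward(w1, w2, x, target)
    w1 -= learning_rate * g1
    w2 -= learning_rate * g2
final_loss = loss(w1, w2, x, target)

print(grad_w1, numeric_w1, grad_w2, numeric_w2, initial_loss, final_loss)
```

[The same pattern, an error at the output multiplied backward through each layer's local derivative, is what scales up to networks with millions of parameters.]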

29:39

And that was a watershed moment

for neural network algorithm.

29:47

And of course, we're still smack

in the middle of AI winter.

29:50

All these work was happening

without public fanfare.

29:54

But of course, in the

world of research,

29:57

these are very

important milestones.

29:59

One of the earliest

applications of this neural

30:03

network with backpropagation

is Yann LeCun's convolutional

30:07

neural network, made in the

1990s when he was working

30:10

at Bell Labs.

30:11

And what he did is just created

a slightly bigger network,

30:15

about seven layers-ish,

and made it good enough

30:20

with great engineering

capability to recognize letters.

30:25

And it was actually shipped

to some part of the US Postal

30:28

Offices and banks to

read digits and letters.

30:33

So that was an application

of early neural network.

30:37

And then Geoff Hinton

and Yann LeCun

30:41

continued to work

on neural network.

30:43

It didn't go very far.

30:45

Because despite these

improvements and tweaks

30:52

of these neural network, things

more or less just stalled.

30:57

They collected a big data

set of digits and letters.

31:00

And digits and letters

kind of was quasi-solved

31:03

in terms of recognition.

31:05

But if you put the

system through the kind

31:08

of digital photos that the

neuroscientists were using

31:11

to recognize cats and dogs

and microwaves and chairs

31:14

and flowers, it

just didn't work.

31:17

And a huge part of this

problem is the lack of data.

31:22

And lack of data is not

just an inconvenience.

31:27

It's actually a

mathematical problem

31:29

because these algorithms are

high capacity algorithms that

31:36

actually need to be

driven by lots of data

31:39

in order to learn to generalize.

31:42

And there is some deep

mathematical principles

31:45

behind these rules of

generalization and model

31:48

overfitting.

31:49

And data was

underappreciated, was

31:52

overlooked because

most people are just

31:54

looking at these architectures.

31:56

They did not

realize that data is

31:59

part of the first class

citizen for machine

32:02

learning and deep learning.

32:03

So this is part of the work

that my students and I did

32:08

in the early 2000s, that we

recognize this importance

32:14

of data.

32:15

We hypothesized that the

whole field was actually

32:21

missing this-- underappreciating

the importance of data.

32:24

So we went about and

collected a huge data

32:27

set called ImageNet that

has 15 million images

32:30

after cleaning a billion images.

32:32

And these 15 million images were

sorted across 22,000 categories

32:38

of objects.

32:39

We actually studied a lot of

the cognitive and psychology

32:43

literature to appreciate

that 22,000 images were--

32:51

sorry, 22,000 categories

were roughly in the order

32:54

of the number of categories

that humans learned to recognize

32:58

in the early years

of their life.

33:00

And then we open

sourced this data

33:02

set and created an ImageNet

challenge called the Large Scale

33:05

Visual Recognition Challenge.

33:07

We curated a subset of ImageNet

of a million images or a million

33:12

plus images and 1,000

object classes and then ran

33:16

an international object

recognition challenge for many

33:21

years.

33:22

And the goal is that we ask

researchers to participate.

33:26

And their goal is to

create algorithms.

33:29

It doesn't matter which

kind of algorithms.

33:31

And we will test you on your

algorithm's ability to recognize

33:35

photos and see if you can call

out these 1,000 object classes

33:40

as correctly as possible.

33:42

And here are the errors.

33:45

The first year we ran

this competition,

33:53

the best-performing algorithm's

error was nearly 30%.

33:57

And it's really pretty abysmal

because humans can perform

34:00

under like, say, 3% error.

34:03

And then 2011, it

wasn't that exciting.

34:07

But something happened in 2012.

34:09

That was the most exciting year.

34:12

That year, Geoff Hinton

and his students

34:16

participated in

this challenge using

34:18

convolutional neural network.

34:20

And they reduced the

error almost by half.

34:23

And it truly showed the power

of deep learning algorithms.

34:29

And so the participating

algorithm in 2012 ImageNet

34:34

challenge was called AlexNet.

34:36

And the funny thing is,

if you look at AlexNet,

34:42

it's not that different from

Fukushima's neocognitron

34:47

32 years earlier.

34:49

But two major things

happened between these two.

34:54

One is that

backpropagation happened.

34:57

It's a principled,

mathematically rigorous learning

35:01

rule so that you don't

have to ever use hand

35:04

to tune parameters.

35:06

And that was a major

breakthrough theoretically.

35:09

Another breakthrough was data.

35:14

The recognition of data and the

understanding of data driving

35:19

these high capacity models,

which eventually will have

35:23

trillion parameters-- but

at that time was millions

35:26

of parameters-- was critical for

setting off the deep learning

35:34

for this to work.

35:36

And really, many people

consider the year of 2012

35:42

and the AlexNet algorithm

that won the ImageNet

35:46

the challenge the historical

moment of the birth

35:51

or rebirth of modern AI or

the birth of deep learning

35:54

revolution.

35:55

And of course, the reason

many of you are here

35:59

is since then, we are in the

era of deep learning explosion.

36:04

If you look at computer vision,

its main annual research

36:10

conference, called CVPR--

36:13

the number of papers

have exploded.

36:15

And our arXiv

paper has exploded.

36:18

And many new

algorithms since then

36:22

have been invented to

participate in the ImageNet

36:27

challenge.

36:28

In the following

years, we're going

36:29

to study some of

these algorithms.

36:31

But the point is

that some of these

36:34

algorithms beyond AlexNet

have had a profound impact

36:39

in the progress of the

field of computer vision

36:43

and into the applications

of computer vision.

36:49

So a lot of things

have happened.

36:52

We're going to

cover some of these.

36:54

Not only the field

of computer vision

36:57

made major progress

in creating algorithms

37:01

to recognize everyday objects like

cats and dogs and chairs--

37:06

we also quickly, right

after ImageNet challenge,

37:10

the 2012 moment,

we've got algorithms

37:14

that can recognize much

more complicated images,

37:22

can retrieve images, or can

do multiple object detections,

37:27

can do image segmentation.

37:30

These are all different

tasks in visual recognition

37:34

that you'll find

yourself getting

37:36

familiar with

throughout this course

37:38

because vision is not just

calling out cats and dogs.

37:42

There is so much in the nuanced

ability of visual recognition.

37:48

And of course, vision is

not just static images.

37:52

So there are work in video

classification, human activity

37:57

recognition.

37:58

I'm showing you this overview.

38:00

You will learn some of these.

38:04

You don't have to understand

exactly what's going on here.

38:08

But I want you to appreciate

the variety of vision tasks.

38:14

Medical imaging, those of you

who come from a medical field,

38:20

whether it's radiology

or pathology or even

38:24

other aspects of medicine,

is deeply visual.

38:28

And this has a profound impact.

38:31

Scientific discovery--

even the seminal picture

38:37

you probably remember of the

first photograph of a black hole

38:41

uses a lot of computer vision

and computational photography

38:46

techniques.

38:47

Of course, applications in

sustainability and environment

38:52

is also computer vision

contributed a lot of that.

38:58

And we also have made

a lot of progress

39:02

in image captioning right after

the image-- that 2012 moment.

39:07

This is actually work by

Andrej Karpathy, where he was

39:09

my student, his thesis work.

39:13

Then we also worked on

relationship understanding.

39:19

So not only visual

intelligence is

39:22

about seeing what's

on the pixel,

39:24

you can also see

what's beyond pixels,

39:26

including relationships of

objects and also style transfer.

39:33

A lot of this work,

you will-- actually,

39:35

Justin Johnson, who will come

to guest lecture this course,

39:39

will tell you all about his

seminal work in style transfer.

39:45

And of course, in

generative AI eras,

39:48

we get these really incredible

results like face generation.

39:53

And this is the very early days

of image generation of Dall-E. I

39:59

think this is the early Dall-E.

Of course, now, Midjourney

40:03

and everything has gone beyond

these avocado and peach chairs.

40:08

But really, we are squarely in

the most exciting modern era

40:14

of AI explosion.

40:20

The three converging forces

of computation, algorithms,

40:25

and data have taken

this field just

40:29

to a whole different

level, where we're now

40:32

totally out of AI winter.

40:36

I would say we're in an

AI global warming period.

40:40

And I don't see any

of this slowing down

40:46

for both good and bad reasons.

40:48

And also, just a word, because

we are in the Silicon Valley,

40:53

we're in the very building

of Huang building and NVIDIA

40:58

lecture hall-- so we cannot

ignore also the progress

41:02

of hardware and

what that played.

41:05

So here is just the FLOP per

dollar graph for NVIDIA's GPUs.

41:14

And before 2020, the

progress was steady.

41:19

But as soon as deep

learning started

41:22

to drive these

GPUs and chips, you

41:27

can just see the GFLOPS have

just completely taken off.

41:33

And by any measure, we are

in this accelerated curve

41:40

of lots of compute as

well as lots of AI.

41:45

And these are just

different graphs

41:47

showing you conference

attendees, startups,

41:50

and enterprise applications

in AI all across

41:54

not just computer vision.

41:55

But also, NLP and others

have just exploded.

42:02

So quickly, last but not the

least, it's been exciting.

42:06

There has been a

lot of successes.

42:08

But there is still a lot to

be done in computer vision.

42:11

So this problem is still

not totally solved.

42:14

And with great tools comes with

great consequences as well.

42:19

So computer vision

can do a lot of good.

42:24

But it also can do harm.

42:26

For example, human bias--

42:28

every single AI algorithm

today, the large ones,

42:32

are driven by data.

42:33

And data is an artifact

of human activities

42:38

on Earth and in history.

42:40

And a lot of the

data carry our bias.

42:43

And this gets carried

in AI systems.

42:47

We have seen a lot of face

recognition algorithms having

42:50

the same kind of bias

that humans have.

42:52

And we do have to

really recognize that.

42:55

We can also use AI to impact

human lives, some for the good.

43:01

Think about medical imaging.

43:02

But some are questionable.

43:05

What if AI is solely

behind deciding your job

43:09

or deciding your

financial loans?

43:11

So again, is it totally bad?

43:15

Is it totally good?

43:17

These are very

complicated issues.

43:19

This is also why I always get so

excited when students from HMS

43:23

or law school or education

school or business school

43:26

attend my class

because not all AI

43:29

issues are engineering issues.

43:31

We have a lot of human factors

and societal issues to solve.

43:36

I'm also particularly excited

by AI's medicine and health care

43:40

use.

43:41

This is something

really dear to my heart.

43:43

Professor Adeli

and Zane, who are

43:46

also co-instructors of

this course, we three of us

43:49

work on AI for aging

population as well as

43:53

patients and to try to use

computer vision to deliver care

43:59

to people.

44:00

So this is a good use.

44:01

And also, even in

terms of technology,

44:04

human vision is remarkable.

44:07

I want you to come out

of not only today's class

44:10

but also this entire

course to appreciate,

44:14

despite how much

computer vision can do,

44:16

there's just so much more

nuance, subtlety, richness,

44:22

complexity, and also

emotion in human vision.

44:26

Look at these kids

studying whatever

44:29

that their curiosity lead them

or the humor in this image.

44:33

There's still a lot more that

computer vision cannot do.

44:36

So I hope that

continues to entice

44:38

you to study computer vision.

44:40

At this point, I'm going to give

the podium to Professor Adeli

44:45

to go over the

rest of the class.

44:48

Thank you.

44:49

[APPLAUSE]

44:50

Awesome.

44:51

Thank you, Fei-Fei.

44:55

Great start to the quarter.

44:57

And I hope my microphone

is working right now.

45:00

OK, good.

45:01

I'm seeing some

nodding of heads.

45:05

So very excited to

be here with you all.

45:13

And I'm hoping that

you will have a fun

45:18

and challenging course with an

amazing list of co-instructors

45:23

that we have and great TAs.

45:26

So in this class, we

are going to cover

45:31

a wide variety of topics

around computer vision and use

45:34

of deep learning in

this space, categorized

45:37

into four different topics.

45:41

We will start with

deep learning basics.

45:45

And let's start actually

with a simple question of,

45:48

what is computer vision really?

45:52

So at its core, it's

about enabling machines

45:57

to see and understand images.

46:00

And basically, this is the most

fundamental task in this space--

46:09

in this space is

image classification.

46:13

You give the model an

image, say, of a cat.

46:17

And the model should

output a label cat.

46:21

And that's it.

46:23

But this deceptively simple

task is the foundation

46:29

for much of more

complex applications,

46:32

from self-driving to

medical diagnosis and so on.

46:36

So how do we teach a

machine to do this?

46:40

One of the simplest approaches

is to use linear classification,

46:44

as you can see in this slide.

46:48

So imagine each of the

images in our data set

46:53

is shown with a

dot in that space.

46:57

And each axis shows

some sort of feature

47:02

which was derived from

the image itself.

47:05

Here, we are showing a

2D space for simplicity.

47:09

But the task of a

linear classifier

47:12

is to find the hyperplane

or the linear function

47:17

that separates these

two, say, cats from dogs.

47:23

But we all know that

these linear models often

47:26

go just only so far.

47:29

They struggle when the data

isn't cleanly separable

47:32

with a straight line.
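
As a rough illustration of the linear classifier described here, the sketch below fits a separating line to a handful of 2D points using the classic perceptron update. This is an assumption made for illustration, not the method used in the course, and the points and labels are invented toy data.

```python
def predict(w, b, x):
    """Return +1 (say, 'cat') or -1 (say, 'dog') from the sign of w.x + b."""
    score = w[0] * x[0] + w[1] * x[1] + b
    return 1 if score >= 0 else -1

def train_perceptron(points, labels, epochs=20, lr=1.0):
    """Nudge the line toward every misclassified point until none remain."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in zip(points, labels):
            if predict(w, b, x) != y:
                w[0] += lr * y * x[0]
                w[1] += lr * y * x[1]
                b += lr * y
    return w, b

# Invented 2D features: each point stands for one image.
points = [(2.0, 3.0), (3.0, 3.5), (2.5, 4.0),
          (-1.0, -2.0), (-2.0, -1.5), (-1.5, -3.0)]
labels = [1, 1, 1, -1, -1, -1]

w, b = train_perceptron(points, labels)
# On this toy data the loop settles at w == [1.0, 2.0], b == -1.0.
```

This only converges because the toy data is linearly separable; on data that a straight line cannot split, the same loop never stops making mistakes.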

47:33

So the question is, what's next?

47:36

We'll get into the topics of how

to model more complex patterns.

47:44

And if we do so, we

often face challenges

47:49

of overfitting and

underfitting, which

47:54

are the topics we will cover in

the early lectures of the class.

47:59

And to strike the

right balance, we

48:05

use techniques

like regularization

48:08

to control model complexity and

optimization to find the best

48:14

fit parameters.
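
A minimal sketch of how regularization and optimization interact, assuming a one-parameter model y ~ w * x, a mean squared error loss, and an L2 penalty lam * w^2. The function name, data, and hyperparameters are illustrative, not taken from the lecture.

```python
def fit_ridge_1d(xs, ys, lam, lr=0.05, steps=2000):
    """Gradient descent on mean squared error plus lam * w^2 for y ~ w * x."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the data-fitting term.
        grad_data = sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        # Gradient of the L2 penalty, which pulls w toward zero.
        grad_reg = 2.0 * lam * w
        w -= lr * (grad_data + grad_reg)
    return w

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

w_plain = fit_ridge_1d(xs, ys, lam=0.0)  # converges to about 2.0
w_reg = fit_ridge_1d(xs, ys, lam=1.0)    # converges to about 28/17, roughly 1.65
```

The penalty shrinks the fitted weight: the optimizer still finds the best parameters, but "best" now trades data fit against model complexity.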

48:16

So these are the nuts and bolts

of deep learning and creating

48:21

these models, training models,

that not only fits the data

48:26

but also generalizes to

unseen and new data as well.

48:31

And now comes the fun part--

48:33

neural networks.

48:34

We've been talking

about them quite a lot.

48:38

And what neural networks do,

unlike the linear classifiers,

48:43

they stack multiple

layers of operations

48:47

to model non-linear

functions to be

48:54

able to either classify, to

solve the same problem of image

48:59

classification, and so on.
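
To illustrate why stacking layers helps, here is a small sketch of a two-layer network with a ReLU non-linearity that computes XOR, a function no single linear classifier can represent. The weights are hand-picked for illustration rather than learned, and the helper names are assumptions.

```python
def relu(v):
    return [max(0.0, a) for a in v]

def linear(W, b, x):
    """Compute W @ x + b for a list-of-rows weight matrix."""
    return [sum(wij * xj for wij, xj in zip(row, x)) + bi
            for row, bi in zip(W, b)]

# Hand-picked (not learned) weights.
W1 = [[1.0, 1.0], [1.0, 1.0]]
b1 = [0.0, -1.0]
W2 = [[1.0, -2.0]]
b2 = [0.0]

def tiny_net(x):
    hidden = relu(linear(W1, b1, x))    # layer 1: linear map, then non-linearity
    return linear(W2, b2, hidden)[0]    # layer 2: linear read-out

outputs = [tiny_net(x) for x in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0])]
# outputs == [0.0, 1.0, 1.0, 0.0], i.e. XOR of the two inputs
```

Without the ReLU between the two layers, the whole network would collapse back into a single linear function.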

49:04

These are the models powering

everything from Google Photos.

49:09

And now, everybody's familiar

with ChatGPT, ChatGPT's vision

49:13

models, and so on.

49:15

In this course, we will go deep

into the details of how they

49:24

work, how they are trained.

49:26

And we will be looking into

debugging and improving them.

49:31

After looking at the

deep learning basics,

49:35

we will cover the topics of

perceiving and understanding

49:39

the visual world, which

is a complex process that

49:44

involves interpreting a vast

array of visual information.

49:49

And to do so, we

often first define

49:52

tasks that refer to specific

challenges or problems.

49:56

We aim to solve--

49:59

some of the examples are object

detection, scene understanding,

50:02

motion detection, and so on.

50:03

And to solve these tasks, we

use different models, which

50:10

are computational

and theoretical

50:13

frameworks we develop

to mimic or explain

50:17

how our visual system

accomplishes these tasks.

50:22

One of the examples of

these types of models

50:25

is neural networks.

50:30

So by aligning

models with tasks,

50:36

we can create systems

that can see and interpret

50:41

the world around us.

50:43

Speaking of tasks, let's

go back to the topic

50:48

of image classification,

predicting a single label

50:53

for an entire image.

50:56

But we know that real

world computer vision

50:59

is much richer than this.

51:02

And let's walk through

some of the tasks that

51:05

go beyond classification.

51:06

First, semantic segmentation,

where we are not just

51:13

labeling the object

or the entire image

51:17

as cat or tree or whatever.

51:19

Here, we are looking for

labels for every single pixel

51:25

in the image.

51:25

So every pixel is a

grass, cat, tree, or sky.

51:30

But we don't distinguish

between individual objects.

51:34

And next, we have

object detection,

51:38

where we now want to not

only say what is in the image

51:45

but also pinpoint the location.

51:47

And that's why we

create bounding boxes

51:49

around the objects and associate

them with specific labels.

51:54

And finally, we have

instance segmentation.

51:58

We'll go into instance

segmentation, which is

52:01

the most granular of them all.

52:04

It combines the ideas of

detection and segmentation

52:08

together.

52:09

And every object instance

gets its own mask.
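
The difference between the per-pixel labels of semantic segmentation and the per-object masks of instance segmentation can be sketched with plain data structures. In the sketch below, a tiny invented label map is split into instances using connected components. This is only a stand-in to show the output format: real instance segmentation models are learned, and unlike this heuristic they can separate objects that touch.

```python
def instance_masks(semantic, target):
    """Split pixels labeled `target` into 4-connected regions.

    Returns a grid of instance ids (0 = not the target class) and the
    number of instances found.
    """
    rows, cols = len(semantic), len(semantic[0])
    ids = [[0] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if semantic[r][c] != target or ids[r][c] != 0:
                continue
            next_id += 1
            ids[r][c] = next_id
            stack = [(r, c)]
            while stack:  # flood fill from this seed pixel
                y, x = stack.pop()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and semantic[ny][nx] == target
                            and ids[ny][nx] == 0):
                        ids[ny][nx] = next_id
                        stack.append((ny, nx))
    return ids, next_id

# Semantic segmentation output: one class label per pixel.
semantic = [
    ["sky", "sky", "sky",   "sky", "sky"],
    ["cat", "cat", "grass", "cat", "cat"],
    ["cat", "cat", "grass", "cat", "cat"],
]

ids, count = instance_masks(semantic, "cat")
# count == 2: the semantic map says "cat" for both regions,
# while the instance ids tell the two cats apart.
```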

52:13

So these tasks require much

deeper spatial understanding

52:20

of images.

52:21

And they push the models

to do more than just

52:23

recognizing categories.

52:27

The complexity doesn't

stop with static images.

52:30

Let's look at some

temporal dimensions.

52:33

So there's the task of

video classification,

52:36

as Fei-Fei talked about,

where we want to understand

52:40

what's happening in video.

52:42

Is there someone running,

jumping, or dancing?

52:47

There is the topic of

multimodal video understanding,

52:51

which is combining vision and

sound and other modalities.

52:56

For example, in this

example, the person

53:00

is playing a vibraphone

to really understand

53:04

what's happening here.

53:05

We have to create a

blend of visual features

53:08

and audio features to be able

to understand what's happening.

53:11

And finally, there is the

topic of visualization

53:14

and understanding that we will

be covering in this class, where

53:19

we want to interpret what's

being learned by the models

53:24

and see an attention frame

or attention map of what

53:31

the model is attending to to

do a correct classification

53:35

and so on.

53:36

And then we have

models beyond tasks.

53:39

We look into models.

53:41

And the very first topic--

let me introduce to you--

53:46

that we'll be covering is

Convolutional Neural Networks

53:50

or CNNs.

53:51

There are a number

of operations.

53:52

We will be going

over the details

53:55

in the class, starting from an

image, a number of convolutions,

53:59

sampling and fully

connected operations,

54:01

and, finally,

creating the output.
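
As a hedged sketch of the core operation in a CNN, the code below slides a small kernel over an image and sums the element-wise products at each position (strictly speaking a cross-correlation, with no padding and a stride of 1). The image, the kernel, and the function name are invented for illustration; real layers learn their kernels and add channels, padding, and strides.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` and sum the products at each position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        out.append(row)
    return out

# A dark region on the left, a bright region on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Horizontal difference filter: responds where brightness jumps left to right.
kernel = [[-1, 1]]

edges = conv2d(image, kernel)
# edges == [[0.0, 1.0, 0.0], [0.0, 1.0, 0.0], [0.0, 1.0, 0.0]]
```

The output is non-zero only in the column where the vertical edge sits, which is the sense in which a convolutional filter detects a local pattern wherever it appears.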

54:05

And beyond convolutional

neural networks,

54:08

we will study recurrent neural

networks for sequential data

54:14

and even neural architectures,

such as transformers

54:19

and attention-based frameworks.

54:24

So next, we will be covering

some large-scale distributed

54:29

training topics, which is

kind of new this quarter.

54:34

I'm sure you've all heard

about large language models,

54:38

large vision models, and so on.

54:40

And we will be

briefly discussing

54:44

how these models are

actually trained.

54:47

We know that data and data

sets are expanding.

54:51

And models are becoming

larger and larger.

54:56

And in order to

train such models,

54:59

there are some strategies--

55:02

for example, data

parallelization,

55:04

model parallelization-- that

we will cover in this class.
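
A minimal single-process simulation of the data-parallel idea, assuming equal-sized shards and a simple squared-error model: each "worker" computes a gradient on its shard of the batch, and the averaged result matches the full-batch gradient. The names and numbers are hypothetical, and no real distributed framework is involved.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the model y ~ w * x."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n

def data_parallel_grad(w, xs, ys, num_workers):
    """Split the batch into equal shards, one per simulated worker.

    Assumes len(xs) is divisible by num_workers.
    """
    shard = len(xs) // num_workers
    grads = []
    for k in range(num_workers):
        lo, hi = k * shard, (k + 1) * shard
        grads.append(grad_mse(w, xs[lo:hi], ys[lo:hi]))  # local gradient
    # Stand-in for the synchronization step (for example, an all-reduce).
    return sum(grads) / num_workers

xs = [1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0]

full = grad_mse(0.5, xs, ys)
parallel = data_parallel_grad(0.5, xs, ys, num_workers=2)
# Both equal -17.5 on this toy batch.
```

The averaging step is where synchronization between workers comes in: every worker must receive the same averaged gradient before any of them updates its copy of the model.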

55:07

But beyond that, there

will be so many challenges,

55:11

such as synchronization between

these models and workers

55:15

and so on, as well as

several other aspects

55:20

that we'll be covering in one

of the lectures this quarter.

55:25

And we will go also over some

of the trends for training

55:31

these large models.

55:33

After completing this

topic, what we will do

55:36

next is looking into generative

and interactive visual

55:44

intelligence, where

we will first start

55:48

with self-supervised learning.

55:52

Self-supervised learning is

a branch of machine learning

55:55

in which models learn to

understand and represent data

56:00

by getting some training

signals from the data itself.
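
One way to picture "training signals from the data itself" is a pretext task in which the label is manufactured from the input. The sketch below uses rotation prediction as an example; the choice of pretext task and the helper names are assumptions for illustration, not something stated in the lecture.

```python
def rotate90(img):
    """Rotate a 2D list 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def make_rotation_task(img):
    """Return (rotated_image, label) pairs; label k means k quarter turns.

    No human annotation is needed: the label comes from the transformation
    that we applied ourselves.
    """
    examples = []
    current = img
    for k in range(4):
        examples.append((current, k))
        current = rotate90(current)
    return examples

img = [[1, 2],
       [3, 4]]

examples = make_rotation_task(img)
# examples[1] == ([[3, 1], [4, 2]], 1)
```

A model trained to predict `k` from the rotated image has to pick up on object shape and orientation, and those learned features can then be reused for other tasks.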

56:04

We will cover this topic.

56:06

It's one of the approaches

that has enabled training

56:10

of large scale models using

vast amounts of data that do not

56:15

require labels, unlabeled data.

56:18

And they have played a key

role in recent breakthroughs

56:23

in computer vision in general.

56:26

And we will talk a little

bit about generative models.

56:30

They go beyond recognition.

56:33

They actually generate.

56:35

This is an example of the

content of a Stanford campus

56:39

photo, which is reimagined in

the style of Van Gogh's Starry

56:44

Night.

56:45

This is known as style

transfer, a classic application

56:49

of neural generative techniques.

56:54

Generative models can

now translate language

56:58

into images given a prompt.

57:03

A model like Dall-E, Dall-E

2 generates an entirely novel

57:07

image.

57:09

This showcases how

generative vision models

57:12

blend understanding,

creativity, and control

57:16

in their generations.

57:19

And you've probably

heard recently

57:22

about the topic of

diffusion models in general.

57:26

That's another thing that we'll

be covering in this quarter.

57:33

They basically learn to

reverse a gradual noising

57:37

process to generate images.
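
The "gradual noising process" can be sketched as repeatedly mixing the signal with a little Gaussian noise. The code below shows only this forward direction, under an assumed constant noise schedule. The learned reverse (denoising) model, which is the hard part, is deliberately left out, and all names and values are illustrative.

```python
import math
import random

def noising_step(x, beta, rng):
    """One forward step: shrink the signal slightly and add Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * v + spread * rng.gauss(0.0, 1.0) for v in x]

def forward_process(x0, betas, seed=0):
    """Return the whole trajectory x_0, x_1, ..., x_T of noised samples."""
    rng = random.Random(seed)
    trajectory = [list(x0)]
    for beta in betas:
        trajectory.append(noising_step(trajectory[-1], beta, rng))
    return trajectory

x0 = [1.0, -1.0, 0.5, 0.0]   # stand-in for the pixel values of an image
betas = [0.1] * 50           # assumed constant noise schedule

trajectory = forward_process(x0, betas)
```

After 50 steps with beta = 0.1 the original signal is scaled by 0.9^25, about 7 percent, so the last sample is close to pure noise. A diffusion model is trained to undo these steps one at a time, starting from noise.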

57:40

And interestingly,

in assignment 3,

57:43

you will actually be

implementing a generative model

57:46

that generates emojis

from text inputs,

57:53

from prompts-- for example, a

face with a cowboy hat, which

57:57

is denoised from pure noise.

58:01

Vision language models are

the next topic of interest

58:06

we will be covering.

58:08

They connect text and images in

a shared representation space.

58:16

And given a caption

or image, the model

58:19

retrieves or generates

its corresponding pair,

58:24

as you can see.
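
Retrieval in a shared representation space can be sketched as nearest-neighbor search under cosine similarity. The embeddings below are invented three-dimensional vectors, not the output of any real model, and the file names and captions are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up embeddings; a real system would get these from trained
# image and text encoders that map into the same space.
image_embeddings = {
    "cat.jpg": [0.9, 0.1, 0.0],
    "dog.jpg": [0.1, 0.9, 0.0],
    "car.jpg": [0.0, 0.1, 0.9],
}
caption_embeddings = {
    "a photo of a cat": [1.0, 0.0, 0.1],
    "a photo of a car": [0.0, 0.0, 1.0],
}

def retrieve(caption):
    """Return the image whose embedding is closest to the caption's."""
    query = caption_embeddings[caption]
    return max(image_embeddings,
               key=lambda name: cosine(query, image_embeddings[name]))

best = retrieve("a photo of a cat")   # "cat.jpg"
```

Because text and images live in one space, the same similarity function works in both directions: a caption can retrieve an image, and an image can retrieve a caption.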

58:25

So there are a lot of

advances in this area.

58:29

We'll be covering some

of the key examples.

58:32

Again, this is a key task

for cross-modal retrieval

58:37

or understanding and visual

question answering and so on.

58:41

So we'll get to

that in the class, too.

58:44

Moving beyond 2D, models can

now reconstruct and generate 3D

58:52

representations from images.

58:55

And here, you can see some

voxel-based reconstructions,

59:00

shape completion, and even 3D

object detection from single

59:06

view images.

59:09

So 3D vision enables

more spatially grounded

59:14

understanding, which is

crucial for robotics and AR/VR

59:19

applications.

59:20

And finally, vision

empowers embodied agents

59:26

that act in the physical world.

59:30

So these models often

must perceive, plan,

59:35

and execute whether it's

cleaning up a messy room

59:41

or generalizing from

human demonstrations.

59:44

So with all of these, we will

be covering different topics

59:50

around generative and

interactive visual intelligence.

59:53

And finally, we will cover some

human-centered applications

1:00:00

and implications, as Fei-Fei

very nicely explained.

1:00:05

So computer vision

1:00:08

and, generally, AI

have been having a lot

1:00:12

of impact in the past years.

1:00:16

And it's very

important to understand

1:00:18

the human-centered

aspects and applications.

1:00:21

And some of these

impacts are reflected

1:00:24

by these awards that are going

to researchers in this space.

1:00:32

It was first recognized by

the Turing Award 2018, which

1:00:38

is the most prestigious

technical award given

1:00:41

to major contributions

of lasting importance

1:00:45

for computing.

1:00:47

Geoffrey Hinton, Yoshua

Bengio, and Yann LeCun

1:00:50

received the award for

conceptual and engineering

1:00:54

breakthroughs

that have made

1:00:57

deep neural networks a critical

component of computing.

1:01:01

Beyond that, last year,

in 2024, Geoffrey Hinton

1:01:06

was jointly awarded the

Nobel Prize in physics

1:01:11

alongside John Hopfield for

their foundational contributions

1:01:14

to neural networks.

1:01:17

And finally, I want to very

briefly mention the learning

1:01:21

objectives for this class will

be formalizing computer vision

1:01:27

applications into tasks.

1:01:30

As you can see some

of the details here,

1:01:33

we want to develop and

train vision models, models

1:01:38

that operate on images

and visual data--

1:01:41

images, videos, and so on--

1:01:43

gain an understanding

of where the field is

1:01:46

and where it is headed.

1:01:48

That's why we have some new

topics also covered specifically

1:01:53

in this year.

1:01:56

So the four topics that

I mentioned earlier,

1:02:01

we will be going over the basics

in the very first few weeks.

1:02:06

Bear with us because these

are important topics.

1:02:09

And you need to understand

the details first,

1:02:12

how to build the

models from scratch.

1:02:15

And then we'll get to more

interesting, exciting topics

1:02:19

of the day--

1:02:20

computer vision.

1:02:21

And finally, we'll have one big

lecture on human-centered AI

1:02:27

and computer vision.

1:02:30

I want to just leave

you with what we

1:02:33

will be covering next session.

1:02:34

That's going to be

image classification

1:02:38

and linear classifiers,

which will get us started

1:02:43

with the world of CS231n.

1:02:45

Thank you.

— end of transcript —

Advertisement

More from Stanford Online

1:11:40

1:11:40

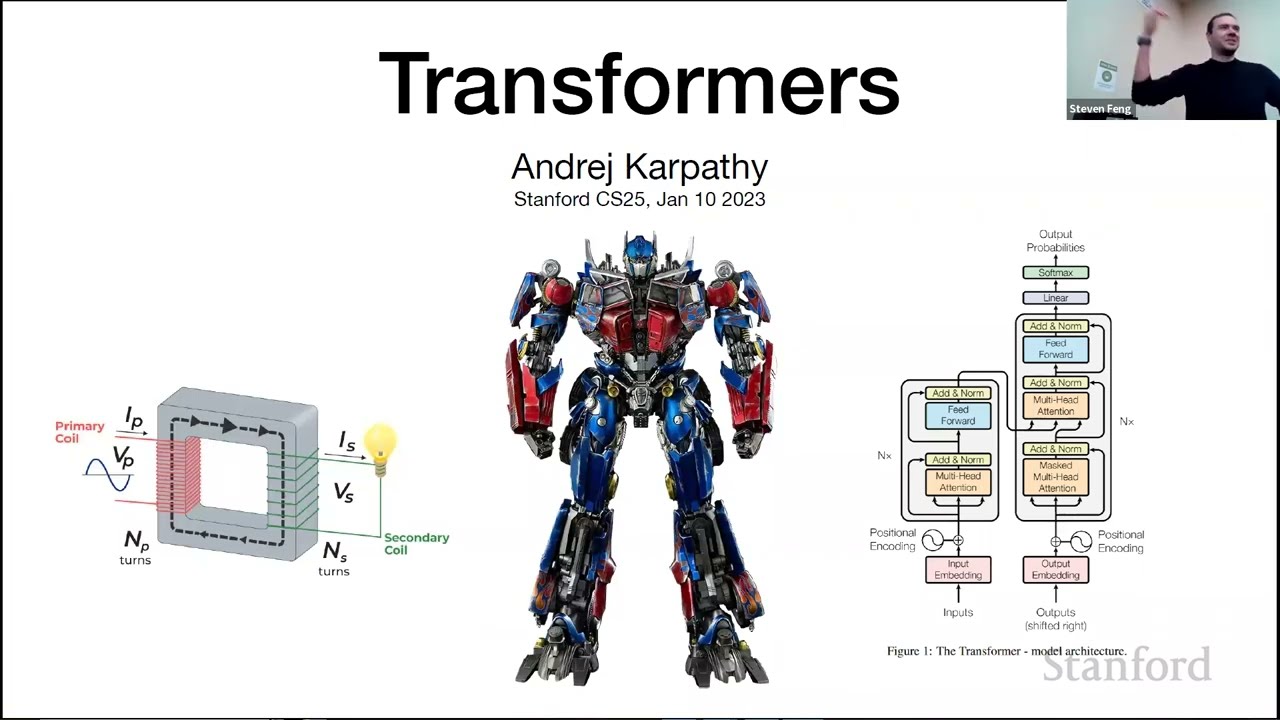

Stanford CS25: V2 I Introduction to Transformers w/ Andrej Karpathy

Stanford Online

1:49:54

1:49:54

Stanford CS230 | Autumn 2025 | Lecture 8: Agents, Prompts, and RAG

Stanford Online

1:45:08

1:45:08

Stanford CS230 | Autumn 2025 | Lecture 9: Career Advice in AI

Stanford Online

1:44:31

1:44:31

Stanford CS229 I Machine Learning I Building Large Language Models (LLMs)

Stanford Online