1:44:31

Stanford CS229 I Machine Learning I Building Large Language Models (LLMs)

Stanford Online

·

May 10, 2026

Transcript

0:05

So, let's get started.

0:07

So I'll be talking about

building LLMs today.

0:10

So I think a lot of you have

heard of LLMs before, but just

0:14

as a quick recap.

0:16

LLMs standing for

large language models

0:18

are basically all the

chat bots that you've

0:21

been hearing about recently.

0:22

So, ChatGPT, from OpenAI,

Claude, from Anthropic, Gemini

0:28

and Llama, and other

types of models like this.

0:31

And today we'll be talking

about how do they actually work.

0:34

So it's going to be an overview

because it's only one lecture

0:36

and it's hard to

compress everything.

0:38

But hopefully, I'll

touch a little bit

0:39

about all the components

that are needed

0:41

to train some of these LLMs.

0:43

Also, if you have questions,

please interrupt me

0:46

and ask.

0:48

Most likely other people in

the room or on Zoom

0:52

have the same questions.

0:53

So, please ask.

0:56

Great.

0:56

So what matters

when training LLMs.

1:00

So there are a few key

components that matter.

1:02

One is the architecture.

1:04

So as you probably all know,

LLMs are neural networks,

1:07

and when you think

about neural networks,

1:09

you have to think about what

architecture you're using.

1:11

And another component,

which is really important

1:13

is the training loss and

the training algorithm.

1:16

So, how you actually train

these models. Then it's data.

1:20

So, what do you train

these models on.

1:24

The evaluation,

which is how do you

1:26

know whether you're

actually making progress

1:28

towards the goal of LLMs and

then, the system component.

1:33

So that is like

how do you actually

1:35

make these models run on

modern hardware, which

1:38

is really important because

these models are really large.

1:41

So now more than ever,

systems are actually

1:43

really an important

topic for LLMs.

1:47

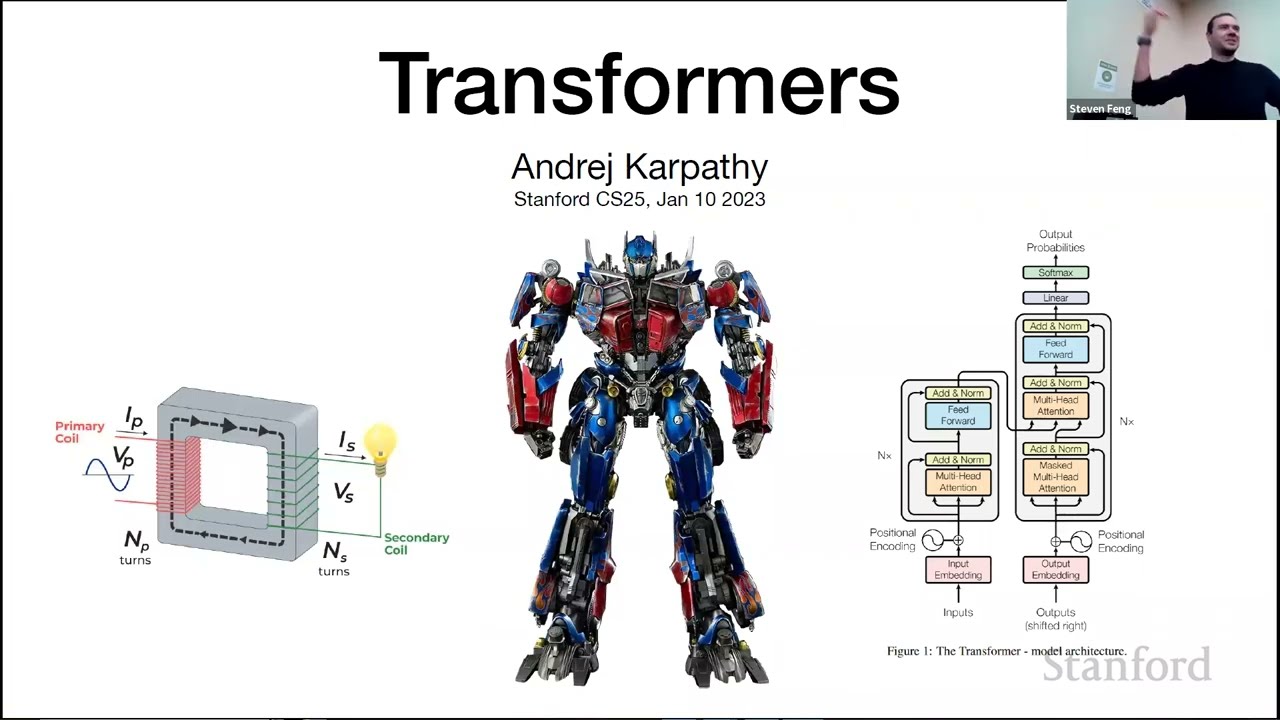

So those are the five components. You

probably all know that LLMs,

1:52

and if you don't

know, LLMs are all

1:53

based on transformers

or at least some version

1:56

of transformers.

1:57

I'm actually not going to talk

about the architecture today.

2:00

One, because I gave a lecture

on transformers a few weeks ago

2:06

and two, because you can find

so much information online

2:09

on transformers.

2:11

There's much less information

about the other four topics.

2:14

So, I really want

to talk about those.

2:17

And another thing to say

is that most of academia

2:20

actually focuses on

architecture and training

2:22

algorithms and

losses. As academics,

2:25

and I've done that for

a big part of my career,

2:28

we simply like thinking

about this. We make

2:32

new architectures,

new models, and it

2:35

seems like it's very important.

2:37

But in reality, honestly, what

matters in practice is mostly

2:39

the three other topics.

2:41

So, data, evaluation and

systems, which is what most

2:45

of industry actually focuses on.

2:48

So, that's also

one of the reasons

2:49

why I don't want to talk too

much about the architecture,

2:52

because really the rest

is super important.

2:55

Great.

2:55

So, overview of

the lecture, I'll

2:57

be talking about pretraining.

2:58

So, pretraining, you

probably heard that word.

3:00

This is the general word.

3:02

This is kind of the classical

language modeling paradigm where

3:06

you basically train your

language model to essentially

3:08

model all of the internet.

3:10

And then, there's

post-training,

3:11

which is a more

recent paradigm which

3:13

is taking these

large language models

3:15

and making them

essentially AI assistants.

3:18

So, this is more of a

recent trend since ChatGPT.

3:22

So, if you ever heard

of GPT3 or GPT2,

3:25

that's really pretraining land.

3:27

If you heard of ChatGPT,

which you probably have,

3:29

this is really

post-training land,

3:31

so I'll be talking about both,

but I'll start with pretraining

3:34

and specifically

I'll talk about what

3:37

is the task of pretraining LLMs

and what is the loss that people

3:41

actually use.

3:43

So, language modeling,

this is a quick recap.

3:47

Language models at a

high level are simply

3:49

models of a probability

distribution over sequences

3:52

of tokens or of words.

3:53

So it's basically

some model of $p(x_1, \ldots, x_L)$,

3:57

where $x_1$

is basically word

3:59

one and $x_L$ is the last one in

the sequence or in the sentence.

4:04

So, very concretely, if you

have a sentence like the mouse

4:07

ate the cheese, what

the language model gives

4:09

you is simply a probability

of this sentence being uttered

4:13

by a human or

being found online.

4:17

So, if you have another sentence

like "The the mouse ate cheese."

4:21

Here, there's

grammatical mistakes.

4:23

So, the model should

have some

4:25

syntactic knowledge.

4:27

So, it should know that

this has less likelihood

4:30

of appearing online.

4:32

If you have another sentence

like the cheese ate the mouse,

4:36

then the model should

hopefully know about the fact

4:39

that usually cheese

doesn't eat mice.

4:42

So, there's some

semantic knowledge

4:43

and this is less likely

than the first sentence.

4:45

So, this is basically at a high

level what language models are.

4:50

One term that you probably have

been hearing a lot in the news

4:52

is generative models.

4:54

So, this is just something

that can generate.

4:56

Models that can

generate sentences

4:57

or can generate some data.

4:59

The reason why we say language

models are generative models

5:01

is that once you have a

model of a distribution,

5:04

you can simply sample

from this model.

5:06

And now we can generate data.

5:07

So we can generate sentences

using a language model.

5:12

So the type of models that

people are all currently using

5:15

are what we call

autoregressive language models.

5:18

And the key idea of

autoregressive language models

5:21

is that you take this

distribution over words

5:25

and you basically decompose

it into the distribution

5:29

of the first word, multiplied

by the distribution of,

5:32

or the likelihood of, the

second word

5:35

given the first

word, and multiply it

5:37

by P of the third word

given the first two words.

5:40

So, there's no

approximation here.

5:42

This is just the chain rule

of probability, which

5:44

hopefully you all know about.

5:46

Really no approximation.

5:47

This is just one way of

modeling a distribution.

5:50

So, slightly more

concisely, you can write it

5:52

as a product of P's of the next

word, given everything which

5:57

happened in the past.

5:58

So, of the context.

5:59

So, this is what we call

autoregressive language models.
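
Written out, the decomposition described above is the chain rule of probability. This is a sketch in the lecture's notation, assuming $x_1, \ldots, x_L$ are the tokens of the sequence and that the factor for $i = 1$ is simply $p(x_1)$:

$$
p(x_1, \ldots, x_L) = p(x_1)\, p(x_2 \mid x_1)\, p(x_3 \mid x_1, x_2) \cdots = \prod_{i=1}^{L} p(x_i \mid x_1, \ldots, x_{i-1})
$$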

6:02

Again, this is really

not the only way

6:05

of modeling distribution.

6:06

This is just one way.

6:07

It has some benefits

and some downsides.

6:10

One downside of

autoregressive language models

6:12

is that when you actually

sample from this autoregressive

6:15

language model,

you basically have

6:16

a for loop, which generates

the next word, then conditions

6:20

on that next word.

6:21

And then we generate

another word.

6:23

So, basically if you

have a longer sentence

6:24

that you want to generate, it

takes more time to generate it.

6:28

So, there are some downsides

of this current paradigm,

6:31

but that's what

we currently have.

6:33

So, I'm going to

talk about this one.

6:36

Great.

6:36

So, autoregressive

language models.

6:38

At a high level, the task of an

autoregressive language model

6:41

is simply predicting the

next word, as I just said.

6:44

So, if we have a sentence

like she likely prefers,

6:47

one potential, next

word might be dogs.

6:50

And the way we do it is

that we first tokenize.

6:54

So, you take these words or

subwords you tokenize them

6:58

and then you give an

ID for each token.

7:00

So here you have

one, two, three.

7:03

Then, you pass it

through this black box.

7:04

As I already said,

we're not going

7:06

to talk about the architecture.

7:07

You just pass it through,

pass it through a model,

7:10

and you then get a distribution,

a probability distribution

7:13

over the next word or

over the next token.

7:16

And then you sample

from this distribution,

7:20

you get a new token and

then you detokenize.

7:22

So, you get a new

ID, you detokenize

7:24

and that's how you basically

sample from a language model.
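
The steps above (tokenize, run the model, sample, detokenize) can be sketched as a short loop. This is only an illustration: the vocabulary, the whitespace tokenizer, and `toy_model` are made-up stand-ins for a real tokenizer and a real transformer.

```python
import random

# Hypothetical toy vocabulary: token ID -> token string.
VOCAB = {0: "<eos>", 1: "she", 2: "likely", 3: "prefers", 4: "dogs", 5: "cats"}
TOKEN_TO_ID = {tok: i for i, tok in VOCAB.items()}


def tokenize(text):
    """Map whitespace-separated words to token IDs (stand-in for a real tokenizer)."""
    return [TOKEN_TO_ID[word] for word in text.split()]


def detokenize(ids):
    """Map token IDs back to text."""
    return " ".join(VOCAB[i] for i in ids)


def toy_model(context_ids):
    """Stand-in for the black box: returns p(next token | context) as a list.

    A real LLM computes this with a neural network; here the rule is hard-coded.
    """
    probs = [0.0] * len(VOCAB)
    if context_ids and context_ids[-1] == TOKEN_TO_ID["prefers"]:
        probs[TOKEN_TO_ID["dogs"]] = 0.7
        probs[TOKEN_TO_ID["cats"]] = 0.3
    else:
        probs[TOKEN_TO_ID["<eos>"]] = 1.0
    return probs


def generate(prompt, max_new_tokens=5, seed=0):
    rng = random.Random(seed)
    ids = tokenize(prompt)
    for _ in range(max_new_tokens):  # one model call per generated token
        probs = toy_model(ids)
        next_id = rng.choices(range(len(VOCAB)), weights=probs, k=1)[0]
        if VOCAB[next_id] == "<eos>":
            break
        ids.append(next_id)  # condition on the newly sampled token
    return detokenize(ids)
```

Calling `generate("she likely prefers")` returns the prompt followed by either `dogs` or `cats`, depending on the sample. The loop also shows why longer outputs take longer: each new token needs another pass through the model.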

7:28

One thing which is

important to note

7:29

is that the last two

steps are actually

7:32

only needed during inference.

7:34

When you do training,

you just need

7:36

to predict the most likely

token and you can just

7:38

compare to the real token

which happened in practice,

7:41

and then, you basically

change the weights

7:43

of your model to increase

the probability of generating

7:46

that token.

7:49

Great.

7:50

So, autoregressive

neural language models.

7:52

So to be slightly

more specific, still,

7:54

without talking about

the architecture,

7:56

the first thing we do is

that we have all of these.

7:58

Sorry, yes.

7:59

On the previous slide.

8:01

Predicting the probability

of the next token,

8:03

does this mean that your

final output vector has

8:06

to be the same dimensionality

as the number of tokens

8:08

that you have?

8:09

Yes.

8:10

How do you deal with it

if you have more tokens,

8:13

adding more tokens

to your [INAUDIBLE]?

8:16

Yeah so we're going to

talk about tokenization

8:18

actually later so you will

get some sense of this.

8:21

You basically can't deal

with adding new tokens.

8:24

I'm kind of exaggerating.

8:25

There are methods for doing

it, but essentially people

8:28

don't do it.

8:29

So it's really

important to think about

8:32

how you tokenize your

text, and that's why

8:33

we'll talk about that later.

8:35

But it's a very

good point to note

8:36

is that you basically--

the vocabulary size, so

8:38

the number of tokens that

you have is essentially

8:40

the output of your

language model.

8:43

So it's actually pretty large.

8:46

So autoregressive

neural language models.

8:48

First thing you do is that you

take every word or every token.

8:51

You embed them so you get

some vector representation

8:56

for each of these tokens.

8:58

You pass them through some

neural network, as we said,

9:00

it's a transformer.

9:01

Then you get a representation

for all the words

9:04

in the context.

9:06

So it's basically

a representation

9:07

of the entire sentence.

9:09

You pass it through

a linear layer,

9:11

as you just said, to

basically map it to the number

9:15

so that the output--

the number of outputs

9:17

is the number of tokens.

9:19

You then pass it

through some softmax

9:21

and you basically get a

probability distribution

9:24

over the next words given

every word in the context.

9:30

And the loss that you

use is basically--

9:32

it's essentially a task of

classifying the next token.

9:35

So it's a very simple, kind

of, machine learning task.

9:37

So you use the

cross-entropy loss.

9:39

Where you basically look at the

actual target that happened,

9:44

which is the target

distribution, which

9:45

is a one hot encoding,

which in this case says,

9:49

I saw the real word

that happened is cat.

9:51

So that's a one hot

distribution over cat.

9:55

And here this is the actual--

9:57

do you see my mouse?

9:58

Oh, yeah.

9:58

This is the distribution

that you generated.

10:00

And basically you

do cross entropy,

10:01

which really just increases the

probability of generating cat

10:04

and decreases the

probability of generating

10:06

all the other tokens.

10:08

One thing to notice is

that, as you all know again,

10:11

this is just equivalent

to maximizing the text log

10:15

likelihood because

you can just rewrite

10:17

the max over the probability

of this autoregressive language

10:23

modeling task as just being

this minimum of I just

10:26

added the log here

and minus, which

10:29

is just the minimum of the loss,

which is the cross entropy loss.

10:31

So basically

minimizing the loss is

10:33

the same thing as maximizing

the likelihood of your text.
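
A small numeric check of that equivalence, as a sketch only: the three-token vocabulary and the logits are made up, and the functions are plain-Python stand-ins for what a deep learning library would provide. With a one-hot target, the cross entropy reduces to the negative log probability of the token that actually occurred.

```python
import math


def softmax(logits):
    """Turn raw scores (one per vocabulary entry) into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, one_hot_target):
    """Cross entropy between a one-hot target and the predicted distribution."""
    return -sum(t * math.log(p) for t, p in zip(one_hot_target, probs) if t > 0)


vocab = ["cat", "dog", "mouse"]  # hypothetical 3-token vocabulary
logits = [2.0, 0.5, -1.0]        # made-up outputs of the final linear layer
probs = softmax(logits)
target = [1.0, 0.0, 0.0]         # one-hot: the real next word was "cat"

loss = cross_entropy(probs, target)
neg_log_likelihood = -math.log(probs[vocab.index("cat")])
```

Here `loss` and `neg_log_likelihood` are the same number, so lowering the loss raises the probability assigned to `cat`.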

10:36

Any question?

10:37

Questions?

10:43

OK, tokenizer.

10:46

So this is one thing

that people usually

10:49

don't talk that much about.

10:50

Tokenizers are

extremely important.

10:53

So it's really important that

you understand at least what

10:56

they do at a high level.

10:57

So why do we need tokenizers

in the first place?

11:01

First, it's more

general than words.

11:02

So one simple thing

that you might think

11:04

is we're just going to take

every word that we will have.

11:07

You just say every word

is a token on its own.

11:11

But then what happens if

there's a typo in your word?

11:14

Then you might not have

any token associated

11:17

with this word with a typo.

11:20

And then you don't know

how to actually pass

11:21

this word with a typo into

a large language model.

11:24

So what do you do next?

11:25

And also, even if you think

about words, words is a very--

11:29

words are fine with

Latin-based languages.

11:32

But if you think about

a language like Thai,

11:34

you won't have a simple

way of tokenizing

11:36

by spaces because there are

no spaces between words.

11:39

So really, tokens are much

more general than words.

11:43

It's the first thing.

11:44

Second thing that

you might think

11:45

is that you might tokenize

every sentence, character

11:48

by character.

11:49

You might say A is one

token, B is another token.

11:52

That would actually work

and probably very well.

11:55

The issue is that then your

sequence becomes super long.

11:58

And as you probably

remember from the lecture

12:00

on transformers, the

complexity grows quadratically

12:05

with the length of sequences.

12:06

So you really don't want to

have a super-long sequence.

12:10

So tokenizers basically try to

deal with those two problems

12:14

and give common subsequences

a certain token.

12:19

And usually how you should be

thinking about it is that, on

12:22

average, every token

is around 3-4 letters.

12:27

And there are many

algorithms for tokenization.

12:30

I'll just talk about one of them

to give you a high level, which

12:32

is what we call Byte Pair

Encoding, which is actually

12:34

a pretty common one,

12:35

one of the two most

common tokenizers.

12:37

And the way that you

train a tokenizer

12:39

is that first you start with

a very large corpus of text.

12:42

And here, I'm really not talking

about training a large language

12:45

model yet, this is purely

for the tokenization step.

12:48

So this is my large corpus of

text with these five words.

12:52

And then you associate

every character

12:55

in this corpus of text

with a different token.

12:58

So here, I just split

it up so every character

13:00

is a different

token, and I just

13:03

color coded all of those tokens.

13:05

And then what you do is that

you go through your text,

13:08

and every time you see pairs

of tokens that are very common,

13:12

the most common pair of

tokens, you just merge them.

13:15

So here you see three

times the tokens t and o

13:19

next to each other.

13:20

So you're just going to

say this is a new token.

13:22

And then you continue,

you repeat that.

13:24

So now you have tok, tok

which happens three times.

13:28

Toke with an E that

happens 2 times and token,

13:33

which happens twice, and then

ex which also happens twice.

13:37

So this is the-- if you were to

train a tokenizer on this corpus

13:41

of text, which is

very small, that's

13:43

how you would finish

with a token--

13:45

with, like, a trained tokenizer.
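
The training procedure just described can be sketched in a few lines. This is a minimal illustration, not a production tokenizer: the five-word corpus is hypothetical (the words on the slide may differ), merges are only counted inside words, and ties are broken by whichever pair was seen first.

```python
from collections import Counter


def merge_pair(tokens, pair):
    """Replace every adjacent occurrence of `pair` in `tokens` with the merged token."""
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            merged.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    return merged


def train_bpe(words, num_merges):
    """Learn up to `num_merges` BPE merges from a list of words."""
    corpus = [list(word) for word in words]  # start with one token per character
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for tokens in corpus:
            pair_counts.update(zip(tokens, tokens[1:]))
        if not pair_counts:
            break
        # Most frequent adjacent pair; ties go to the pair encountered first.
        best_pair = max(pair_counts, key=pair_counts.get)
        merges.append(best_pair)
        corpus = [merge_pair(tokens, best_pair) for tokens in corpus]
    return merges, corpus


# Hypothetical corpus for illustration.
words = ["tokenizer", "tokenize", "tokens", "token", "text"]
merges, corpus = train_bpe(words, num_merges=4)
```

On this corpus the four merges build up `to`, `tok`, `toke`, and `token` in turn, which mirrors the progression in the example above.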

13:47

In reality, you do it on

a much larger corpus of text.

13:51

And this is the

real tokenizer of--

13:54

actually, I think this

is GPT3 or ChatGPT.

13:57

And here you see how it would

actually separate these words.

14:00

So basically you

see the same thing

14:01

as what we gave in

the previous example.

14:03

Token becomes its own token.

14:06

So tokenizer is

actually split up

14:08

into two tokens, token and -izer.

14:12

So yeah, that's all

about tokenizers.

14:15

Any questions on that?

14:16

Yeah.

14:16

How do you deal with

spaces, and how do you

14:18

deal with [INAUDIBLE].

14:19

Yeah so actually there's

a step before tokenizers,

14:23

which is what we call

pre-tokenizers, which

14:25

is exactly what you just said.

14:27

So this is mostly--

14:29

in theory, there's no reason to

deal with spaces and punctuation

14:33

separately.

14:34

You could just say every

space gets its own token,

14:37

every punctuation

gets its own token,

14:40

and you can just

do all the merging.

14:42

The problem is that-- so

there's an efficiency question.

14:45

Actually, training these

tokenizers takes a long time.

14:48

So you better-- because you have

to consider every pair of tokens.

14:51

So what you end up doing is

saying if there's a space,

14:54

this is very--

like pre-tokenizers

14:55

are very English specific.

14:57

You say if there's

a space, we're

14:58

not going to start looking

at the token that came before

15:01

and the token that

came afterwards.

15:03

So you're not merging

in between spaces.

15:06

But this is just like a

computational optimization.

15:10

You could theoretically

just deal with it

15:12

the same way as you deal

with any other character.
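
A rough sketch of what a pre-tokenizer does, assuming a simple English-style rule: split the text into chunks so that merges are only ever considered inside a chunk. The regular expression below is an illustrative assumption, not the pattern used by any particular model.

```python
import re


def pre_tokenize(text):
    """Split text into chunks; BPE merges are then only considered inside a chunk.

    Each chunk is an optional leading space followed by word characters,
    or a single punctuation mark, or a single whitespace character.
    """
    return re.findall(r" ?\w+|[^\w\s]|\s", text)


chunks = pre_tokenize("the mouse ate, fast")
```

Here `chunks` is `["the", " mouse", " ate", ",", " fast"]`: the space stays attached to the word that follows it, and no merge can span two words.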

15:15

And--

15:15

Yeah.

15:16

When you merge tokens, do you delete

the tokens that you merged away

15:19

or do you keep the smaller

tokens that were merged?

15:22

You actually keep

the smaller tokens.

15:25

I mean, in reality, it doesn't

matter much because usually

15:29

on a large corpus of text, you

will have actually everything.

15:32

But you usually

keep the small ones.

15:34

And the reason why

you want to do that

15:36

is because if-- in case there's,

as we said before, you have

15:38

some grammatical

mistakes or some typos,

15:41

you still want to

be able to represent

15:43

these words by character.

15:46

So, yeah.

15:47

Yes.

15:48

Are the tokens unique?

15:51

So I mean, say in this case

T-O-K-E-N is there only one

15:54

occurrence or could--

15:56

do you need to leave multiple

occurrence so they could have--

16:00

take on different

meanings or something?

16:02

Oh I see what you say.

16:03

No, it's every token

has its own unique ID.

16:08

So a usual-- this

is a great question.

16:11

For example, if you

think about a bank, which

16:13

could be bank for like

money or bank like water,

16:16

it will have the same token.

16:18

But the model will

learn, the transformer

16:19

will learn that based on the

words that are around it,

16:22

it should associate that--

16:24

I'm saying-- I'm being

very handwavy here,

16:26

but associate that with

a representation that

16:30

is either more like the bank

money side or the bank water

16:33

side.

16:34

But that's the transformer

that does that.

16:36

It's not the tokenizer.

16:38

Yes.

16:39

Yes.

16:39

So you mentioned

during tokenization,

16:41

keep the smaller tokens

you started with, right.

16:43

Like if you start with

a T you keep the T

16:45

and then you build

your tokenize out to

16:47

[INAUDIBLE] allow input token.

16:49

So let's say maybe you didn't

train on token, but in your data

16:53

you are trying to encode token.

16:54

So how does the tokenizer know

to encode it with token or to

16:58

[INAUDIBLE]?

16:59

Yeah.

16:59

Great question.

17:00

You basically when you--

so when you tokenize,

17:02

so that's after training

of the tokenizer

17:04

when you actually

apply the tokenizer

17:06

you basically always

choose the largest token

17:10

that you can apply.

17:11

So if you can do token,

you will never do T,

17:13

you will always do token.
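
The "largest token you can apply" rule can be sketched as a greedy longest-match encoder. This is a simplification under stated assumptions: the vocabulary is hypothetical, and real BPE implementations typically replay the learned merges in order rather than searching for the longest match, which can give different splits in some cases.

```python
def encode_longest_match(text, vocab):
    """Greedily split `text` into the longest tokens found in `vocab`.

    Assumes every single character of `text` is in `vocab`, which holds when
    the small character-level tokens are kept, as described above.
    """
    tokens, i = [], 0
    while i < len(text):
        # Try the longest candidate first, then shrink until a token matches.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return tokens


# Hypothetical vocabulary: single characters plus the merged tokens.
vocab = set("tokenizrsx") | {"to", "tok", "toke", "token"}
```

With this vocabulary, `encode_longest_match("tokenizer", vocab)` uses `token` rather than `t`, while a misspelling such as `tkoen` falls back to single characters because the small tokens were kept.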

17:15

But there's actually--

so people don't usually

17:18

talk that much about

tokenizers, but there's

17:20

a lot of computational benefits

or computational tricks

17:24

that you can do for making

these things faster.

17:27

So I really don't think

we-- and honestly, I

17:29

think a lot of people think

that we should just get away

17:31

from tokenizers and just

kind of tokenize character

17:34

by character or bytes by bytes.

17:36

But as I said, right now

there's this issue of length,

17:39

but maybe one day, like

in five or 10 years,

17:42

we will have different

architectures

17:43

that don't scale quadratically

with the length of the sequence.

17:46

And maybe we'll move

away from tokenizers.

17:50

So can you share

with us the drawback?

17:53

Why do people want to move

away from the tokenizer?

17:57

Yeah.

17:58

So I think one good

example is math.

18:03

If you think about math,

actually numbers right now

18:06

are not tokenized digit by digit.

18:07

So for example, 327 might

have its own token, which

18:10

means that models,

when they see numbers,

18:13

they don't see them

the same way as we do.

18:15

And this is very

annoying because I mean,

18:17

the reason why we can

generalize with math

18:19

is because we can deal with

every letter separately

18:22

and we can then do composition.

18:24

Where you know that

basically if you add stuff,

18:26

it's the same thing as

adding every one separately

18:28

plus like whatever

the unit that you add.

18:30

So they can't do that.

18:32

So then you have to do

special tokenization.

18:35

And, like, one of the

big changes that GPT4 did

18:39

is changing the way

that they tokenize code.

18:42

So for example, if you have

code, you know you have often,

18:46

in Python, these four

spaces at the beginning.

18:48

Those were dealt with

strangely before.

18:52

And as a result, like,

the model couldn't really

18:54

understand how to

deal with code.

18:57

So tokenizers actually

matter a lot.

19:00

OK, so I'll move on right now,

but we can come back later

19:04

on tokenizers.

19:05

Great.

19:06

So we talked about the task,

the loss, the tokenizer.

19:08

Let's talk a little

bit about evaluation.

19:11

So the way that LLMs

are usually evaluated

19:13

is what we call-- is using

what we call perplexity.

19:16

At a high level it's basically

just your validation loss.

19:20

The slight difference

with perplexity

19:21

is that we use something that

is slightly more interpretable,

19:24

which is that we use the

average per token loss,

19:27

and then you exponentiate it.

19:29

And the reason why

you exponentiate it

19:30

is because you want--

19:32

I mean, the loss has

a log inside and you--

19:35

like, one, humans

are actually pretty

19:36

bad at thinking in log space.

19:38

But two, logs depend

on the base of the log,

19:41

while when you exponentiate,

you basically have everything

19:44

in units of vocabulary size.

19:48

And the average per

token is just so

19:50

that your perplexity is

independent of the length

19:52

of your sequence.

19:54

So perplexity is just

two to the power of the average

19:57

per-token loss of the sequence.

20:00

So perplexity is between one

and the length of the vocabulary

20:04

of your tokenizer.

20:05

One is simply, well,

if you predict every word

20:08

perfectly, then the likelihood

20:11

will basically be

a product of ones.

20:14

So the best perplexity

you can have is one.

20:16

If you really have no

idea, you basically

20:18

predict with one divided

by size of vocabulary

20:22

and then you do simple

math and you basically

20:24

get perplexity of

size of vocabulary.

20:26

So the intuition

of perplexity is

20:28

that it's basically

the number of tokens

20:30

that your model is, kind

of, hesitating between.

20:32

So if your model is perfect,

it doesn't hesitate.

20:35

It knows exactly the word.

20:36

If it really has

no idea, then it

20:38

hesitates between all

of the vocabulary.
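
The two extremes can be checked numerically. In this sketch the per-token loss uses a base-2 log so that it matches the "two to the power" form above; the vocabulary size of 50,000 is just an assumed number.

```python
import math


def perplexity(token_probs):
    """Perplexity from the probabilities the model assigned to each true token."""
    avg_loss = -sum(math.log2(p) for p in token_probs) / len(token_probs)
    return 2 ** avg_loss


vocab_size = 50_000  # hypothetical vocabulary size

perfect = perplexity([1.0, 1.0, 1.0, 1.0])  # every token predicted with certainty
uniform = perplexity([1 / vocab_size] * 4)  # no idea: uniform over the vocabulary
```

`perfect` comes out as 1 and `uniform` comes out as the vocabulary size (up to floating-point rounding), which are the lower and upper ends of the range described above.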

20:43

So perplexity really improved.

20:46

That's perplexity on a standard

data set between 2017 and 2023.

20:50

It went from a kind of 70

tokens to less than 10 tokens

20:54

over these five, six years.

20:56

So that means that the

models were previously

20:58

hesitating between 70 words every

time they were generating a word,

21:02

and now they're hesitating

between less than 10 words.

21:05

So that's much better.

21:06

Perplexity is actually

not used anymore

21:08

in academic benchmarking,

mostly because it depends

21:11

on the tokenizer that you use.

21:12

It depends on the actual data

that people are evaluating on.

21:16

But it's still very important

for development of LLMs.

21:19

So when you actually

train your own LLM people

21:21

will still really look

at the perplexity.

21:26

One common other way and

now more common in academia

21:30

of evaluating these LLMs is just

by taking all the classical NLP

21:34

benchmarks, and I'll give you

a few examples later and just,

21:37

kind of, aggregating everything.

21:39

So collect as many automatically

evaluatable benchmarks

21:43

and just evaluate

across all of them.

21:46

So one such-- or

actually two such

21:50

benchmarks are what we call

HELM, which is from Stanford.

21:54

And another one is the

Hugging Face open leaderboard,

21:56

which are probably the two

most common ones right now.

22:00

So just to give you

an idea, in HELM,

22:02

there are all of these types

of tasks, which

22:04

are mostly things that

can be easily evaluated

22:08

like question answering.

22:09

So think about many different

question answering tasks.

22:13

And the benefit with

question answering

22:15

is that you usually know

what is the real answer.

22:18

So you can-- the way that

you evaluate these models

22:20

and I'll give you a concrete

example in one second,

22:22

is that you can just look at

how likely the language model is

22:26

to generate the real answer

compared to some other answers.

22:30

And that's essentially,

at a high level,

22:31

how you evaluate these models.

22:33

So to give you a

specific example,

22:35

MMLU is probably the most common

academic benchmark for LLMs.

22:42

And this is just a

collection of many questions

22:45

and answers in all

of those domains.

22:47

For example, college

medicine, college physics,

22:50

astronomy and these

type of topics.

22:52

And the questions are things

like, so this is in astronomy.

22:55

What is true for a

type Ia supernova?

22:58

Then you give four

different potential answers

23:01

and you just ask the model

which one is more likely.

23:04

So there are many

different ways of doing it.

23:06

Either you can look at the

likelihood of generating

23:09

all these answers, or

you can ask the model

23:11

which one is the most likely.

23:12

So there are different ways

that you can prompt the model,

23:15

but at a high level, you

know which one is correct.

23:17

And there are three

other mistakes.

23:20

Yes.

23:22

Creating unconstrained

text as an output.

23:24

Yeah.

23:25

How do you evaluate

a model if it

23:28

gives something that's

semantically completely

23:31

identical, but is not the

exact tokens that you expect?

23:35

Yeah.

23:36

So that's a great question.

23:37

I'll talk more about that later.

23:38

Here, in this case, we

don't do unconstrained.

23:41

So the way you would evaluate

MMLU is basically either

23:44

you ask the first

question, and then you

23:47

look at the likelihood of

the model generating A,

23:50

the likelihood of the model

generating B, C, and D

23:53

and you look at which

one is the most likely.

23:55

Or you can ask the

model out of A, B, C, D,

23:58

which one is the most likely.

23:59

And you look at whether the

most likely next token is A, B,

24:03

C, or D. So you

constrain the model

24:05

to say it can only

answer these four things.

24:09

You say you constrain--

24:10

Yeah.

24:11

You constrain the

prompt, or do you

24:13

mean that, of the whole

probability distribution

24:15

that it outputs,

you're only comparing

24:17

the outputs of like-- you're

only comparing the A token, the

24:19

[INAUDIBLE].

24:20

Yeah.

24:20

So in the second case I gave

you, you would do exactly the--

24:24

actually you would do both.

24:25

You would prompt the

model saying A, B, C, or D

24:27

plus you would constrain to

only look at these four tokens.

24:32

In the first case, you don't

even need to generate anything.

24:34

So in the first case,

you literally just

24:36

look, given it's

a language model,

24:38

it can give a distribution

over sentences.

24:40

You just look at what is

the likelihood of generating

24:43

all of these words?

24:45

What is the likelihood of

generating the second choice?

24:48

And you just look at whether the

most likely sentence is actually

24:52

the real answer.

24:54

So you don't actually

sample from it,

24:56

you really just

use $p(x_1, \ldots, x_L)$.

24:59

Does that make sense?
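
The first, likelihood-based way of scoring a multiple-choice question can be sketched as follows. The `log_prob(prompt, continuation)` interface, the toy scorer, and the two questions are all assumptions made for illustration; a real evaluation would call an actual language model and would have to decide details such as length normalization.

```python
def pick_answer(question, choices, log_prob):
    """Return the index of the choice the model finds most likely.

    `log_prob(prompt, continuation)` is an assumed interface that returns
    log p(continuation | prompt) under the language model. Nothing is sampled.
    """
    scores = [log_prob(question, choice) for choice in choices]
    return max(range(len(choices)), key=lambda i: scores[i])


def accuracy(examples, log_prob):
    """Fraction of (question, choices, correct_index) examples answered correctly."""
    correct = sum(
        pick_answer(q, choices, log_prob) == answer for q, choices, answer in examples
    )
    return correct / len(examples)


def toy_log_prob(prompt, continuation):
    """Made-up scorer standing in for a real model: it prefers shorter answers."""
    return -float(len(continuation))


examples = [
    ("2 + 2 = ?", ["4", "five", "twenty-two", "zero point one"], 0),
    ("Capital of France?", ["Marseille", "Paris", "Lyon is the one", "Bordeaux"], 1),
]
score = accuracy(examples, toy_log_prob)
```

The benchmark accuracy is then just the fraction of questions where the highest-likelihood choice is the correct one.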

25:01

That being said, evaluation

of open-ended questions

25:05

is something we're going

to talk about later,

25:06

and it's actually

really important

25:08

and really challenging.

25:09

Yes.

25:10

Earlier you mentioned

[INAUDIBLE] metrics

25:13

like perplexity

are not usually

25:16

used because they

depend on how you do

25:18

your tokenization,

some design choices.

25:21

I was wondering if you

could speak more to that.

25:24

Yeah.

25:25

So think about perplexity.

25:26

I told you perplexity is

between 1 and vocabulary size.

25:30

So now imagine that ChatGPT

uses a tokenizer that has 10,000

25:34

tokens but Gemini from Google

uses a tokenizer that has

25:38

100,000 potential tokens.

25:41

Then actually

the upper bound

25:45

of the perplexity that you can

get is actually worse for Gemini

25:48

than for ChatGPT.

25:50

Does that make sense?

25:52

So that's just an idea.

25:53

It's actually a little bit

more complicated than that,

25:55

but that's just one

first-order example

25:58

of where you can see that the

tokenizer actually matters.

26:02

Great.

26:05

OK, so evaluation challenges.

26:07

There are many.

26:08

I'll just talk about

two really briefly.

26:10

One, as I told you, there are

two ways of doing evaluation

26:13

for MMLU.

26:14

Actually, there are

many more than two

26:16

but I gave you two examples.

26:17

And it happens that

for a long time,

26:20

even though that was a

very classical benchmark

26:22

that everyone uses, actually

different companies

26:27

and different

organizations were actually

26:32

using different ways

of evaluating MMLU.

26:34

And as a result, you get

completely different results.

26:37

For example, LLaMA-65B, which

was the first model from Meta

26:42

in the Llama series, had

on HELM 63.7 accuracy

26:47

but on this other

benchmark had like 48.8.

26:53

So really the way that you

evaluate, and this is not even

26:55

talking about prompting

this is really just the way

26:58

that you evaluate the models.

27:01

Prompting is another issue.

27:02

So really, there are a

lot of inconsistencies.

27:04

It's not as easy as it looks.

27:07

First thing.

27:08

Yeah, sorry.

27:08

How can we make sure

that all these models

27:10

aren't trained on the benchmark?

27:13

Second thing.

27:14

This is a great question.

27:15

Train test contamination.

27:17

This is something

which I would say

27:19

is really important

in academia in--

27:24

given that the talk is mostly

about training large language

27:26

models, for companies, it's

maybe not that important

27:29

because they know

what they trained on.

27:33

For us, we have no idea.

27:35

So, for us, it's a real problem.

27:37

So there are many

different ways of trying

27:39

to test whether the test set--

27:42

or sorry, whether the

test set was actually

27:44

in the training set.

27:45

One, kind of, cute trick

that people in the lab,

27:51

in Tatsu's lab, have

found, is that what you can do

27:54

is that given that most

of the data sets online

27:57

are not randomized,

you can just look at--

28:00

and that language models,

what they do is just

28:02

predict the next word.

28:03

You can just look at

the entire test set.

28:06

What if you generate

all the examples

28:09

in order versus all the

examples in a different order.

28:13

And if it's more likely to

generate a thing in order, given

28:17

that there's no

real order there,

28:19

then it means that probably

it was in the training set.

28:21

Does that make sense?
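
The ordering trick can be sketched as a permutation test. This is only an illustration under stated assumptions: `log_likelihood` is a hypothetical callable that returns the model's log-likelihood of a list of examples concatenated in the given order, and the toy scorers below stand in for a real language model.

```python
import random

def order_contamination_p_value(examples, log_likelihood, num_shuffles=200, seed=0):
    """Fraction of random orderings the model likes at least as much as the published order."""
    rng = random.Random(seed)
    canonical = log_likelihood(list(examples))
    at_least_as_likely = 0
    for _ in range(num_shuffles):
        shuffled = list(examples)
        rng.shuffle(shuffled)
        if log_likelihood(shuffled) >= canonical:
            at_least_as_likely += 1
    # Add-one correction so the estimate is never exactly zero.
    return (at_least_as_likely + 1) / (num_shuffles + 1)

def make_memorizing_scorer(memorized_order):
    """Toy stand-in for a contaminated model: rewards adjacent pairs it has seen in order."""
    follows = set(zip(memorized_order, memorized_order[1:]))
    def score(sequence):
        return float(sum((a, b) in follows for a, b in zip(sequence, sequence[1:])))
    return score

examples = [f"question {i}" for i in range(20)]
p_contaminated = order_contamination_p_value(examples, make_memorizing_scorer(examples))
p_clean = order_contamination_p_value(examples, lambda sequence: 0.0)  # order-blind toy model
print(p_contaminated, p_clean)
```

A small value means the published order is suspiciously more likely than shuffled orders, which is the signal described above.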

28:23

So there are many--

that's like one of them.

28:24

There are many other

ways of doing it.

28:26

Train test

contamination, again, not

28:28

that important for development,

really important for

28:30

academic benchmarking.

28:33

Great.

28:33

So there are many

other challenges,

28:34

but I'll move on for now.

28:37

Great.

28:38

Data.

28:40

So data is another

really big topic.

28:43

At a high level people

just say you basically

28:45

train large language

models on all of internet.

28:48

What does that even mean?

28:50

So people sometimes say,

well, of clean internet,

28:53

which is even less defined.

28:55

So internet is very dirty

and really not representative

28:59

of what we want in practice.

29:00

If I download a random

website right now,

29:03

you would be shocked

at what is in there.

29:06

It's definitely

not your Wikipedia.

29:08

So I'll go really briefly

on what people do.

29:14

I can answer some

questions, but I mean,

29:16

data is on its own

it's a huge topic.

29:19

Basically, first what you do

is download all of internet.

29:22

What that means is that

you use web crawlers that

29:25

will go on every web page, on

internet or every web page that

29:29

is on Google.

29:31

And that is around 250

billion pages right now.

29:36

And that's around

1 petabyte of data.

29:39

So this is actually Common

Crawl, which is one web crawler.

29:42

So people don't usually

write their own web crawlers

29:45

what they do is that they

use standard web crawlers,

29:47

and Common Crawl is one of them

that basically every month adds

29:51

all the new websites that were

added on internet that are found

29:56

by Google, and they put it in

a big basically a big data set.

30:00

So that's-- on Common Crawl, you

have around 250 billion pages

30:04

right now.

30:04

So 1E6 gigabytes of data.
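
As a quick sanity check on these figures, assuming 1 petabyte means 10**15 bytes, the numbers quoted above work out to a few kilobytes per page:

```python
PAGES = 250e9        # pages quoted for Common Crawl
TOTAL_BYTES = 1e15   # 1 petabyte, taking 1 PB = 10**15 bytes (assumption)

total_gigabytes = TOTAL_BYTES / 1e9
bytes_per_page = TOTAL_BYTES / PAGES
print(total_gigabytes, bytes_per_page)
```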

30:07

Once you have this--

30:09

so this is a random web page.

30:11

Like literally random

from this Common Crawl.

30:14

And what you see is

that one, it really

30:16

doesn't look like the type of things

that you would usually see,

30:18

but actually-- so

this is an HTML page.

30:21

It's hard to see, but

if you look through

30:24

you will see some content.

30:26

For example, here,

Test King World

30:30

is your ultimate source for

the system x high performance

30:33

server.

30:34

And then you have three dots.

30:35

So you don't even-- the

sentence is not even finished.

30:37

That's how random

internet looks like.

30:40

So, of course, it's

not that useful

30:42

if you just train a

large language model

30:44

to generate things like this.

30:45

So what are some of the

steps that are needed?

30:48

First one, you extract

the text from the HTML.

30:51

So that's what I just

tried to do by looking

30:53

at basically the correct tags.
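
A minimal sketch of that extraction step, using only Python's standard `html.parser`. The talk does not say which extractor is used in practice, so the class name and the choice of skipped tags here are assumptions for illustration.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collects visible text and ignores script/style content."""
    SKIPPED_TAGS = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside skipped tags.
        if self._skip_depth == 0 and data.strip():
            self._chunks.append(data.strip())

    def text(self):
        return " ".join(self._chunks)

def extract_text(html):
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()

sample = ("<html><head><style>p{color:red}</style></head><body>"
          "<p>Hello</p><script>track()</script><p>world...</p></body></html>")
print(extract_text(sample))
```

This handles only the easy case. Math, tables and boilerplate need much more care, as the next lines explain.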

30:55

There are a lot of

challenges through this.

30:57

For example, extracting

math is actually

30:59

very complicated, but pretty

important for training

31:02

large language models.

31:03

Or for example, boilerplates.

31:05

A lot of your forums will

have the same type of headers,

31:08

the same type of footers.

31:10

You don't want to repeat

all of this in your data,

31:13

and then you will filter

undesirable content.

31:16

So not safe for work,

harmful content, PII.

31:20

So usually every

company has basically

31:22

a blacklist of websites

that they don't

31:26

want to train their models on.

31:27

That blacklist is very

long and you basically

31:30

say if it comes from there,

we don't train on this.
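
The blacklist idea can be sketched as a host check against a set of blocked domains. The domain names below are hypothetical placeholders; real lists are far longer.

```python
from urllib.parse import urlparse

# Hypothetical blocked domains, for illustration only.
BLOCKED_DOMAINS = {"blocked.example", "spam.example"}

def is_blocked(url, blocked_domains=BLOCKED_DOMAINS):
    """True if the URL's host is a blocked domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in blocked_domains)

def keep_allowed(urls):
    return [u for u in urls if not is_blocked(u)]

urls = [
    "https://forum.blocked.example/thread/1",
    "https://notblocked.example/page",
    "https://SPAM.example/offer",
]
print(keep_allowed(urls))
```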

31:32

There are other ways

of doing these things.

31:34

Is that you can train a small

model for classifying what

31:36

is PII, removing these things.

31:39

It's hard.

31:40

Every point here that

I'm going to show you

31:42

is a huge amount of

work, but I'm just

31:46

going to go quickly through it.

31:48

So filter undesirable content.

31:50

Second or fourth

is de-duplication.

31:54

As I said, you might have

things like headers and footers

31:57

in forums that are

always the same.

31:59

You want to remove that.

32:01

Another thing that

you might have

32:02

is a lot of URLs that are

different, but actually show

32:05

the same website.

32:08

And you might also have a lot of

paragraphs that come from common

32:13

books that are basically

duplicated 1,000 times

32:16

or 10,000 times on internet.

32:18

So you have to de-duplicate.

32:20

Also very challenging because

you have to do that at scale.
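
A minimal sketch of exact de-duplication at the paragraph level, assuming that hashing a whitespace- and case-normalized paragraph is enough to spot repeats. Near-duplicate detection at web scale needs fuzzier techniques that this sketch does not attempt.

```python
import hashlib

def normalize(paragraph):
    """Lowercase and collapse whitespace so trivial variations hash the same."""
    return " ".join(paragraph.lower().split())

def deduplicate(paragraphs):
    """Keep the first occurrence of each normalized paragraph."""
    seen = set()
    unique = []
    for paragraph in paragraphs:
        digest = hashlib.sha256(normalize(paragraph).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(paragraph)
    return unique

paragraphs = [
    "Call me Ishmael.",
    "call me   Ishmael.",
    "Home | About | Contact",
    "Home | About | Contact",
    "A paragraph that appears once.",
]
print(deduplicate(paragraphs))
```

Storing only fixed-size digests rather than full text is what keeps the memory cost manageable as the corpus grows.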

32:24

Once you do the

de-duplication, you

32:26

will do some

heuristic filtering.

32:28

You will try to remove

low-quality documents.

32:31

The way you do that are things

like rules-based filtering.

32:35

For example, if you see that

there are some outlier tokens.

32:37

If the distribution of

tokens in the website

32:39

is very different than the

usual distribution of tokens,

32:42

then it's probably some outlier.

32:43

If you see that the length

of the words in this website

32:46

is super long, there's something

strange going on that website.

32:49

If you see that the website

has only three words,

32:52

maybe, is it worth

training on it.

32:54

Maybe not.

32:54

If it has 10 million words,

maybe there's something also

32:58

wrong going on that page.

33:00

So a lot of rules like this.
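
Rules like these can be sketched as a single predicate. The thresholds below are hypothetical and chosen only for illustration; the talk does not give concrete values.

```python
def passes_heuristics(text, min_words=50, max_words=100_000, max_mean_word_length=12.0):
    """Rule-based quality check: document length and average word length."""
    words = text.split()
    if not words:
        return False
    if not (min_words <= len(words) <= max_words):
        return False  # too short or suspiciously long
    mean_length = sum(len(word) for word in words) / len(words)
    return mean_length <= max_mean_word_length  # very long "words" suggest junk

print(passes_heuristics("only three words"))
print(passes_heuristics(" ".join(["word"] * 200)))
print(passes_heuristics(" ".join(["x" * 40] * 200)))
```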

33:01

Yes.

33:02

Why do we filter out

undesirable content

33:04

from our data set instead

of putting it in as,

33:08

like, a supervised loss?

33:10

Can we not just say, here's

this like, hate speech website,

33:14

let's actively try to--

33:17

let's actively penalize

the model for getting it.

33:19

We'll do exactly that,

but not at this step.

33:22

That's where the post-training

will come from.

33:25

Pretraining the

idea is just to say

33:30

I want to model, kind of, how

humans speak, essentially.

33:34

And I want to remove all

these headers, footers

33:36

and menus and things like this.

33:38

But it's a very good

idea that you just had.

33:41

And that's exactly

what we'll do later.

33:45

Next step,

model-based filtering.

33:47

So once you filter a lot

of data, what you will do--

33:50

that's actually a

very cute trick.

33:51

You will take all

of Wikipedia and you

33:54

will look at all

the links that are

33:56

linked through Wikipedia pages.

33:58

Because probably if something

is referenced by Wikipedia,

34:01

it's probably some

high-quality website.

34:02

And you will train a classifier

to predict whether something

34:07

comes from-- whether a

document comes from one

34:10

of these references

from Wikipedia

34:13

or whether it's

from the random web.

34:15

And you will try

to basically say,

34:17

I want more of the things that

come from Wikipedia references.

34:21

Does that make sense?

34:23

So yeah.

34:24

So you will train a

machine learning model.

34:26

Usually also very simple

models because you

34:28

need to do that really at scale.

34:30

I mean, just think about

the 250 billion pages.
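
A minimal sketch of such a quality classifier. The talk only says the model is very simple, so the naive-Bayes-style word scorer below is an assumption, and the training documents are made up for illustration.

```python
import math
from collections import Counter

def train_quality_classifier(reference_docs, random_docs):
    """Per-word log-odds of appearing in Wikipedia-referenced pages vs. random web pages."""
    ref_counts = Counter(w for d in reference_docs for w in d.lower().split())
    rnd_counts = Counter(w for d in random_docs for w in d.lower().split())
    vocab = set(ref_counts) | set(rnd_counts)
    # Add-one smoothing so unseen words in one class do not give infinite weights.
    ref_total = sum(ref_counts.values()) + len(vocab)
    rnd_total = sum(rnd_counts.values()) + len(vocab)
    return {
        w: math.log((ref_counts[w] + 1) / ref_total) - math.log((rnd_counts[w] + 1) / rnd_total)
        for w in vocab
    }

def quality_score(weights, doc):
    """Positive means the document looks more like the referenced pages."""
    return sum(weights.get(w, 0.0) for w in doc.lower().split())

# Made-up toy documents standing in for the two classes.
reference_docs = ["the theorem is proved by induction", "the study measured protein levels"]
random_docs = ["buy cheap pills now click here", "click here to win free prizes now"]
weights = train_quality_classifier(reference_docs, random_docs)
print(quality_score(weights, "proved by induction"), quality_score(weights, "click here now"))
```

Scoring is a dictionary lookup per word, which is the kind of cost that stays affordable across billions of pages.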

34:34

Next one, you will try

to classify your data

34:37

into different domains.

34:41

You will say, OK, this is

entertainment, this is books,

34:43

this is code, this is like

these type of domains.

34:46

And then you will try to

either up or down weight

34:51

some of the domains.

34:52

For example, you might say--

34:54

you might see that actually if

you train more on code, then

34:57

actually your model becomes

better on reasoning.

34:59

So that's something that

people usually say in

35:01

a very hand-wavy way.

35:02

If you train your

model more on code,

35:04

actually it helps reasoning.

35:05

So you want to upweight

the coding distribution

35:08

because that helps for general

language modeling skills.

35:11

Books is usually also another

one that people usually upweight.

35:16

Entertainment, they

usually down weight.

35:18

So things like this.
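
Up- and down-weighting domains amounts to rescaling how often each domain is sampled. In this sketch both the token counts and the multipliers are made up for illustration; the talk gives only the direction of the change, not the amounts.

```python
def reweight(domain_tokens, multipliers):
    """Turn raw per-domain token counts into sampling proportions after reweighting."""
    weighted = {d: n * multipliers.get(d, 1.0) for d, n in domain_tokens.items()}
    total = sum(weighted.values())
    return {d: w / total for d, w in weighted.items()}

# Made-up token counts (in arbitrary units) and hypothetical multipliers.
domain_tokens = {"code": 100, "books": 100, "entertainment": 300, "other": 500}
multipliers = {"code": 2.0, "books": 1.5, "entertainment": 0.5}

mix = reweight(domain_tokens, multipliers)
print(mix)
```

Here code goes from 10% of the raw data to 20% of the training mix, while entertainment drops from 30% to 15%.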

35:19

Of course, you want to do it--

so people used to do it, maybe

35:24

kind of heuristically.

35:25

Now there's entire

pipelines that we'll

35:27

talk about of how to do

these things slightly

35:30

more automatically.

35:33

And then at the end of

training, you usually train--

35:37

after training on all

of this data that we saw

35:40

you usually train on

very high quality data

35:42

at the end of training your

large language model where you

35:46

decrease your learning rate.

35:47

And that basically

means that you're,

35:49

kind of, overfitting your model

on a very high quality data.

35:52

So usually what you

do there is Wikipedia.

35:55

You basically

overfit on Wikipedia

35:57

and you overfit on, like,

human data that was collected.

36:04

The other thing is like

continual pretraining

36:06

for getting longer context.

36:07

I'm going to skip over

all of these things.

36:09

But that's just to give

you a sense of how hard it

36:12

is when people just say I'm

going to train on internet,

36:15

that's a lot of work.

36:17

And, really, we haven't

figured it out yet.

36:19

So collecting data

well is a huge part

36:23

of practical large

language models.

36:24

Some might say that

it's actually the key.

36:26

Yes.

36:27

[INAUDIBLE] about data.

36:29

So basic question.

36:30

So usually when you start

with like a petabyte of data,

36:33

after you go through

all the steps,

36:35

what's the typical amount

of data you have remaining.

36:37

And then how large a

team does it typically

36:40

take to go through all the

data steps you talked about?

36:43

Sorry how la-- is your

question how large

36:45

is the data after you filter?

36:46

Yeah.

36:47

After you filter and then

you go through all the steps.

36:49

How large a team do you

need to go through, like,

36:52

all the filtration

steps you mentioned.

36:54

How slow is it or--

36:56

How many people

would you need to be

37:00

able to do this [INAUDIBLE]?

37:02

OK that's a great question.

37:03

I'm going to somewhat

answer about the data.

37:06

How large is the data set

at the end of this slide.

37:10

For number of people that work

on it, that's a good question.

37:15

I'm actually not quite

sure, but I would say, yeah,

37:19

I actually don't

quite know but I

37:22

would say it's probably even

bigger than the number of people

37:25

that work on the tuning of

the pretraining of the model.

37:29

So the data is bigger

than the modeling aspect.

37:34

Yeah, I don't think

I have a good sense.

37:37

I would say probably in Llama's

team, which has 70-ish people,

37:41

I would say maybe

15 work on data.

37:45

Yeah.

37:46

All these things, you don't

need that many people,

37:48

you need a lot of compute also.

37:49

Because for data you

need a lot of CPUs.

37:52

So, yeah.

37:53

And I'll answer

the second question

37:54

at the end of this slide.

37:56

So as I just, kind

of, alluded to really,

37:59

we haven't solved data

at all for pretraining.

38:02

So there's a lot of research

that has to be done.

38:04

First, how do you process

these things super efficiently?

38:07

Second, how do you

balance kind of all

38:09

of these different domains?

38:10

Can you do synthetic

data generation?

38:12

That's actually a

big one right now.

38:14

And because we don't have--

38:16

we'll talk about that

later, but we don't have

38:18

enough data on the internet.

38:20

Can you use multimodal data

instead of just text data?

38:23

And how does that improve

even your text performance?

38:28

There's a lot of secrecy

because, really, this

38:30

is the key of most of the

pretraining large language

38:33

models.

38:34

So for competitive dynamics,

usually these companies

38:39

don't talk about how they

do the data collection.

38:41

And also there's a

copyright liability issue.

38:44

They definitely don't

want to tell you

38:45

that they've trained on

books even though they did

38:47

because otherwise people can sue them.

38:50

Common academic benchmarks.

38:52

So that will, kind of,

answer what you asked.

38:54

It started-- so those

are the smaller ones.

38:57

The names are not

that important,

38:58

but it started from around

150 billion tokens, which is

39:02

around 800 gigabytes of data.

39:04

And now it's around

15 trillion--

39:06

15 trillion tokens,

which is also

39:09

the size of the models that

are-- right now the best models

39:12

are probably trained

on that amount of data.

39:14

So 15 trillion tokens,

which is probably,

39:18

I guess, two orders of

magnitude bigger than that.

39:20

So 80E3 gigabyte.
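
The 80E3 figure follows from scaling the earlier numbers, assuming the bytes-per-token ratio of the smaller datasets carries over unchanged:

```python
early_tokens = 150e9       # tokens in the earlier, smaller datasets
early_gigabytes = 800      # their approximate size on disk
current_tokens = 15e12     # tokens quoted for current datasets

bytes_per_token = early_gigabytes * 1e9 / early_tokens   # roughly 5.3 bytes per token
scale_up = current_tokens / early_tokens                 # two orders of magnitude
current_gigabytes = current_tokens * bytes_per_token / 1e9
print(bytes_per_token, scale_up, current_gigabytes)
```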

39:23

So that would be around 100

to 1,000 times filtering

39:29

of the Common Crawl,

if I'm not mistaken.

39:32

So, yeah.

39:34

One very famous one is the Pile.

39:37

So this is an academic

benchmark, the Pile.

39:39

And we can just look at what

distribution of data they have.

39:42

It's things like

arXiv, PubMed Central,

39:46

which is all the biology stuff.

39:50

Here it's Wikipedia, you see

Stack Exchange, some GitHub

39:55

and some books and

things like this.

39:58

Again, this is on

the smaller side.

39:59

So this is-- if we look at here,

this is on 280B so, in reality,

40:03

it's like 100 times bigger

so you cannot have that much

40:05

of GitHub and of Wikipedia.

40:09

In terms of closed

source models.

40:11

Just to give you

an idea, Llama 2

40:14

it was trained on

2 trillion tokens,

40:16

Llama 3 15 trillion

tokens, which is currently

40:19

the best model that we know

on how much it was trained on,

40:22

which is the same thing as is

the best academic or the biggest

40:26

academic benchmark, which

is 15 trillion tokens.

40:29

GPT-4, we don't really know,

but it's probably

40:31

in the same order of magnitude

or it's probably around that.

40:33

Actually, it's probably

around 13 from leaks.

40:36

If the leaks are true.

40:39

Great.

40:41

So scaling laws.

40:43

Any other questions on data

before we go to scaling laws?

40:48

Sorry I know I'm giving

you a lot of information,

40:51

but there's a lot that goes into

training large language models.

40:54

Great. Scaling laws.

40:56

So the idea is that what people

saw around 2020, or at least

41:01

from a long time, but they've

been able to theoretically show

41:05

it or empirically

show it since 2020,

41:07

is that the more data

you train your models on

41:09

and the larger the models,

the better the performance.

41:12

This is actually pretty

different than what

41:14

you've seen in this class.

41:15

In this class we teach

you about overfitting.

41:17

Overfitting doesn't happen

with large language models.

41:20

Larger models,

better performance.

41:23

It's something that

really took a long time

41:25

for the community who took

this type of class to realize.

41:29

But for the exam,

overfitting exists.

41:33

So, OK, the idea of scaling laws

is that if-- given that more

41:38

data and larger

models will always

41:40

give you better

performance, can we

41:42

predict how much better

your performance will

41:46

be if you increase the amount of

data and the size of your model?

41:50

And surprisingly, it works.

41:52

So here you see three plots

from a very famous paper called

41:55

Scaling Laws from OpenAI.

41:57

Here you see on

the x-axis compute.

42:00

So how much did you train--

42:01

like, how much compute did

you spend for training?

42:04

And here you see test loss.

42:05

So this is essentially,

I mean, perplexity,

42:08

but it's your validation loss.

42:09

So it's a log of the perplexity.
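
Written out, and assuming the base-2 convention used when perplexity is described as 2 to the power of the loss, the relation between the plotted loss and perplexity over $T$ tokens is:

```latex
\mathcal{L} = -\frac{1}{T}\sum_{t=1}^{T}\log_2 p(x_t \mid x_{<t}),
\qquad
\mathrm{PPL} = 2^{\mathcal{L}}
\quad\Longleftrightarrow\quad
\mathcal{L} = \log_2 \mathrm{PPL}.
```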

42:11

And if you put these

two on log scale,

42:15

then you see that the

performance or the--

42:19

sorry, the scaling

law is linear.

42:22

That means that if you

increase your compute

42:25

by a certain amount, you can say

by how much your test loss will

42:29

actually decrease.

42:30

Same thing with data and

same thing for parameters.

42:33

If you increase

the data set size,

42:35

your loss will

decrease by an amount

42:38

that is somewhat predictable.

42:40

If you increase the

number of parameters,

42:42

the loss will

decrease by an amount,

42:44

which is somewhat predictable.
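
"Linear on a log-log plot" means the loss follows a power law, so it can be fit with ordinary least squares on the logarithms. A minimal sketch follows; the compute values and the synthetic losses are made up, and the exponent -0.05 is chosen only for illustration, not taken from the paper.

```python
import math

def fit_power_law(xs, ys):
    """Least-squares line through (log10 x, log10 y); returns (slope, intercept)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    n = len(lx)
    mean_x, mean_y = sum(lx) / n, sum(ly) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
             / sum((a - mean_x) ** 2 for a in lx))
    return slope, mean_y - slope * mean_x

def predict(slope, intercept, x):
    """Extrapolate the fitted line back in the original (non-log) units."""
    return 10 ** (intercept + slope * math.log10(x))

# Synthetic data: loss = 10 * compute ** -0.05 (illustrative, not measured).
compute = [1e18, 1e19, 1e20, 1e21]
loss = [10.0 * c ** -0.05 for c in compute]

slope, intercept = fit_power_law(compute, loss)
predicted = predict(slope, intercept, 1e22)  # extrapolate 10x beyond the last point
print(slope, predicted)
```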

42:45

This is really amazing.

42:47

Very surprising.

42:49

I mean, it looks innocuous when

you look at these type of plots,

42:52

but that's crazy because it

means that you can predict

42:55

how well we're going to

perform in two or three years,

42:58

depending on how much

compute we will add,

42:59

assuming that these

things will hold.

43:01

There's nothing

theoretical about it.

43:04

Yes.

43:05

Two things.

43:06

One, what is the loss

that they're using here.

43:08

Is this perplexity?

43:09

So it's-- I said perplexity was

like 2 to the power of the loss.

43:13

So this is the log

of the perplexity.

43:17

And then the second

thing is, when

43:19

you increase the

number of parameters

43:21

or you increase the data

set size [INAUDIBLE] data

43:24

[INAUDIBLE] times, doesn't

that just inherently

43:26

increase your compute?

43:27

Like does all of this

[INAUDIBLE] come to just how

43:30

[INAUDIBLE] you [INAUDIBLE]?

43:31

Yes.

43:31

--or something

specific [INAUDIBLE]?

43:32

No, this is a great question.

43:33

So the compute here is actually

a function of two things, the data

43:37

and the parameters.

43:38

What I'm showing here

is that you can--

43:40

well, actually, we're going

to talk about that in detail.

43:42

But basically, if you increase

the number of parameters,

43:44

you should increase the

amount of data that you have.

43:48

So you actually don't

go multiple times

43:50

to the same data set.

43:51

No one does epochs,

at least not yet,

43:56

because we still have,

kind of, enough data.

43:59

So yeah, this is

all the same trend,

44:01

which is increase

compute decrease loss.

44:04

Yes.

44:06

Have we seen the numbers for

the last two years or this

44:09

is still holding?

44:10

It is still holding.

44:13

I don't have good

numbers to show you,

44:16

but it is still

holding, surprisingly.

44:20

Yes.

44:21

Is there no evidence that

control quality density

44:23

will ever plateau?

44:25

In theory, we would expect

it to plateau, [INAUDIBLE]?

44:28

No empirical evidence of

plateauing anytime soon.

44:33

Why?

44:34

We don't know.

44:35

Will it happen?

44:37

Probably.

44:37

I mean, it doesn't need

to because it's actually

44:39

in log scale.

44:40

So it's not like

as if it had to go.

44:43

It had to plateau.

44:44

Like mathematically, it could

continue decreasing like this.

44:47

I mean, most people think

that it will probably

44:49

plateau at some point.

44:50

We don't know when.

44:54

So that's-- I'll talk more

about scaling laws now.

44:57

So why are scaling

laws really cool?

44:59

Imagine that I gave you--

45:02

you're very fortunate I gave

you 10,000 GPUs for this month.

45:05

What model will you train?

45:07

How do you even go about

answering that question?

45:09

And I mean, this

is a hypothetical,

45:12

but that's exactly what these

companies are faced with.

45:16

The old pipeline,

which was basically

45:19

tune hyperparameters

on the big models.

45:21

So let's say I have

30 days, I will train

45:24

30 models for one day each.

45:26

I will pick the best one and

that will be the final model

45:30

that I will use in production.

45:32

That means that the model

that I actually used

45:34

was only trained for one day.

45:36

The new pipeline is that you

first find a scaling recipe.

45:40

So you find something that

tells you, for example,

45:43

like one common thing

is that if you increase

45:45

the size of your model, you

should decrease your learning

45:46

rate.

45:47

So you find a

scaling recipe such

45:49

that you know if I increase

the size of my model,

45:52

here's what I should do

with some hyperparameters.

45:55

Then you tune your

hyperparameters

45:57

on smaller models

of different sizes.

46:00

Let's say I will say for

three days, of my 30 days,

46:03

I will train many

different models.

46:05

And I will do

hyperparameter tuning

46:07

on these small models,

each of different sizes.

46:09

Then I will fit a

scaling law and try

46:11

to extrapolate from these

smaller models, which

46:15

one will be the best if I

train it for much longer--

46:20

or sorry if I train

it for a larger model.

46:22

And then I will train

the final huge model

46:24

for 27 days instead

of just one day.

46:28

So the new pipeline

is not train things

46:31

or do hyperparameter tuning

on the real scale of the model

46:34

that you're going

to use in practice,

46:35

but do things on smaller

ones at different scales.

46:39

Try to predict how

well they will perform

46:41

once you make them bigger.

46:43

I will give-- I will give you a

very concrete example right now.

46:46

Let's say transformers

versus LSTMs.

46:49

Let's say you have

these 10,000 GPUs,

46:51

you are not sure which

one you should be using.

46:53

Should I be using a

transformer-based model

46:55

or LSTM-based model.

46:56

What I will do is I

will train transformers

46:58

at different scales.

47:00

So here you see different

parameters on the x-axis,

47:02

y-axis is my test loss.

47:04

I will then train different

LSTMs at different scales.

47:08

Once I have these points,

I will see oh it, kind of,

47:11

fits a scaling law.

47:12

I will fit my

scaling law and then

47:14

I will be able to predict if

I had 10 times more compute,

47:18

here's how well I would

perform for the LSTM.

47:21

It's actually slightly

less linear for the LSTM,

47:23

but you can probably try to

predict where you would end up.

47:26

And clearly from this

plot, you would see

47:28

that transformers are better.

47:30

One thing to notice when you

read these type of scaling laws

47:33

is that there are two

things that are important.

47:35

One is really your

scaling rate, which

47:40

is the slope of the-- the

slope of the scaling law.

47:45

The other thing

is your intercept,

47:49

you could start

worse, but actually

47:52

become better over time.

47:53

It just happens that

LSTMs are worse for both.

47:55

But I could show you

another one where things--

47:58

you can predict that actually

after a certain scale

48:01

you're better off using that

type of model than others.

48:04

So that's why scaling laws

are actually really useful.
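
The slope-versus-intercept point can be sketched by asking where two fitted log-log lines cross. The slopes and intercepts below are made up for illustration and do not correspond to any real architecture.

```python
def loss_at(slope, intercept, scale):
    """Loss predicted by a log-log line: log10(loss) = intercept + slope * log10(scale)."""
    from math import log10
    return 10 ** (intercept + slope * log10(scale))

def crossover_scale(slope_a, intercept_a, slope_b, intercept_b):
    """Scale at which the two fitted lines meet, or None if they are parallel."""
    if slope_a == slope_b:
        return None
    return 10 ** ((intercept_b - intercept_a) / (slope_a - slope_b))

# Hypothetical fits: model A starts worse (higher intercept) but scales faster (steeper slope).
slope_a, intercept_a = -0.10, 1.5
slope_b, intercept_b = -0.05, 1.0

crossover = crossover_scale(slope_a, intercept_a, slope_b, intercept_b)
print(crossover)
print(loss_at(slope_a, intercept_a, 1e12), loss_at(slope_b, intercept_b, 1e12))
```

Below the crossover the model with the better intercept wins. Above it the model with the better scaling rate wins, which is why both numbers matter when reading these plots.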

48:08

Any questions on that?

48:12

Yeah.

48:12

So these are all,

kind of, very--

48:15

how sensitive are these to small

differences in the architecture.

48:18

Like one like

transformer architecture

48:21

versus another

transformer architecture.

48:23

Do you think we have

to fit your own curve

48:26

and, basically, say like oh

scaling laws tell me this should

48:28

be some logarithmic function.

48:31

Like, let me

extrapolate that for

48:33

my own specific architecture.

48:35

Yeah, so usually, for

example, if you're an academic

48:38

and you want to-- now at

least that's pretty recent

48:40

and you want to propose

a new activation.

48:43

That's exactly what you will do.

48:45

You will fit a scaling law,

show another scaling law

48:47

with the standard

like, I don't know, GELU

48:49

and you will say

that it's better.

48:50

In reality, once you start

thinking about it in scaling

48:53

laws terms, you really

realize that actually

48:55

all the architecture

differences that we

48:57

can make, like the small,

minor ones, all they do

48:59

is maybe change a little

bit the intercept.

49:03

But really that doesn't

matter because just

49:05

train it for 10 hours longer or

like wait for the next computer

49:09

GPUs and these things

are really secondary.

49:12

Which is exactly why I was

telling you originally,

49:14

people spend too much time on

the architecture and losses.

49:17

In reality, these things

don't matter as much.

49:19

Data though.

49:19

If you use good data, you will

have much better scaling laws

49:23

than if you use bad data.

49:24

So that really matters.

49:27

Another really cool thing

you can do with scaling laws

49:29

is that you can ask yourself,

how to optimally allocate

49:33

training resources.

49:35

Should I train larger models.

49:37

Because we saw that it's better

when you train larger models,

49:39

but we saw that it's also

better when you use more data.

49:42

So which one should I do?

49:43

Should I just train on

more data, a smaller model,

49:46

or should I train a

larger model on less data?

49:49

So Chinchilla is a very famous

paper that first showed this.

49:53

The way they did it,

I want to give you

49:55

a little bit of a sense

of what these plots are.

49:58

Here you see training

loss again. On the x-axis,

50:00

you see parameter differences,

sorry, parameter size--

50:04

number of parameters.

50:04

So the size of the model.

50:06

And here all these

curves are what

50:07

we call isoFLOPs, which is that

all the models on this curve

50:13

have been trained with the

same amount of compute.

50:17

The way that you do

that is that you train--

50:19

you change.

50:20

Sorry, you vary the number of

tokens that were trained on

50:22

and the size of the models,

but you vary in such a way

50:25

that the total compute

is constant, OK.

50:27

So all these curves that you

see with different colors

50:29

have different amount of

compute that were trained on.

50:32

Then you take the best one

for each of those curves.

50:35

Once you have the best one

for each of those curves,

50:38

you can ask-- you can

plot how much flops it was

50:44

and which curve were you

on and how much parameters

50:47

did you actually use for

training that specific point.

50:50

You put that on the log

log scale again and now

50:55

you fit a scaling law again.
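
The procedure just described can be sketched in two steps: pick the lowest-loss model on each constant-compute curve, then fit a line through those optima on a log-log scale. All numbers below are made up for illustration and are not values from the paper.

```python
import math

# Made-up results: compute budget in FLOPs -> list of (parameters, final loss).
isoflop_runs = {
    1e18: [(3e7, 3.60), (1e8, 3.50), (3e8, 3.58)],
    1e20: [(3e8, 2.95), (1e9, 2.85), (3e9, 2.93)],
    1e22: [(3e9, 2.40), (1e10, 2.30), (3e10, 2.37)],
}

def best_params_per_budget(runs):
    """For each compute budget, the parameter count of the lowest-loss run."""
    return {budget: min(points, key=lambda p: p[1])[0] for budget, points in runs.items()}

def fit_loglog(xs, ys):
    """Least-squares line through (log10 x, log10 y); returns (slope, intercept)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    n = len(lx)
    mean_x, mean_y = sum(lx) / n, sum(ly) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
             / sum((a - mean_x) ** 2 for a in lx))
    return slope, mean_y - slope * mean_x

best = best_params_per_budget(isoflop_runs)
budgets = sorted(best)
slope, intercept = fit_loglog(budgets, [best[b] for b in budgets])

# Extrapolate the optimal model size to a budget larger than any that was run.
predicted_params = 10 ** (intercept + slope * math.log10(1e24))
print(best, slope, predicted_params)
```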

50:56

So now I have something

which tells me

50:59

if I want to train a model of 10

to the power 23 flops, here is

51:03

exactly the number of parameters

that I should be using.

51:06

100 B.

51:07

And you can do the same

thing with flops and tokens.

51:11

So now you can predict--

51:13

if I tell you exactly I

have one month of compute,

51:16

what size of model

should I be training?

51:18

Fit the scaling

law, and I tell you.

51:21

Of course that all

looks beautiful.

51:23

In reality like there's a

lot of small things of like,

51:26

should you be counting,

like, embedding parameters,

51:29

there's a lot of complexities.

51:30

But if you do things well,

these things actually do hold.

51:35

So the optimal ratio that

the Chinchilla paper

51:38

found is to use 20

tokens for every parameter

51:42

that you train.

51:44

So if you add one

more parameter,

51:45

you should train your thing on--

your model on 20 more tokens.
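
The 20-tokens-per-parameter rule can be turned into a model size for a given budget. This is a sketch under one extra assumption that is not stated in the talk: the common approximation that training compute is about 6 × parameters × tokens. The example budget is chosen to match Chinchilla's own configuration of 70 billion parameters and 1.4 trillion tokens.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6   # assumption: compute C is roughly 6 * N * D
TOKENS_PER_PARAM = 20       # ratio quoted in the talk

def compute_optimal_allocation(flops_budget):
    """Split a FLOPs budget into (parameters, tokens) with tokens = 20 * parameters."""
    # C = 6 * N * (20 * N) = 120 * N**2, so N = sqrt(C / 120).
    params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens

params, tokens = compute_optimal_allocation(5.88e23)
print(f"{params:.3g} parameters, {tokens:.3g} tokens")
```

The result depends on how parameters and FLOPs are counted, for example whether embedding parameters are included, so treat it as a rough guide.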

51:49

So one caveat here is that this

is optimal training resources.

51:53

So that is telling me if you

have 10 to the power 23 flops

51:57